Technogen India Pvt Ltd

https://technogeninc.com

Jobs at Technogen India Pvt Ltd

The recruiter has not been active on this job recently. You may apply but please expect a delayed response.


What your days and weeks will include.

- Agile scrum meetings, as appropriate, to track and drive individual and team accountability.

- Ad hoc collaboration sessions to define and adopt standards and best practices.

- A weekly ½-hour team huddle to discuss initiatives across the entire team.

- A weekly ½-hour team training session focused on understanding our team delivery model.

- Conduct technical troubleshooting, maintenance, and operational support for production code.

- Code! Test! Code! Test!

The skills that make you a great fit.

- 5 to 10 years of experience with Eagle Investment Accounting (a BNY Mellon product) as a domain expert/QA tester.

- Expert-level knowledge of ‘Capital Markets’ or ‘Investment Financial Services’.

- Must be self-aware and resilient, possess strong communication skills, and be able to lead.

- Strong experience with release testing in an agile model.

- Strong experience preparing test plans, test cases, and test summary reports.

- Strong experience executing smoke and regression test cases.

- Strong experience using and managing large and complex data sets.

- Experience with SQL Server and Oracle is desired.

- Good to have: knowledge of the DevOps delivery model, including its roles and technologies.

The recruiter has not been active on this job recently. You may apply but please expect a delayed response.

- Analyze client requirements and design customized Saviynt IGA solutions to meet their identity management needs

- Configure and implement Saviynt IGA platform features, including role-based access control, user provisioning, de-provisioning, certification campaigns, and entitlement management

- Collaborate with clients and internal stakeholders to understand business processes and translate them into effective IGA policies

- Assist in the integration of Saviynt IGA with existing identity and access management (IAM) systems, directories, and applications

- Conduct testing and troubleshooting to ensure the accuracy and effectiveness of the implemented IGA solution

The recruiter has not been active on this job recently. You may apply but please expect a delayed response.

Daily and monthly responsibilities

- Review and coordinate with business application teams on data delivery requirements.

- Develop estimates and proposed delivery schedules in coordination with the development team.

- Develop sourcing and data delivery designs.

- Review the data model, metadata, and delivery criteria for the solution.

- Review and coordinate with the team on test criteria and test execution.

- Contribute to the design, development, and completion of project deliverables.

- Complete in-depth data analysis and contribute to strategic efforts.

- Develop a complete understanding of how we manage data, with a focus on improving how data is sourced and managed across multiple business areas.

Basic Qualifications

- Bachelor’s degree.

- 5+ years of data analysis experience working on business data initiatives.

- Knowledge of Structured Query Language (SQL) and its use in data access and analysis.

- Proficiency in data management, including data analysis.

- Excellent verbal and written communication skills and high attention to detail.

- Experience with Python.

- Presentation skills in demonstrating system design and data analysis solutions.

The recruiter has not been active on this job recently. You may apply but please expect a delayed response.

.NET Core with SQL is preferred; alternatively, a very strong .NET developer with SQL.

The job involves a mix of activities: documentation, SQL support, .NET development, integration, and interaction with end users.

The recruiter has not been active on this job recently. You may apply but please expect a delayed response.

We are looking for a Full Stack Developer (remote position).

• Strong knowledge of HTML, CSS, JavaScript, and JavaScript frameworks (e.g., React, Angular, Vue).

• Experience with backend technologies such as Node.js, DynamoDB, and PostgreSQL.

• Experience with version control systems (e.g., Git)

• Knowledge of web standards and accessibility guidelines

• Familiarity with server-side rendering and SEO best practices

• Experience with AWS services, including AWS Lambda, API Gateway, and DynamoDB.

• Experience with DevOps: Infrastructure as Code, CI/CD, and test and deployment automation.

• Experience writing and maintaining a test suite throughout a project's lifecycle.

• Familiarity with Web Accessibility standards and technology

• Experience architecting and building GraphQL APIs and RESTful services.

The recruiter has not been active on this job recently. You may apply but please expect a delayed response.

- Data Steward:

The Data Steward will collaborate and work closely within the group's software engineering and business divisions. The Data Steward has overall accountability for the group's/division's data and reporting posture, responsibly managing data assets, data lineage, and data access in support of sound data analysis. This role requires a focus on data strategy, execution, and support for projects, programs, application enhancements, and production data fixes. The Data Steward makes well-thought-out decisions on complex or ambiguous data issues, establishes the data stewardship and information management strategy and direction for the group, and communicates effectively with individuals at various levels of the technical and business communities. This individual will become part of the corporate Data Quality and Data Management/Entity Resolution team supporting various systems across the board.

Primary Responsibilities:

- Responsible for data quality and data accuracy across all group/division delivery initiatives.

- Responsible for data analysis, data profiling, data modeling, and data mapping capabilities.

- Responsible for reviewing and governing data queries and DML.

- Accountable for the assessment, delivery, quality, accuracy, and tracking of any production data fixes.

- Accountable for the performance, quality, and alignment to requirements for all data query design and development.

- Responsible for defining standards and best practices for data analysis, modeling, and queries.

- Responsible for understanding end-to-end data flows and identifying data dependencies in support of delivery, release, and change management.

- Responsible for developing and maintaining an enterprise data dictionary aligned to the group's data assets and business glossary, and for defining and maintaining the group's data landscape, including overlays with the technology landscape, end-to-end data flows/transformations, and data lineage.

- Responsible for rationalizing the group's reporting posture through the definition and maintenance of a reporting strategy and roadmap.

- Partners with the data governance team to ensure data solutions adhere to the organization’s data principles and guidelines.

- Owns the group's data assets, including reports, the data warehouse, etc.

- Understand customer business use cases and translate them into technical specifications and a vision for how to implement a solution.

- Accountable for defining the performance tuning needs for all group data assets and managing the implementation of those requirements within the context of group initiatives as well as steady-state production.

- Partners with others on test data management and masking strategies and on the creation of a reusable test data repository.

- Responsible for solving data-related issues and communicating resolutions with other solution domains.

- Actively and consistently support all efforts to simplify and enhance the Clinical Trial Prediction use cases.

- Apply knowledge of analytic and statistical algorithms to help customers explore methods to improve their business.

- Contribute toward analytical research projects through all stages including concept formulation, determination of appropriate statistical methodology, data manipulation, research evaluation, and final research report.

- Visualize and report data findings creatively in a variety of visual formats that appropriately provide insight to the stakeholders.

- Achieve defined project goals within customer deadlines; proactively communicate status and escalate issues as needed.

Additional Responsibilities:

- Strong understanding of the Software Development Life Cycle (SDLC) with Agile Methodologies

- Knowledge and understanding of industry-standard/best-practice requirements-gathering methodologies.

- Knowledge and understanding of Information Technology systems and software development.

- Experience with data modeling and test data management tools.

- Experience in data integration projects.

- Good problem-solving and decision-making skills.

- Good communication skills within the team, site, and with the customer

Knowledge, Skills and Abilities

- Technical expertise in data architecture principles and design aspects of various DBMS and reporting concepts.

- Solid understanding of key DBMS platforms such as SQL Server and Azure SQL.

- Results-oriented, diligent, and works with a sense of urgency. Assertive and self-directed, takes responsibility for their own work, has a strong affinity for defining work in deliverables, and is willing to commit to deadlines.

- Experience in MDM tools like MS DQ, SAS DM Studio, Tamr, Profisee, Reltio etc.

- Experience in Report and Dashboard development

- Statistical and Machine Learning models

- Python (sklearn, numpy, pandas, gensim)

- Nice to Have:

- 1 year of ETL experience

- Natural language processing

- Neural networks and deep learning

- Experience with the Keras, TensorFlow, spaCy, NLTK, and LightGBM Python libraries

Interaction: Frequently interacts with subordinate supervisors.

Education: Bachelor’s degree (e.g., B.E.), preferably in Computer Science or another quantitative field related to the area of assignment. Professional certification related to the area of assignment may be required.

Experience: 7 years of pharmaceutical/biotech/life sciences experience; 5 years of clinical trials experience and knowledge; excellent documentation, communication, and presentation skills, including PowerPoint.

The recruiter has not been active on this job recently. You may apply but please expect a delayed response.

- Sr. Data Engineer:

Core Skills – Data Engineering, Big Data, PySpark, Spark SQL, and Python

Candidates with a prior Palantir Cloud Foundry or Clinical Trial Data Model background are preferred.

Major accountabilities:

- Responsible for data engineering, Foundry data pipeline creation, Foundry analysis and reporting, Slate application development, reusable code development and management, and integrating internal or external systems with Foundry for high-quality data ingestion.

- Good understanding of the Foundry Platform landscape and its capabilities.

- Performs the data analysis required to troubleshoot data-related issues and assists in resolving them.

- Defines company data assets (data models) and the PySpark and Spark SQL jobs that populate those data models.

- Designs data integrations and a data quality framework.

- Designs and implements integrations with internal and external systems and the F1 AWS platform using Foundry Data Connector or Magritte Agent.

- Collaborates with data scientists, data analysts, and technology teams to document and leverage their understanding of the Foundry integration with different data sources.

- Actively participates in agile work practices.

- Coordinates with the Quality Engineer to ensure that all quality controls, naming conventions, and best practices have been followed.

Desired Candidate Profile:

- Strong data engineering background

- Experience with Clinical Data Model is preferred

- Experience in

- SQL Server, Postgres, Cassandra, Hadoop, and Spark for distributed data storage and parallel computing

- Java and Groovy for our back-end applications and data integration tools

- Python for data processing and analysis

- Cloud infrastructure based on AWS EC2 and S3

- 7+ years of IT experience, 2+ years of experience with the Palantir Foundry Platform, and 4+ years of experience with a Big Data platform

- 5+ years of Python and PySpark development experience

- Strong troubleshooting and problem-solving skills

- BTech or master's degree in computer science or a related technical field

- Experience designing, building, and maintaining big data pipeline systems

- Hands-on experience with the Palantir Foundry Platform and Foundry custom app development

- Able to design and implement data integration between Palantir Foundry and external apps based on the Foundry data connector framework

- Hands-on programming experience, primarily in Python, R, Java, and Unix shell scripts

- Hands-on experience with AWS/Azure cloud platforms and stacks

- Strong in API-based architecture and concepts; able to build quick PoCs using API integration and development

- Knowledge of machine learning and AI

- Skill and comfort working in a rapidly changing environment with dynamic objectives and iteration with users.

- Demonstrated ability to continuously learn, work independently, and make decisions with minimal supervision

The recruiter has not been active on this job recently. You may apply but please expect a delayed response.

Similar companies

About the company

About Us

Incubyte is an AI-first software development agency built on the principles of software craftsmanship—where how we build is just as important as what we build. We partner with organizations across stages, from enterprises looking to scale and modernize to early-stage founders bringing new ideas to life.

At Incubyte, AI is deeply integrated across the software development lifecycle to drive speed, efficiency, and smarter outcomes. Guided by Software Craftsmanship values and Extreme Programming practices, we combine high velocity with disciplined engineering to deliver reliable, high-impact solutions.

We don’t just build software—we incubate dedicated engineering teams. From designing systems to shaping team structures and organizational strategy, we enable our clients to launch and scale products that are relevant today and resilient for the future.

Whether you’re scaling an existing product, building from scratch, or optimizing manual processes, we help you move faster with confidence:

- Scale and modernize your product

- Launch quickly and iterate continuously

- Automate processes for non-linear growth

- Build systems that are stable, predictable, and measurable

Our approach is rooted in ownership. As a DevOps-driven organization, our engineers take responsibility for the entire lifecycle—from development to release—ensuring quality at every step.

Founded by product professionals, we bring a strong product mindset into services. We’re driven by curiosity, continuous learning, and a passion for building great software the right way.

We’re always looking for people who care deeply about code, craftsmanship, and growth. Join us if you’re excited to build, learn, and make an impact.

Jobs: 5

About the company

Baker Street Fintech (Product Name: Cambridge Wealth) is a financial products company. We help build world-class fintech products for clients who want to manage their wealth on our platform. Founded by professionals with experience spanning PwC UK, banking, and technology firms, we are a financially stable, profitable, fast-growing company!

Jobs: 3

About the company

The Wissen Group was founded in the year 2000. Wissen Technology, a part of Wissen Group, was established in the year 2015. Wissen Technology is a specialized technology company that delivers high-end consulting for organizations in the Banking & Finance, Telecom, and Healthcare domains.

With offices in the US, India, the UK, Australia, Mexico, and Canada, we offer an array of services including Application Development, Artificial Intelligence & Machine Learning, Big Data & Analytics, Visualization & Business Intelligence, Robotic Process Automation, Cloud, Mobility, Agile & DevOps, and Quality Assurance & Test Automation.

Leveraging our multi-site operations in the USA and India and availability of world-class infrastructure, we offer a combination of on-site, off-site and offshore service models. Our technical competencies, proactive management approach, proven methodologies, committed support and the ability to quickly react to urgent needs make us a valued partner for any kind of Digital Enablement Services, Managed Services, or Business Services.

We believe that the technology and thought leadership that we command in the industry is the direct result of the kind of people we have been able to attract, to form this organization (you are one of them!).

Our workforce consists of 1000+ highly skilled professionals, with leadership and senior management executives who have graduated from top universities such as MIT, Wharton, the IITs, the IIMs, and BITS and who have rich work experience in some of the biggest companies in the world.

Wissen Technology has been certified as a Great Place to Work®. The technology and thought leadership that the company commands in the industry is the direct result of the kind of people Wissen has been able to attract. Wissen is committed to providing them the best possible opportunities and careers, which extends to providing the best possible experience and value to our clients.

Jobs: 495

About the company

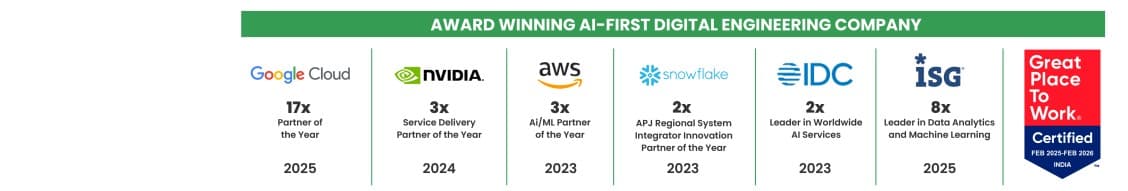

Quantiphi is an award-winning AI-first digital engineering company driven by the desire to reimagine and realize transformational opportunities at the heart of the business. Since its inception in 2013, Quantiphi has solved the toughest and most complex business problems by combining deep industry experience, disciplined cloud and data-engineering practices, and cutting-edge artificial intelligence research to achieve accelerated and quantifiable business results.

Jobs: 6

About the company

Lacroo Technologies Pty Ltd, incorporated in Australia, has emerged as a pioneering startup focused on transforming the way large-scale ($100m-$1b) civil construction projects are managed. Over the past two years, we have developed a unique no-code software solution tailored to meet the complex demands of our enterprise customers. This platform has been meticulously crafted with direct feedback from industry leaders, ensuring it not only meets but exceeds the operational needs of our clients.

Our commitment to innovation was recognized and bolstered by significant enterprise deals and a recent infusion of seed capital, setting the stage for our next ambitious phase.

As we transition from a no-code environment to a robust, full-code enterprise-grade software system, our goal is to enhance the customization and scalability of our product. The move to a full-stack MERN architecture marks a pivotal shift in our development strategy, allowing for deeper integration and more sophisticated features that our clients require. This transition is not just about upgrading our technology, but also about redefining the standards of project management software within the civil construction industry. By harnessing cutting-edge technology and fostering a culture of innovation and agility, we aim to build a suite of tools that not only improves project oversight but also sets new benchmarks for efficiency and user engagement.

Looking forward, Lacroo Technologies is poised to expand its influence and market reach. The upcoming development cycle involves creating new features and functionalities that have been identified as crucial by our enterprise customers but are currently unaddressed in the market. Our vision for the next five years is to become the leading provider of project management software for the civil construction industry, synonymous with innovation, reliability, and customer satisfaction. We are seeking dynamic individuals who are ready to join us on this journey, contributing to a project that promises not only professional growth and technological challenge but also a chance to shape the future of construction project management.

Jobs: 1

About the company

We are hiring for multiple clients

Jobs: 2
