Episource

https://episource.com

Jobs at Episource

The recruiter has not been active on this job recently. You may apply but please expect a delayed response.

- MSSQL, SSIS, Performance Tuning, Data modeling.

- Experience with NoSQL tools is a must

- Candidates should have exposure to writing scripts in Python, Node.js, MongoDB, or a similar tool

- MariaDB/MySQL, MongoDB, GitHub, Jira

- Understand requirements from the client and on-site team.

- Participate in preparing design and data modelling.

- Comfortable with SQL Stored procedures and queries development.

- Manage SQL Server through multiple product lifecycle / environments, from development to critical production systems.

- Apply industry-standard best practices for data modeling to ensure performance, integrity, and fit with requirements.

- Ability to analyze problems independently and resolve them on time.

About Us:

We’re looking to hire someone to help scale Machine Learning and NLP efforts at Episource. You’ll work with the team that develops the models powering Episource’s product focused on NLP-driven medical coding. Some of the problems include improving our ICD code recommendations, clinical named entity recognition, and information extraction from clinical notes.

This is a role for highly technical engineers who combine outstanding oral and written communication skills with the ability to code up prototypes and productionize them using a large range of tools, frameworks, and languages. Most importantly, they need to be able to autonomously plan and organize their work assignments based on high-level team goals.

What you will do at Episource:

You will be responsible for setting an agenda to develop and build machine learning platforms that positively impact the business, working with partners across the company including operations and engineering. You will be working closely with the machine learning team to design and implement back end components and services. You will be evaluating new technologies, enhancing the applications, and providing continuous improvements to produce high quality software.

Required Skills:

- Strong background in analytics, BI, or data science deployments, preferably with 2-6 years of experience

- Knowledge of React/Vue, HTML, CSS

- Experience building and consuming APIs

- Experience with MySQL, MongoDB, and the MEAN stack

- Knowledge of and experience with serverless architectures is a plus

- Hands-on experience with AWS or any major cloud service provider for deploying solutions

- Experience with Docker or Kubernetes in deploying solutions on the cloud

- Hands-on experience with Python, Apache Spark, and Big Data platforms to manipulate large-scale structured and unstructured datasets

- Fluent in data fundamentals: SQL, data manipulation using a procedural language, statistics, experimentation, and modeling

Product Analyst (Process Excellence Team, Episource)

Key Responsibilities and Deliverables

- Ensure timely release of features and bug fixes.

- Talk to the development team and ensure all roadblocks are cleared.

- Participate in user acceptance testing and undertake functional testing of new systems.

- Conduct due diligence (or innovation workshops) with teams to identify areas for Salesforce improvement.

- Interpret business needs and translate them into application and operational requirements, drawing on strong analytical and product management skills.

- Prepare requirements and act as a liaison between the development team and ops.

- Create stories in JIRA and ensure they are discussed with the dev team for proper sign-off.

- Prepare monthly, quarterly, and six-month plans for the product roadmap.

- Create product roadmaps after discussing with business heads.

- Ideate and define epics for the product and then define product functions.

- Each feature or new product development should result in cost-per-chart optimization or support a new business line.

- Work on other product lines associated with Salesforce.

- Based on business need, work on other product lines such as retrieval, coding, and the HRA business on Salesforce.

- Train customers on the products and their usage.

- Give proper training to end users on product usage and functionality.

- Prepare product training manuals during rollout.

Skills & Attributes required

- Technical Skills

- Statistical Analysis Tools and techniques

- Process Definition, Process Designing, Process Modelling and Simulation, Process and Program Management and BPR

- Mid- to large-scale transformation and system implementation projects

- Stakeholder Requirement Analysis

- Should have a good understanding of current technologies such as machine learning, natural language processing, and AWS.

- Strong Analytical Skills

- Data Exploration and Analysis

- Able to start from ambiguous problem statements, identify and access relevant data, make appropriate assumptions, perform insightful analysis, and draw conclusions relevant to the business problem

- Communication Skills

- Has the ability to communicate effectively to all the stakeholders

- Demonstrated ability to communicate complex technical problems in simple plain stories

- Ability to present information professionally and concisely with supporting data

- Creative Problem Solving and Decision Making

- Needs to be a self-initiator and should be able to work independently on solving complex business problems

- Needs to understand customer pain points and should have the ability to innovate processes

- Inspects processes meticulously and identifies value-adds, realigning processes for operational and financial efficiency

ABOUT EPISOURCE:

Episource has devoted more than a decade to building solutions for risk adjustment to measure healthcare outcomes. As one of the leading companies in healthcare, we have helped numerous clients optimize their medical records, data, and analytics to enable better documentation of care for patients with chronic diseases.

The backbone of our consistent success has been our obsession with data and technology. At Episource, all of our strategic initiatives start with the question - how can data be “deployed”? Our analytics platforms and datalakes ingest huge quantities of data daily, to help our clients deliver services. We have also built our own machine learning and NLP platform to infuse added productivity and efficiency into our workflow. Combined, these build a foundation of tools and practices used by quantitative staff across the company.

What’s our poison, you ask? We work with most of the popular frameworks and technologies, such as Spark, Airflow, Ansible, Terraform, Docker, and ELK. For machine learning and NLP, we are big fans of Keras, spaCy, scikit-learn, pandas, and NumPy. AWS and serverless platforms help us stitch these together to stay ahead of the curve.

ABOUT THE ROLE:

We’re looking to hire someone to help scale Machine Learning and NLP efforts at Episource. You’ll work with the team that develops the models powering Episource’s product focused on NLP driven medical coding. Some of the problems include improving our ICD code recommendations, clinical named entity recognition, improving patient health, clinical suspecting and information extraction from clinical notes.

This is a role for highly technical data engineers who combine outstanding oral and written communication skills with the ability to code up prototypes and productionize them using a large range of tools, algorithms, and languages. Most importantly, they need to be able to autonomously plan and organize their work assignments based on high-level team goals.

You will be responsible for setting an agenda to develop and ship data-driven architectures that positively impact the business, working with partners across the company including operations and engineering. You will use research results to shape strategy for the company and help build a foundation of tools and practices used by quantitative staff across the company.

During the course of a typical day with our team, expect to work on one or more projects around the following:

1. Create and maintain optimal data pipeline architectures for ML

2. Develop a strong API ecosystem for ML pipelines

3. Build CI/CD pipelines for ML deployments using GitHub Actions, Travis, Terraform, and Ansible

4. Design and develop distributed, high-volume, high-velocity, multi-threaded event processing systems

5. Apply software engineering best practices across the development lifecycle: coding standards, code reviews, source management, build processes, testing, and operations

6. Deploy data pipelines in production using Infrastructure-as-Code platforms

7. Design scalable implementations of the models developed by our Data Science teams

8. Big data and distributed ML with PySpark on AWS EMR, and more!

BASIC REQUIREMENTS

- Bachelor’s degree or greater in Computer Science, IT or related fields

- Minimum of 5 years of experience in cloud, DevOps, MLOps & data projects

- Strong experience with bash scripting, unix environments and building scalable/distributed systems

- Experience with automation/configuration management using Ansible, Terraform, or equivalent

- Very strong experience with AWS and Python

- Experience building CI/CD systems

- Experience with containerization technologies like Docker, Kubernetes, ECS, EKS or equivalent

- Ability to build and manage application and performance monitoring processes

Detailed Job Description / Essential Duties and Responsibilities:

The role requires deep expertise in delivering modern web application solutions on an open-source tech platform, as well as the ability to communicate how these solutions drive business value. Detailed responsibilities include:

- Lead the engineering team and ensure that customer requirements and needs across current and future targeted segments are met.

- Work with the Product Owner, US counterparts, and SMEs to understand the requirements and prioritize development efforts.

- Act as Scrum Master and manage development through the Agile Scrum framework. Activities include sprint planning, presiding over Scrum meetings, sprint retrospectives, publishing reports, etc.

- Create user stories and epics in the project management tool based on the requirements received from various stakeholders, including clients.

- Manage all aspects of engineering operations, including product design, development, testing, and deployment.

- Architect the applications and recommend the right technologies for the products to be built.

- Manage operational efforts for contracted clients and ensure that SLAs are met with the required quality.

- Manage the product support team and ensure that SLAs for response and resolution meet or exceed the customer’s expectations.

- Build, or guide developers in building, full-stack web applications at Internet scale.

- Review code and provide feedback to ensure timely delivery with the required quality.

- Programming in MEAN stack or similar open source technologies and framework

- Data Modelling using NoSQL and Relational Database management Systems

- UI / UX Design and Implementation

- Web development with Bootstrap, Angular Material, and other libraries

- Develop Data / File parsing programs using Python or other programming languages

- Create methods and algorithms for data analysis and optimization

- Develop curriculum for talent development, Knowledge Sharing, etc.

- Establish and maintain cooperative working relationships with all stakeholders involved.

- Willing, capable and excited to roll up the sleeves to code when needed.

- Exceptional expertise in one of the following areas:

- Backend / Front end Web Application Framework

- Serverless Architecture

- Microservices Platforms

- AWS Services

We’re looking to hire someone to help scale Machine Learning and NLP efforts at Episource. You’ll work with the team that develops the models powering Episource’s product focused on NLP-driven medical coding. Some of the problems include improving our ICD code recommendations, clinical named entity recognition, and information extraction from clinical notes.

This is a role for highly technical machine learning and data engineers who combine outstanding oral and written communication skills with the ability to code up prototypes and productionize them using a large range of tools, algorithms, and languages. Most importantly, they need to be able to autonomously plan and organize their work assignments based on high-level team goals.

You will be responsible for setting an agenda to develop and ship machine learning models that positively impact the business, working with partners across the company including operations and engineering. You will use research results to shape strategy for the company, and help build a foundation of tools and practices used by quantitative staff across the company.

What you will achieve:

- Define the research vision for data science, and oversee planning, staffing, and prioritization to make sure the team is advancing that roadmap

- Invest in your team’s skills, tools, and processes to improve their velocity, including working with engineering counterparts to shape the roadmap for machine learning needs

- Hire, retain, and develop talented and diverse staff through ownership of our data science hiring processes, brand, and functional leadership of data scientists

- Evangelise machine learning and AI internally and externally, including attending conferences and being a thought leader in the space

- Partner with the executive team and other business leaders to deliver cross-functional research work and models

Required Skills:

- Strong background in classical machine learning and machine learning deployments is a must, preferably with 4-8 years of experience

- Knowledge of deep learning & NLP

- Hands-on experience with TensorFlow/PyTorch, Scikit-Learn, Python, Apache Spark, and Big Data platforms to manipulate large-scale structured and unstructured datasets.

- Experience with GPU computing is a plus.

- Professional experience as a data science leader, setting the vision for how to most effectively use data in your organization. This could be through technical leadership with ownership over a research agenda, or developing a team as a personnel manager in a new area at a larger company.

- Expert-level experience with a wide range of quantitative methods that can be applied to business problems.

- Evidence you’ve successfully been able to scope, deliver and sell your own research in a way that shifts the agenda of a large organization.

- Excellent written and verbal communication skills on quantitative topics for a variety of audiences: product managers, designers, engineers, and business leaders.

- Fluent in data fundamentals: SQL, data manipulation using a procedural language, statistics, experimentation, and modeling

Qualifications

- Professional experience as a data science leader, setting the vision for how to most effectively use data in your organization

- Expert-level experience with machine learning that can be applied to business problems

- Evidence you’ve successfully been able to scope, deliver and sell your own work in a way that shifts the agenda of a large organization

- Fluent in data fundamentals: SQL, data manipulation using a procedural language, statistics, experimentation, and modeling

- Degree in a field that has very applicable use of data science / statistics techniques (e.g. statistics, applied math, computer science, OR a science field with direct statistics application)

- 5+ years of industry experience in data science and machine learning, preferably at a software product company

- 3+ years of experience managing data science teams, incl. managing/grooming managers beneath you

- 3+ years of experience partnering with executive staff on data topics

Similar companies

About the company

Appknox, a leading mobile app security solution HQ in Singapore & Bangalore was founded by Harshit Agarwal and Subho Halder.

Since its inception, Appknox has become one of the go-to security solutions with the most powerful plug-and-play security platform, enabling security researchers, developers, and enterprises to build safe and secure mobile ecosystems using a system-plus human approach.

Appknox offers VA+PT solutions ( Vulnerability Assessment + Penetration Testing ) that provide end-to-end mobile application security and testing strategies to Fortune 500, SMB and Large Enterprises Globally helping businesses and mobile developers make their mobile apps more secure, thus not only enhancing protection for their customers but also for their own brand.

During the course of 9 years, Appknox has scaled up to work with some major brands in India, South-East Asia, Middle-East, Japan, and the US and has also successfully enabled some of the top government agencies with its On-Premise deployments & compliance testing. Appknox helps 500+ Enterprises which includes 20+ Fortune 1000 and ministries/regulators across 10+ countries and some of the top banks across 20+ countries.

A champion of Value SaaS, with its customer and security-first approach Appknox has won many awards and recognitions from G2, and Gartner and is one of the top mobile app security vendors in its 2021 Application security Hype Cycle report.

Our forward-leaning, pioneering spirit is backed by SeedPlus, JFDI Asia, Microsoft Ventures, and Cisco Launchpad and a legacy of expertise that began at the dawn of 2014.

Jobs

3

About the company

We are a fast growing virtual & hybrid events and engagement platform. Gevme has already powered hundreds of thousands of events around the world for clients like Facebook, Netflix, Starbucks, Forbes, MasterCard, Citibank, Google, Singapore Government etc.

We are a SAAS product company with a strong engineering and family culture; we are always looking for new ways to enhance the event experience and empower efficient event management. We’re on a mission to groom the next generation of event technology thought leaders as we grow.

Join us if you want to become part of a vibrant and fast moving product company that's on a mission to connect people around the world through events.

Jobs

8

About the company

Find latest openings in Talentoj. Search & Apply now!

Jobs

7

About the company

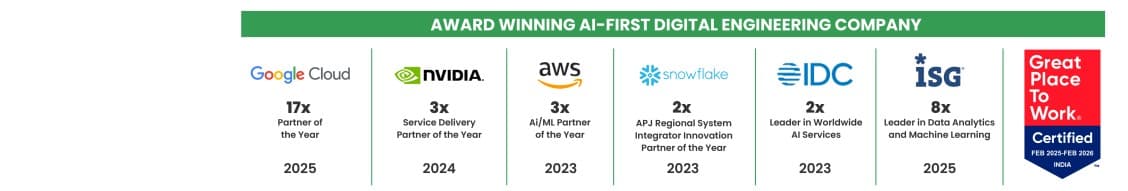

Quantiphi is an award-winning AI-first digital engineering company driven by the desire to reimagine and realize transformational opportunities at the heart of the business. Since its inception in 2013, Quantiphi has solved the toughest and most complex business problems by combining deep industry experience, disciplined cloud, and data-engineering practices, and cutting-edge artificial intelligence research to achieve accelerated and quantifiable business results.

Jobs

6

About the company

Jobs

2

About the company

Jobs

1

About the company

VDart Digital is a High-growth, Global Digital Solutions, Product Development and Professional Services firm, headquartered in Atlanta, GA, USA with global presence in Canada, Mexico, Belgium, UK, Malaysia, UAE & India with a proven track record in building next generation solutions and connected platforms to transform business and operations.

VDart Digital provides turnkey Digital Transformation, Mobility and Supply Chain Management Solutions using AI/ML/GenAI/Agentic AI, Intelligent Automation, Data Analytics, Blockchain, Cloud, IOT, Identity & Access management, UI/UX, Full-stack and mobile development, Integration etc.

VDart Digital’s Products include TestSamurAI, LendSmartAI; IDocLens, Fleet Management (VGo); Document Verification/Validation Platform (Vvalidate); Employee Engagement Mobile App (V Engage).

Jobs

15

About the company

Jobs

3

About the company

Don't fail your NAATI CCL Test. Access real 2026 exam questions, expert-led practice tests, with our Guaranteed strategy. Claim your 5 Australian PR points now!

Jobs

1

About the company

Jobs

1