Quantiphi

https://quantiphi.com

About

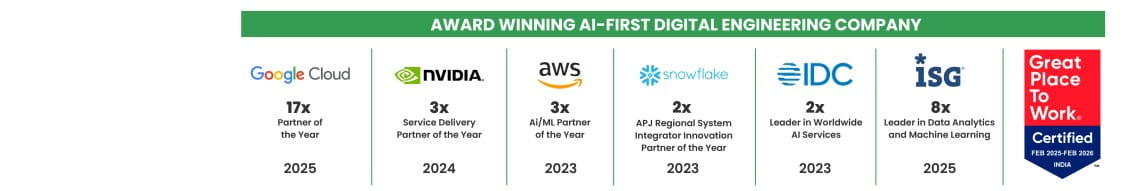

Quantiphi is an award-winning AI-first digital engineering company driven by the desire to reimagine and realize transformational opportunities at the heart of the business. Since its inception in 2013, Quantiphi has solved the toughest and most complex business problems by combining deep industry experience, disciplined cloud and data-engineering practices, and cutting-edge artificial intelligence research to achieve accelerated and quantifiable business results.

Bengaluru, Mumbai, and Trivandrum

Jobs at Quantiphi

Role & Responsibilities

- Develop and deliver automation software to build and improve platform functionality

- Ensure reliability, availability, and manageability of applications and cloud platforms

- Champion adoption of Infrastructure as Code (IaC) practices

- Design and build self-service, self-healing, monitoring, and alerting platforms

- Automate development and testing workflows through CI/CD pipelines (Git, Jenkins, SonarQube, Artifactory, Docker containers)

- Build and manage container hosting platforms using Kubernetes

Requirements

- Strong experience deploying and maintaining GCP cloud infrastructure

- Well-versed in service-oriented and cloud-based architecture design patterns

- Knowledge of cloud services including compute, storage, networking, messaging, and automation tools (e.g., CloudFormation/Terraform equivalents)

- Experience with relational and NoSQL databases (Postgres, Cassandra)

- Hands-on experience with automation/configuration tools (Puppet, Chef, Ansible, Terraform)

Additional Skills

- Strong Linux system administration and troubleshooting skills

- Programming/scripting exposure (Bash, Python, Core Java, or Scala)

- CI/CD pipeline experience (Jenkins, Git, Maven, etc.)

- Experience integrating solutions in multi-region environments

- Familiarity with Agile/Scrum/DevOps methodologies

We are seeking a hands-on and technically strong Generative AI Engineer to join the AI Platform Capabilities team as part of a Platform Implementation Partner engagement. In this role, you will design, build, and deploy enterprise-grade Generative AI platform capabilities across multiple Local Business Units operating on both GCP and Azure environments.

The role focuses on engineering production-ready and reusable GenAI components across the full AI stack, including Decision & Orchestration Layers, Execution Runtime Layers, and Build & Lifecycle Layers. You will work closely with implementation partners and Data & AI teams to ensure scalable, enterprise-compliant AI capabilities are delivered within project timelines. This is a deeply technical engineering role with strong emphasis on implementation and operationalization rather than client management.

Key Responsibilities:

- Design and implement enterprise-grade RAG pipelines including ingestion, chunking, embeddings, vector search, retrieval logic, and evaluation frameworks.

- Build multi-agent AI systems with orchestration, semantic routing, memory management, workflow execution, and agent communication capabilities.

- Develop centralized LLM Gateway solutions covering model routing, observability, caching, rate limiting, and policy enforcement.

- Implement scalable GenAIOps, AgentOps, and MLOps frameworks for deployment, monitoring, evaluation, and governance of AI systems.

- Build scalable AI services and REST APIs using Python and deploy them on cloud-native infrastructure.

- Implement AI governance, safety guardrails, audit logging, PII protection, and compliance frameworks.

- Integrate AI solutions into CI/CD pipelines and provision infrastructure using Infrastructure-as-Code practices.

Must-Have Skills:

- Strong hands-on experience with Generative AI, RAG pipelines, embeddings, vector databases, and retrieval evaluation.

- Experience building agentic AI systems including orchestration frameworks, semantic routers, reasoning engines, and memory management.

- Expertise with LLM Gateway implementations, tool integrations, event-driven architectures, and AI runtime systems.

- Solid understanding of GenAIOps, AgentOps, and MLOps principles and tooling.

- Strong experience with GCP AI/ML ecosystem including Vertex AI, Cloud Run, GKE, Pub/Sub, BigQuery, and Cloud Storage.

- Excellent Python programming skills for AI pipelines, APIs, and automation workflows.

- Experience implementing AI governance, safety, compliance, and monitoring frameworks.

- Hands-on experience with CI/CD pipelines and Terraform-based infrastructure automation.

Nice-to-Have Skills:

- Experience working in multi-cloud environments across GCP and Azure.

- Familiarity with LangChain, LlamaIndex, RAGAS, DeepEval, or Vertex AI Rapid Eval.

- Exposure to Knowledge Graphs, Document Intelligence platforms, and Agent Marketplace concepts.

- Experience with enterprise AI-ready data layers including Vector Stores, Feature Stores, and Embedding Infrastructure.

- BFSI domain knowledge including regulatory compliance and data sovereignty considerations.

- Google Cloud Professional Machine Learning Engineer certification.

- Experience working in large-scale enterprise transformation programs.

We are looking for a hands-on Associate / Architect – Generative AI to design, build, and deploy enterprise-grade GenAI platform capabilities across multiple business units. This role focuses on developing scalable and reusable AI components across the full stack, covering RAG systems, agent orchestration, LLM infrastructure, and GenAIOps on GCP (primary) and Azure.

Key Responsibilities

- Design and build production-ready Generative AI systems and platform components

- Develop and deploy scalable RAG pipelines including data ingestion, embeddings, retrieval, and APIs

- Build agentic AI systems with orchestration, routing, memory, and workflow management

- Develop and manage LLM infrastructure including model routing, caching, observability, and rate limiting

- Build scalable backend services and APIs for AI-driven applications

- Implement GenAIOps/MLOps practices including prompt management, evaluation, monitoring, and deployment

- Work extensively with GCP services such as Vertex AI, BigQuery, Cloud Run, GKE, and Pub/Sub

- Ensure AI governance, safety, compliance, PII protection, and auditability standards are maintained

- Design scalable enterprise AI architectures with strong focus on performance, reliability, and reusability

- Collaborate with cross-functional teams to deliver enterprise-grade AI solutions

- Mentor junior engineers and contribute to technical leadership, architecture discussions, and design reviews

Required Skills & Experience

- Strong hands-on experience building and deploying production-grade Generative AI and RAG systems

- Experience working on multi-agent or agentic AI architectures

- Strong proficiency in Python and backend/API development

- Hands-on experience with GCP AI/ML ecosystem including Vertex AI and BigQuery

- Solid understanding of LLM infrastructure, orchestration layers, and AI platform engineering

- Experience with CI/CD pipelines and Infrastructure as Code tools like Terraform

- Good understanding of GenAIOps/MLOps practices and model lifecycle management

- Strong system design and architecture experience for scalable AI platforms

- Exposure to enterprise application architecture and distributed systems

- Experience leading small engineering teams, mentoring developers, or owning technical delivery is preferred

- Understanding of AI safety, governance, and compliance best practices

Nice to Have

- Experience with LangChain, LlamaIndex, or similar frameworks

- Familiarity with RAG evaluation tools such as RAGAS or DeepEval

- Knowledge of Knowledge Graphs with RAG systems

- Experience working in multi-cloud environments (GCP + Azure)

- Exposure to BFSI or other regulated domains

What We’re Looking For

- Engineers who have built and deployed real-world GenAI systems at scale

- Strong backend engineering and systems-thinking mindset

- Ability to thrive in fast-paced enterprise environments

- Ownership mindset with strong communication and collaboration skills

We are looking for a hands-on Generative AI Engineer to design, build, and deploy enterprise-grade GenAI platform capabilities across multiple business units.

This role focuses on developing scalable, reusable AI components across the full stack—covering RAG systems, agent orchestration, LLM infrastructure, and GenAIOps—on GCP (primary) and Azure.

This is a core engineering role, not a research or client-facing position.

Key Responsibilities

- Design and build production-ready GenAI systems and platform components

- Develop and deploy RAG pipelines (data ingestion, embeddings, retrieval, APIs)

- Implement agent-based architectures (orchestration, routing, memory, workflows)

- Build and manage LLM infrastructure (model routing, caching, rate limiting, observability)

- Develop scalable APIs and services for AI capabilities

- Implement GenAIOps/MLOps practices (prompt management, evaluation, monitoring, deployment)

- Work with GCP services (Vertex AI, BigQuery, Cloud Run, GKE, Pub/Sub) to deploy solutions

- Ensure AI safety, governance, and compliance (PII protection, guardrails, auditability)

- Collaborate with cross-functional teams to deliver reusable, enterprise-grade solutions

Required Skills & Experience

- Strong hands-on experience in Generative AI and RAG systems (production level)

- Experience building multi-agent or agentic AI systems

- Proficiency in Python and backend/API development

- Hands-on experience with GCP AI/ML ecosystem (Vertex AI, BigQuery, etc.)

- Solid understanding of LLM infrastructure and orchestration layers

- Experience with CI/CD pipelines and Infrastructure as Code (Terraform)

- Knowledge of GenAIOps/MLOps practices and model lifecycle management

- Understanding of AI safety, governance, and compliance

Nice to Have

- Experience with LangChain, LlamaIndex, or similar frameworks

- Familiarity with RAG evaluation tools (RAGAS, DeepEval)

- Knowledge of Knowledge Graphs with RAG

- Experience in multi-cloud environments (GCP + Azure)

- Exposure to BFSI/regulated domains

What We’re Looking For

- Engineers who have built and deployed real-world GenAI systems at scale

- Strong backend and systems-thinking mindset

- Ability to work in fast-paced, enterprise environments

We are seeking a skilled Data Engineer to join the AI Platform Capabilities team supporting the UDP Uplift program.

In this role, you will design, build, and test standardized data and AI platform capabilities across a multi-cloud environment (Azure & GCP).

You will collaborate closely with AI use case teams to develop:

- Scalable data pipelines

- Reusable data products

- Foundational data infrastructure

Your work will support advanced AI solutions such as:

- GenAI

- RAG (Retrieval-Augmented Generation)

- Document Intelligence

Key Responsibilities

- Design and develop scalable ETL/ELT pipelines for AI workloads

- Build and optimize data pipelines for structured & unstructured data

- Enable context processing & vector store integrations

- Support streaming data workflows and batch processing

- Ensure adherence to enterprise data models, governance, and security standards

- Collaborate with DataOps, MLOps, Security, and business teams (LBUs)

- Contribute to data lifecycle management for AI platforms

Required Skills

- 5–7 years of hands-on experience in Data Engineering

- Strong expertise in Python and advanced SQL

- Experience with GCP and/or Azure cloud-native data services

- Hands-on experience with PySpark / Spark SQL

- Experience building data pipelines for ML/AI workloads

- Understanding of CI/CD, Git, and Agile methodologies

- Knowledge of data quality, governance, and security practices

- Strong collaboration and stakeholder management skills

Nice-to-Have Skills

- Experience with Vector Databases / Vector Stores (for RAG pipelines)

- Familiarity with MLOps / GenAIOps concepts (feature stores, model registries, prompt management)

- Exposure to Knowledge Graphs / Context Stores / Document Intelligence workflows

- Experience with DBT (Data Build Tool)

- Knowledge of Infrastructure-as-Code (Terraform)

- Experience in multi-cloud deployments (Azure + GCP)

- Familiarity with event-driven systems (Kafka, Pub/Sub) & API integrations

Ideal Candidate Profile

- Strong data engineering foundation with AI/ML exposure

- Experience working in multi-cloud environments

- Ability to build production-grade, scalable data systems

- Comfortable working in cross-functional, fast-paced environments

Responsible for developing, enhancing, modifying, and maintaining chatbot applications in the Global Markets environment. The role involves designing, coding, testing, debugging, and documenting conversational AI solutions, along with supporting activities aligned to the corporate systems architecture.

You will work closely with business partners to understand requirements, analyze data, and deliver optimal, market-ready conversational AI and automation solutions.

Key Responsibilities

- Design, develop, test, debug, and maintain chatbot and virtual agent applications

- Collaborate with business stakeholders to define and translate requirements into technical solutions

- Analyze large volumes of conversational data to improve chatbot accuracy and performance

- Develop automation workflows for data handling and refinement

- Train and optimize chatbots using historical chat logs and user-generated content

- Ensure solutions align with enterprise architecture and best practices

- Document solutions, workflows, and technical designs clearly

Required Skills

- Hands-on experience in developing virtual agents (chatbots/voicebots) and Natural Language Processing (NLP)

- Experience with one or more AI/NLP platforms such as:

  - Dialogflow, Amazon Lex, Alexa, Rasa, LUIS, Kore.AI

  - Microsoft Bot Framework, IBM Watson, Wit.ai, Salesforce Einstein, Converse.ai

- Strong programming knowledge in Python, JavaScript, or Node.js

- Experience training chatbots using historical conversations or large-scale text datasets

- Practical knowledge of:

  - Formal syntax and semantics

  - Corpus analysis

  - Dialogue management

- Strong written communication skills

- Strong problem-solving ability and willingness to learn emerging technologies

Nice-to-Have Skills

- Understanding of conversational UI and voice-based processing (Text-to-Speech, Speech-to-Text)

- Experience building voice apps for Amazon Alexa or Google Home

- Experience with Test-Driven Development (TDD) and Agile methodologies

- Ability to design and implement end-to-end pipelines for AI-based conversational applications

- Experience in text mining, hypothesis generation, and historical data analysis

- Strong knowledge of regular expressions for data cleaning and preprocessing

- Understanding of API integrations, SSO, and token-based authentication

- Experience writing unit test cases as per project standards

- Knowledge of HTTP, REST APIs, sockets, and web services

- Ability to perform keyword and topic extraction from chat logs

- Experience training and tuning topic modeling algorithms such as LDA and NMF

- Understanding of classical Machine Learning algorithms and appropriate evaluation metrics

- Experience with NLP frameworks such as NLTK and spaCy

Company Profile

Quantiphi is an award-winning Applied AI and Big Data software and services company, driven by a deep desire to solve transformational problems at the heart of businesses. Our signature approach combines groundbreaking machine-learning research with disciplined cloud and data-engineering practices to create breakthrough impact at unprecedented speed.

Some company highlights:

- Quantiphi has seen 2.5x growth YoY since its inception in 2013.

- Winner of the "Machine Learning Partner of the Year" award from Google for two consecutive years - 2017 and 2018.

- Winner of the "Social Impact Partner of the Year" award from Google for 2019.

- Headquartered in Boston, with 700+ data science professionals across different offices.

For more details, visit our Website (http://www.quantiphi.com/) or our LinkedIn Page (https://www.linkedin.com/company/quantiphi/).

Job Description

Role: Associate Tech Architect / Tech Architect – ReactJS + Python + AWS

Experience Level: 7-13 Years

Work location: Mumbai & Bangalore

We are looking for an experienced full-stack developer (ReactJS and Python) who can help create dynamic software applications for our clients. In this role, you will be responsible for gathering requirements from clients, writing and testing scalable code, and developing front-end and back-end components.

Technologies worked on:

ReactJS, Python, AWS

Requirement Description:

- Full Stack developer with experience in ReactJS, Python, API Gateway, Fargate and ECS

- Well-experienced in working with tools like Git, Maven, JFrog

- Should have a solid understanding of object-oriented programming (OOP)

- Strong experience with unit testing and integration testing, and with Agile-based development

- Expertise in developing enterprise-level web applications and REST and SOAP APIs using MicroServices, with demonstrable production-scale experience

- Demonstrate strong design and programming skills using JSON, Web Services, XML, XSLT, PL/SQL in Unix and Windows environments

- Strong background working with Linux/UNIX environments and strong Shell scripting experience

- Working knowledge of SQL or NoSQL databases

- Understand Architecture Requirements and ensure effective design, development, validation, and support activities

- Understanding of core AWS services, uses, and basic AWS architecture best practices

- Proficiency in developing, deploying, and debugging cloud-based applications using AWS

- Ability to use the AWS service APIs, AWS CLI, and SDKs to write applications

- Ability to identify key features of AWS services

- Identify bottlenecks and bugs, and recommend solutions by comparing the advantages and disadvantages of custom development

- Contribute to team meetings and troubleshoot development and production problems across multiple environments and operating platforms

- Demonstrate strong collaboration and communication skills within distributed project teams

- Continuously discover, evaluate, and implement new technologies to maximize development efficiency

Similar companies

About the company

We are a product engineering company that empowers other startups and enterprises by building simple and elegant software solutions. Through our expertise in the domains of AI and Enterprise Applications, we have helped brands such as Unilever, IndiaMART, GreytHR, Fyle, Skylark Drones, and others craft world-class products and improve their business. We build software for clients located across the globe from our headquarters in Bengaluru.

Codemonk is on a mission to transform the way industries work by leveraging the power of AI, Blockchain and IoT. There is something special when you know that every line of code that you write impacts thousands of human lives!

By joining us, you can expect new challenges every day. As a member of the team, you will help shape the company's future, fuel its growth, and define its culture.

Jobs

4

About the company

The Wissen Group was founded in the year 2000. Wissen Technology, a part of Wissen Group, was established in the year 2015. Wissen Technology is a specialized technology company that delivers high-end consulting for organizations in the Banking & Finance, Telecom, and Healthcare domains.

With offices in the US, India, the UK, Australia, Mexico, and Canada, we offer an array of services including Application Development, Artificial Intelligence & Machine Learning, Big Data & Analytics, Visualization & Business Intelligence, Robotic Process Automation, Cloud, Mobility, Agile & DevOps, and Quality Assurance & Test Automation.

Leveraging our multi-site operations in the USA and India and availability of world-class infrastructure, we offer a combination of on-site, off-site and offshore service models. Our technical competencies, proactive management approach, proven methodologies, committed support and the ability to quickly react to urgent needs make us a valued partner for any kind of Digital Enablement Services, Managed Services, or Business Services.

We believe that the technology and thought leadership that we command in the industry is the direct result of the kind of people we have been able to attract, to form this organization (you are one of them!).

Our workforce consists of 1000+ highly skilled professionals, with leadership and senior management executives who have graduated from top institutions such as MIT, Wharton, the IITs, the IIMs, and BITS, and who bring rich work experience from some of the biggest companies in the world.

Wissen Technology has been certified as a Great Place to Work®. The technology and thought leadership that the company commands in the industry is the direct result of the kind of people Wissen has been able to attract. Wissen is committed to providing them the best possible opportunities and careers, which extends to providing the best possible experience and value to our clients.

Jobs

499

About the company

Devcare Solution offers a best-in-class working environment, professional management, and opportunities to learn, bundled with exceptional rewards. It is ready to take on board all those who deserve a dream career. The team is full of good spirits, with each member complementing the others' brilliance in the workplace. With interesting projects to work on, you are sure to gain an enriching and positive experience.

Jobs

16

About the company

Improving is a leading IT professional services firm committed to helping companies achieve lasting success through modern technology. With core expertise in AI, Data, and Applications, we specialize in transforming legacy systems, building cloud-native platforms, and delivering intelligent, future-ready solutions for today’s complex business needs. Improving’s leaders are equally committed to fostering a great place to work that is inclusive and purpose-centered, empowering Improvers to bring their whole selves to work. Our team is known for its collaborative approach and long-term partnerships that prioritize measurable outcomes. By combining technical excellence with strategic insight, Improving enables all stakeholders to grow, adapt, and lead in an ever-evolving digital landscape.

Jobs

11

About the company

At Mudals Tech, we’re redefining how IT consulting and services are delivered. Unlike traditional firms that rely on headcount billing, our philosophy is simple: deliver innovation, automation, and real value that directly translates into cost savings and business growth for our clients.

We specialise in:

Cybersecurity – safeguarding businesses with proactive, adaptive, and resilient security strategies.

DevOps & DevSecOps – enabling faster, secure, and reliable software delivery.

Akamai Solutions – optimizing performance, security, and scalability for modern digital experiences.

ELK (Elasticsearch, Logstash, Kibana) – turning data into actionable insights with real-time observability.

Custom Development & AI – building intelligent, scalable solutions that drive innovation and efficiency.

What sets us apart is our commitment to optimization and automation. We constantly identify opportunities to eliminate inefficiencies, reduce manual effort, and free up our clients’ teams to focus on core business priorities.

With a strong, passionate, and highly skilled team, we pride ourselves on delivering outcomes faster, smarter, and with greater impact. Every engagement with us is designed as a win-win scenario—where our clients experience reduced costs, increased efficiency, and accelerated growth.

We’re not just another IT consulting company. We are your strategic partner in innovation—driving digital transformation with honesty, expertise, and a relentless focus on value creation.

Jobs

3