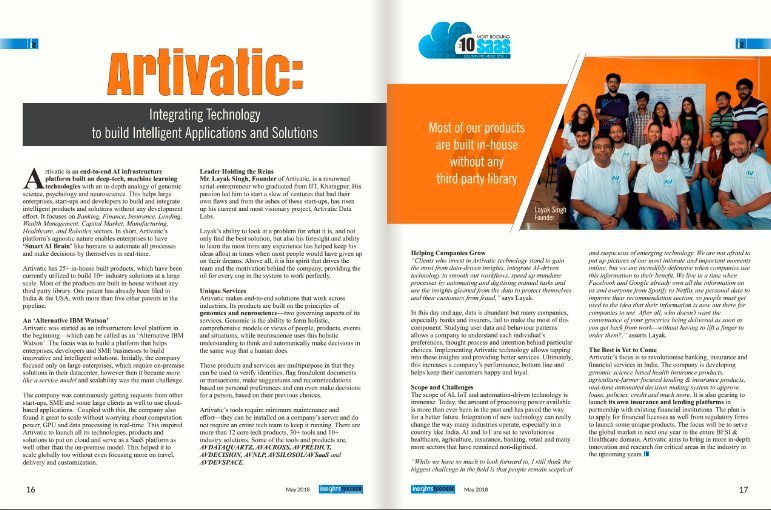

About Artivatic

Job Title : React.js Developer

Experience : 3+ Years

Location : Gurgaon (Work From Office – 5 Days a Week)

Employment Type : Full-time

Job Summary :

We are looking for a highly skilled and passionate React.js Developer with 3+ years of hands-on experience to join our growing team in Gurgaon.

The ideal candidate should have strong expertise in building modern, responsive, and high-performance web applications using React.js and related technologies.

Key Responsibilities :

- Develop, test, and maintain responsive web applications using React.js.

- Translate UI/UX designs into high-quality code.

- Build reusable components and front-end libraries for future use.

- Optimize applications for maximum speed and scalability.

- Collaborate with backend developers, designers, and other team members to deliver high-quality products.

- Participate in code reviews and follow best practices for clean and efficient code.

- Troubleshoot and debug application issues.

Required Skills & Qualifications :

- Minimum 3 years of hands-on experience in React.js.

- Strong proficiency in JavaScript (ES6+), HTML5 and CSS3.

- Experience with state management libraries and tools such as Redux or the Context API.

- Familiarity with RESTful APIs and modern front-end build pipelines and tools (Webpack, Babel, etc.).

- Good understanding of Git and version control workflows.

- Experience with responsive design and cross-browser compatibility.

- Ability to work independently and collaboratively in a fast-paced environment.

Nice to Have:

- Knowledge of TypeScript.

- Experience with Next.js or any SSR frameworks.

- Exposure to Agile/Scrum methodologies.

Perks & Benefits :

- Competitive salary

- Friendly and collaborative work environment

- Opportunity to work on exciting and cutting-edge projects

We are seeking a highly motivated and knowledgeable DADS Trainer to conduct hands-on training in Data Analytics and Data Science. The ideal candidate will have strong domain expertise, coding proficiency, and a passion for teaching concepts in Python, statistics, machine learning, data visualization, and tools like Excel, Power BI, and SQL.

We are a 20-year-old IT services company from Kolkata working in India and abroad. We primarily work as an SSP (Software Solutions Partner) and serve some of the leading business houses in the country on various software project implementations, especially on the SAP and Oracle platforms. We also work on government and semi-government projects as an outsourcing partner across India.

Designation: Full Stack Java Developer

Location: Mumbai, Pune & Kolkata (Remote)

Salary: Negotiable

- Current location:

- Expected location:

- Okay to relocate:

- Notice period:

- Overall experience:

- Total years of relevant experience in the above role:

- Current CTC (fixed + variable):

- Expected CTC (fixed + variable; is this negotiable?):

Technologies:

Experience Required: 3-7 years

Skills Required:

- Develop applications using Java/JEE, Microservices, Spring, Spring Boot, JPA, Hibernate, Spring MVC and Spring Data

- Nice to have: Spring Integration

- Very strong in data structures and algorithms

- Experienced in object-oriented design

- Must have strong experience in Java and the Spring Framework

- Excellent communication skills

- Must possess strong problem-solving and troubleshooting skills

- JSP, Servlets, HTML, JavaScript, CSS, Angular, React

- Hands-on experience developing RESTful microservices using Spring Boot, and web services using REST, JSON, SOAP and XML

- Linux/Unix operating systems

- SQL and databases such as MySQL, PostgreSQL and Oracle

- Nice to have: Cassandra, MongoDB

- Requirement analysis (use cases, user stories, etc.)

- Experience working with Agile methodology and Scrum

- Experience with CI/CD or DevOps platform technologies, e.g., Docker, Kubernetes, Git, Jenkins, Artifactory

- Proven ability to work cross-functionally

Company: SecuvyAI, backed by Dell Technology Ventures

Location: Hyderabad

About Secuvy:

At Secuvy, we believe privacy and data security will be a necessity for every global brand. Our mission is to provide best-in-class contextual data intelligence tools to monitor, automate and simplify data governance for security, compliance and legal teams. We hire out-of-the-box thinkers with the passion, creativity and perseverance to handle constantly expanding data sprawl and deliver impactful results to our customers. Secuvy’s team comprises experts with deep AI and security backgrounds who have launched products for Fortune 100 companies. We are backed by Dell Technology Ventures and top security VC firms in Silicon Valley. Learn more at www.secuvy.ai

About the Role:

Our enthusiasm for purpose-driven leadership is unwavering. We believe that every individual holds latent abilities waiting to be discovered, and that a bold, unconventional approach is necessary to unleash them. We have grand aspirations and never settle for mediocrity. We are meticulous in our attention to detail. Our desire to tackle challenging issues and achieve exceptional outcomes for our customers is what drives us to always strive for excellence.

Responsibilities:

- Design and develop high-quality, scalable, and performant software solutions using NodeJS and AWS services.

- Collaborate with cross-functional teams, including product managers, designers, and other engineers, to identify and solve complex business problems.

- Design and develop large-scale distributed systems that are reliable, resilient, and fault-tolerant.

- Write clean, well-designed, and maintainable code that is easy to understand and debug.

- Participate in code reviews and ensure that all code is of high quality and adheres to best practices.

- Troubleshoot and debug production issues and work with the team to develop and implement solutions.

- Stay up-to-date with new technologies and best practices in software engineering and cloud computing.

- Experience with data security or cybersecurity products is a big asset.

Requirements:

- Bachelor's or Master's degree in Computer Science, Software Engineering, or a related field.

- 2-4 years of professional experience building web applications using NodeJS and AWS services.

- Strong understanding of NodeJS and experience with server-side frameworks such as Express and NestJS.

- Strong experience in designing and building large-scale distributed systems, with a solid understanding of distributed computing concepts.

- Hands-on experience with AWS services, including EC2, S3, Lambda, API Gateway and RDS.

- Experience with containerization and orchestration technologies such as Docker and Kubernetes.

- Strong understanding of software engineering best practices, including agile development, TDD, and continuous integration and deployment.

- Hands-on experience with cloud technologies, including Kubernetes and Docker.

- Experience with NoSQL technologies such as MongoDB or Azure Cosmos DB.

- Experience with a distributed publish-subscribe messaging system such as Kafka or Redis Pub/Sub.

- Experience developing, configuring & deploying applications on Hapi.js/Express/Fastify.

- Comfortable writing tests in Jest.

- Excellent problem-solving and analytical skills, with the ability to identify and solve complex technical problems.

- Strong communication and collaboration skills, with the ability to work effectively in a team environment.

Why Work With Us?

- Join a rapidly growing startup with a passionate team of experts at the forefront of data privacy & security.

- Opportunity to play a key role in shaping the technical vision and strategy for Fortune 1000 Customers.

- Work in a dynamic, fast-paced environment where you can make an impact and drive change.

- Enjoy a competitive salary, benefits, and opportunities for growth and advancement.

- If you are a passionate, self-motivated, and experienced Senior NodeJS Software Engineer with expertise in AWS and large-scale system design, we would love to hear from you!

Regional Sales Manager

Company Profile

Ashnik is a leading enterprise open source solutions company in Southeast Asia and India, enabling organizations to adopt open source for their digital transformation goals. Founded in 2009, it offers a full-fledged Open Source Marketplace, Solutions, and Services – Consulting, Managed, Technical, Training. Over 200 leading enterprises so far have leveraged Ashnik’s offerings in the space of Database platforms, DevOps & Microservices, Kubernetes, Cloud, and Analytics.

As a team culture, Ashnik is a family for its team members. Each member brings a different perspective, new ideas and a diverse background. Yet together we all strive for one goal – to deliver the best solutions to our customers using open-source software. We passionately believe in the power of collaboration. Through an open platform of idea exchange, we create a vibrant environment for growth and excellence.

Responsibilities

- Create strong relationships with key client stakeholders at both senior and mid-management levels

- Create and articulate compelling value propositions around the use of open-source technology

- Understand Ashnik’s capabilities & services and effectively communicate offerings to the customers

- Create, implement and own a sales pipeline to manage customer lead intake, outbound activity, prioritization and metrics for measurement of deal status

- Understand the competitive landscape and market trends

- Establish sales objectives by forecasting and developing annual sales quotas for regions and territories; projecting expected sales volume and profit for existing and new products/technologies

- Desire to own projects and exceed expectations, with ability to find solutions and deliver results within a rapidly changing, entrepreneurial, technology-driven culture

- Ability to identify and solve client issues strategically

- Excellent interpersonal skills, with the ability to communicate effectively with management and cross-functional teams, for both technical and non-technical audiences

- Work with the sales, pre-sales, technical and operations teams to implement a targeted sales strategy

- Generate and maintain accurate Account and Opportunity plans

- Work with internal teams on behalf of clients to ensure the highest level of customer service

- Interface with technical support internally to resolve issues that directly impact partners

- Establish high levels of quality, accuracy and process consistency for the sales team

- Reporting and analytics

- Ensure that reports and other internal intelligence and insights are provided to the sales team

- Keen business sense, with the ability to find creative business-oriented solutions to problems.

Qualification and Skills

- Graduate with a minimum of 8-10 years of experience selling IT software products to large enterprises in South India

- Strong skills in enterprise sales cycle

- Familiarity with open source software is highly desirable

- Strong communication and presentation skills

Location: Bangalore

Package: up to 20L

wireframing, prototyping, visual design, interaction design, and usability testing

- An intuitive eye for customer needs beyond the obvious

- Excellent attention to detail

- Ability to collaborate with cross-functional team members

- Ability to collect and interpret both qualitative and quantitative feedback

- A well-rounded portfolio of client work, demonstrating a strong understanding of client objectives

- Ability to effectively communicate and persuade around design concepts

- Passion for design; not satisfied with the status quo and always thinking of ways to improve

- Creative problem-solving skills

- Dynamic, creative personality, effective at engaging and influencing a variety of audiences

- Provide assistance to product engineers when needed

- Recommend new tools and technologies by staying abreast of the latest trends and techniques

Education and Experience

- 3 to 5 years of professional experience as an Interactive Designer / User Experience Designer.

- You have a Bachelor's Degree or equivalent practical work experience in UX Design, HCI or related field

- You are an expert in using tools such as Figma, Sketch, Zeplin or similar tools for high-fidelity UI design

WORK FROM HOME / 1-year contract role

- Design a modern, highly responsive web-based user interface with the latest user-facing features

- Build reusable components and front-end libraries

- Translate wireframes and designs into high-quality code

- As a React.js Developer, you will be involved in projects from conception to completion, delivering work that is technologically sound and aesthetically impressive

- Maintain code and write automated tests to ensure the product is of the highest quality

- Optimize components for maximum performance across a vast array of web-capable devices and browsers

- Leverage assets and resources provided by the client in applications

- Write and optimize clean, well-documented code alongside its benchmarking products

REQUIREMENTS:

We are looking for experienced React JS developers who are proficient with JavaScript and TypeScript.

The primary focus of the selected candidates will be developing user interface components and implementing them following well-known React.js workflows (such as Next.js and Redux). You will ensure that these components and the overall application are robust and easy to manage.

You should be a team player committed to achieving perfection at every stage of development, including collaborative problem solving, sophisticated design and quality products.

TECHNICAL SKILLS:

- Proficiency in JavaScript and TypeScript, including DOM manipulation

- Knowledge of functional and object-oriented programming in JS/TS

- Experience with HTML, CSS, ECMAScript (ES6) and RESTful APIs (application programming interfaces)

- Experience with common front-end development tools such as Babel, Webpack, NPM, Yarn, etc.

- Knowledge of modern authorization mechanisms, such as JSON Web Tokens

- Knowledge of other frameworks such as Gatsby JS, Angular, Vue, etc. is a plus

- Knowledge of code versioning tools such as Git, SVN, etc.

- Understanding of automated testing tools such as Jest, Mocha, etc.

Position: IT Auditor

Experience: 4-12 Years

Location: Pune

Key Skills Required:

CISA, CISSP, CISM, IT Audit, Technology Audit, IT Infrastructure Audit, Application Security Audit, Information Security Audit, Cyber Security Audit, Cloud Security, Ethical Hacker

Additional keywords: Vulnerability Assessment, Penetration Testing, ITGC Testing, Cloud Computing

The IT Auditor is responsible for planning and performing audit assignments from start to finish: audit announcement, audit planning, field work, audit quality reviews and pre-closing/closing meetings with the respective Directors/Heads of Department, through to writing and finalizing the audit report and following up on audit actions. Additionally, the IT Auditor will:

• Evaluate IT systems, processes and projects in place;

• Determine risks to the Group’s information assets, and help identify methods to minimize those risks;

• Ensure information management processes are in compliance with IT-specific laws, policies and standards;

• Determine inefficiencies in IT systems, IT projects and associated management processes and

• Consult in IT projects, new initiatives and organizational frameworks.

Description

Audit Planning

1) Perform audits at Volkswagen Group entities and other concerned Volkswagen Group companies, with a focus on IT processes and keeping the associated business risks in mind.

2) Participate in the preparation of the audit objective and scope document, along with the audit schedule, based on the audit objective and timeline specified by the Head of IT Audit India Hub.

3) Participate in the preparation of the work program.

Audit Process

1) Prepare and conduct preparatory interviews with the Directors and Heads of the audited departments to identify the processes to be assessed during the audit.

2) Request and collect relevant audit data for analysis from respective business areas.

3) Periodically prepare the audit matrix to record the audit field work, and update the IT Audit Manager and the Head of IT Audit India Hub on the progress of the audit.

4) Define actions, including relevant controls, to mitigate the business risks identified based on the evidence provided during the audit.

5) Organize and conduct pre-closing meetings with business areas to agree upon audit observations and relevant actions.

6) Prepare and conduct closing meetings with the Directors/Heads of Department of the audited division to agree upon the audit observations, risks and proposed actions.

7) Prepare the draft audit report and submit the same to the IT Audit Manager and the Head of IT Audit India Hub for review.

8) Ensure that adequate documentation is prepared for the audit assignment and that peer review changes are made before the final audit report is released to the business area.

9) Contact business area to review the progress of the implementation of audit actions defined in the final audit report. Based on the review, write the status of the follow up and submit the same for upload in RIAS.

10) Obtain the certifications/qualifications needed to support the job requirements by attending relevant training.

11) Support the conduct of unscheduled audits, special investigations and audits arising from the anti-corruption system.

12) Share relevant knowledge among team members.

Role & Responsibilities

- Proven work experience as a Ruby on Rails developer with a good understanding of JavaScript, jQuery, HTML and CSS. The candidate will need to be hands-on with development, performance optimisation, the secure development process, usability and the product's coding standards.

- Strong RoR development experience, following best practices (test-driven development, continuous integration, Scrum, refactoring and code standards).

- Excellent reasoning ability to document, develop and test software, with a commitment to excellence and to delivering defect-free products before deployment.

- Should be able to work closely with all stakeholders to investigate, fix, optimise, test, and deploy high quality solutions.

- Should have a hands-on understanding of the technical design, implementation and maintenance of technical initiatives aimed at improving and scaling products.

- Self-motivated, able to rapidly incorporate new requirements and deliver successfully on their own.

- Effectively communicates with peers and stakeholders, and gathers and clarifies requirements from both technical and functional perspectives.

- Follows software development process; consistently innovates processes to improve individual and team productivity and quality.

- Strong analytical and problem-solving skills; participates in all activities with urgency, is result-oriented and has a strong work ethic.

- Experience with the Agile development lifecycle, with an excellent understanding of feature estimation and the ability to communicate issues and risks that may impact timelines or resources.

Desired Candidate Profile

- 4+ years of IT experience.

- At least 4 years commercial web development experience.

- At least 3 years of Ruby on Rails commercial development experience.

- Familiarity with relational and non-relational databases (Postgres, MySQL, MongoDB).

- REST API development.

- Familiarity with in-memory databases (Redis, Memcached).

- Web development: HTML/HTML5, JavaScript, AJAX, CSS, jQuery, HTTP, REST.

- Agile/Scrum development experience.

- Development practices - Rails test framework / TDD / BDD, Domain Driven Design, SOLID, refactoring, OOP design patterns

- Expertise in version control systems (Git).

- Candidates need to be comfortable handling some basic infrastructure management on AWS.

- Familiarity with React or Vue is a plus.