Infinium Associate

https://infiniumassociates.com

About


Jobs at Infinium Associate

The recruiter has not been active on this job recently. You may apply but please expect a delayed response.

Job Role: Teamcenter Admin

• Teamcenter and CAD (NX) Configuration Management

• Advanced debugging and root-cause analysis beyond L2 support

• Code fixes and minor defect remediation

• AWS knowledge, which is foundational to our Teamcenter architecture

• Experience supporting weekend and holiday code deployments

• Operational administration (break/fix, handling ticket escalations, problem management)

• Support for project activities

• Deployment and code release support

• Hypercare support following deployment, which is expected to onboard 1,000+ additional users


DataHavn IT Solutions is a company that specializes in big data and cloud computing, artificial intelligence and machine learning, application development, and consulting services. We aim to be a frontrunner in everything to do with data, and we have the expertise to transform customer businesses by making the right use of data.

About the Role:

As a Data Scientist specializing in Google Cloud, you will play a pivotal role in driving data-driven decision-making and innovation within our organization. You will leverage the power of Google Cloud's robust data analytics and machine learning tools to extract valuable insights from large datasets, develop predictive models, and optimize business processes.

Key Responsibilities:

- Data Ingestion and Preparation:

- Design and implement efficient data pipelines (e.g., with Dataflow) for ingesting, cleaning, and transforming data from various sources (e.g., databases, APIs, cloud storage) into Google Cloud Platform (GCP) data warehouses (BigQuery) or data lakes (Cloud Storage).

- Perform data quality assessments, handle missing values, and address inconsistencies to ensure data integrity.

- Exploratory Data Analysis (EDA):

- Conduct in-depth EDA to uncover patterns, trends, and anomalies within the data.

- Use visualization tools (e.g., Tableau, Looker) to communicate findings effectively.

- Feature Engineering:

- Create relevant features from raw data to enhance model performance and interpretability.

- Explore techniques like feature selection, normalization, and dimensionality reduction.

- Model Development and Training:

- Develop and train predictive models using machine learning algorithms (e.g., linear regression, logistic regression, decision trees, random forests, neural networks) on GCP platforms like Vertex AI.

- Evaluate model performance using appropriate metrics and iterate on the modeling process.

- Model Deployment and Monitoring:

- Deploy trained models into production environments using GCP's ML tools and infrastructure.

- Monitor model performance over time, identify drift, and retrain models as needed.

- Collaboration and Communication:

- Work closely with data engineers, analysts, and business stakeholders to understand their requirements and translate them into data-driven solutions.

- Communicate findings and insights in a clear and concise manner, using visualizations and storytelling techniques.

Required Skills and Qualifications:

- Strong proficiency in Python or R.

- Experience with Google Cloud Platform (GCP) services such as BigQuery, Dataflow, Cloud Dataproc, and Vertex AI.

- Familiarity with machine learning algorithms and techniques.

- Knowledge of data visualization tools (e.g., Tableau, Looker).

- Excellent problem-solving and analytical skills.

- Ability to work independently and as part of a team.

- Strong communication and interpersonal skills.

Preferred Qualifications:

- Experience with cloud-native data technologies (e.g., Apache Spark, Kubernetes).

- Knowledge of distributed systems and scalable data architectures.

- Experience with natural language processing (NLP) or computer vision applications.

- Certifications in Google Cloud Platform or relevant machine learning frameworks.

Similar companies

About the company

Building software to help e-commerce businesses sell better

Our company is dedicated to helping e-commerce merchants sell better through innovative software solutions. With a deep understanding of the e-commerce industry and a commitment to quality, we have developed a suite of products that improve the shopping experience for 100 million buyers worldwide every day.

Our passionate team of 15 is more than just a workforce; we're a family, growing together to better support our diverse range of over 25,000 global clients, from spirited startups to seasoned enterprises.

We've made our mark in the e-commerce world with our top-rated applications on the Shopify App Store, but our true pride lies in our inclusive, dynamic, and empathetic work culture. Here, every voice matters, every idea fuels growth, and every team member plays a crucial role in our collective success. Dive into the heart of our company ethos by exploring our culture book [https://cdn.starapps.studio/files/StarApps+Culture+Book.pdf] and see how we’re not just a company, but a community.

As the e-commerce landscape flourishes, we're not just keeping pace; we're setting the rhythm. We're on the lookout for individuals who are not just looking for a job, but a journey; a chance to make a real, heartfelt impact in this exciting industry. Be part of a journey that’s not just about growth, but about belonging, innovation, and shaping the future of e-commerce. Let's embark on this thrilling adventure together!

Jobs: 3

About the company

Who are we?

Trendlyne is a funded, profitable products startup in the financial markets space. We have cutting-edge analytics products built for Indian and US customers, for stock markets and mutual funds.

Our founders are IIT + IIM graduates, with strong tech and marketing experience. We have top finance and management experts on the Board of Directors.

What do we do?

We build best-in-class analytics for the US and Indian stock markets. Organic growth in our B2B and B2C products has already made the company profitable. We serve 1 billion+ API calls every month to B2B customers, and we have a B2C website and app.

Visit us at trendlyne.com, or look for the Trendlyne mobile app on the Google Play Store:

https://play.google.com/store/apps/details?id=com.trendlyne.markets

We are a great place to work

We have a culture where you are building something awesome, and your work makes a difference. Full-time employees get paid leave, parental leave, medical insurance, and employee stock options.

We invest in your learning and check in with you to help you meet your career goals. We keep regular hours and don't work on weekends.

Jobs: 4

About the company

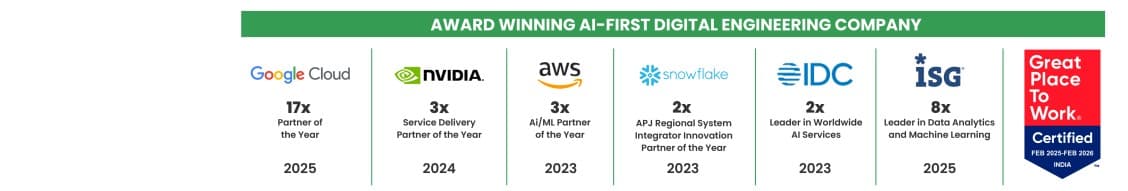

Quantiphi is an award-winning AI-first digital engineering company driven by the desire to reimagine and realize transformational opportunities at the heart of the business. Since its inception in 2013, Quantiphi has solved the toughest and most complex business problems by combining deep industry experience, disciplined cloud and data-engineering practices, and cutting-edge artificial intelligence research to achieve accelerated and quantifiable business results.

Jobs: 8


About the company

Welcome to Neogencode Technologies, an IT services and consulting firm that provides innovative solutions to help businesses achieve their goals. Our team of experienced professionals is committed to providing tailored services to meet the specific needs of each client.

Our comprehensive range of services includes software development, web design and development, mobile app development, cloud computing, cybersecurity, digital marketing, and skilled resource acquisition. We specialize in helping our clients find the right skilled resources to meet their unique business needs.

At Neogencode Technologies, we prioritize communication and collaboration with our clients, striving to understand their unique challenges and provide customized solutions that exceed their expectations. We value long-term partnerships with our clients and are committed to delivering exceptional service at every stage of the engagement.

Whether you are a small business looking to improve your processes or a large enterprise seeking to stay ahead of the competition, Neogencode Technologies has the expertise and experience to help you succeed. Contact us today to learn more about how we can support your business growth and provide skilled resources to meet your business needs.

Jobs: 400

About the company

Are you ready to be at the forefront of the AI revolution? Moative is your gateway to reshaping industries through cutting-edge Applied AI Services and innovative Venture Labs.

Moative is an AI company that focuses on automating tasks, compressing workflows, predicting demand, pricing intelligently, and delighting customers. It designs AI roadmaps, builds co-pilots, and creates predictive AI solutions for companies in energy, utilities, packaging, commerce, and other primary industries.

🚀 What We Do

At Moative, we're not just using AI – we're redefining its potential. Our mission is to empower businesses in energy, utilities, packaging, commerce, and other primary industries with AI solutions that drive unprecedented productivity and growth.

🔬 Our Expertise:

- Design tailored AI roadmaps

- Build intelligent co-pilots for specialists

- Develop predictive AI solutions

- Launch AI micro-products through Moative Labs

💡 Why Moative?

- Innovation at Core: We're constantly pushing the boundaries of AI technology.

- Industry Impact: Our solutions directly influence the cost of goods sold, helping clients exceed industry-average profit margins.

- Customized Approach: We fine-tune fundamental AI models to create unique, intelligent systems for each client.

- Continuous Learning: Our systems evolve and improve, ensuring long-term value.

🧑‍🦰 Founding Team

Shrikanth and Ashwin, IIT Madras alumni, have been riding technology waves since the dotcom era. Our latest venture, PipeCandy (data and predictions on 12 million e-commerce sellers), was acquired in 2021. We have built analytical models for industrial giants, advised enterprise AI platforms on competitive positioning, and built a 70-member AI team for our portfolio companies since 2023.

Jobs: 1


About the company

Sanglob Business Services Pvt. Ltd. is a Pune-based IT consulting and staffing company established in 2018. We specialize in digital transformation, cloud, AI solutions, software development, and talent augmentation. We partner with global clients across multiple industries to deliver reliable technology solutions and top talent with a customer-first approach.

Jobs: 3

About the company

Healthcare AI return on investment statistics and performance metrics dataset

Jobs: 1