Codemind Staffing Solutions

https://codemind.in

Jobs at Codemind Staffing Solutions

Key Responsibilities

Architect and implement enterprise-grade Lakehouse solutions using Databricks

Design and deliver scalable batch and real-time data pipelines using Apache Spark (PySpark/SQL)

Build ETL/ELT pipelines, incremental data loads, and metadata-driven ingestion frameworks

Implement and optimize Databricks components: Delta Lake, Delta Live Tables, Auto Loader, Structured Streaming, and Workflows

Design large-scale data warehousing solutions with 3NF and dimensional modeling

Establish data governance, security, and data quality frameworks, including Unity Catalog

Lead ML lifecycle management using MLflow and drive AI use cases (RAG, AI/BI)

Manage cloud-native deployments on Microsoft Azure and integrate with enterprise systems (e.g., ServiceNow)

Drive CI/CD, DevOps practices, and performance optimization of Spark workloads

Provide technical leadership, mentor teams, and ensure successful delivery

Collaborate with stakeholders to translate business requirements into scalable solutions

Required Skills & Experience

10+ years in Data Engineering / Analytics / AI with strong delivery ownership

Deep expertise in Databricks ecosystem (Notebooks, Delta Lake, Workflows, AI/BI, Apps, Genie)

Strong hands-on experience with:

Apache Spark (performance tuning & scalability)

Python and SQL

Proven experience in:

Solution architecture and large-scale data platforms

Data warehousing and advanced data modeling

Batch and real-time processing systems

Experience with:

Azure Databricks and Azure data services

MLflow and MLOps practices

ServiceNow or enterprise integrations

Exposure to AI technologies (RAG, LLM-based solutions)

Strong stakeholder management and leadership skills

Certifications (Preferred)

Databricks certifications aligned to data engineering and AI tracks, such as:

Databricks Certified Data Engineer Associate (validates foundational ETL, Spark, and Lakehouse capabilities)

Databricks Certified Data Engineer Professional (advanced expertise in pipeline design, optimization, and governance)

Certifications in Databricks Machine Learning or Generative AI tracks (e.g., ML Associate / Professional) for AI-driven use cases

Relevant cloud certifications in Microsoft Azure or Amazon Web Services for platform deployment and architecture

Role Summary

We are looking for a highly skilled AI Engineer / AI Architect to design, develop, and deploy scalable AI solutions. The ideal candidate will have strong expertise in building enterprise Generative AI solutions, along with the ability to architect end-to-end AI systems aligned with business objectives.

Key Responsibilities

Design and implement end-to-end AI/Gen AI solutions.

Architect scalable and secure AI systems using cloud platforms.

Work on Generative AI use cases including LLMs, RAG, workflows, agentic AI, prompt engineering, LLM-as-Judge, and fine-tuning.

Collaborate with cross-functional teams and customers to translate business requirements into AI solutions.

Have working knowledge of the SDLC process and understand DevOps practices and workflows.

Stay updated with emerging AI trends and evaluate new tools/technologies.

Mentor junior engineers and guide best practices in AI development.

Required Skills

Strong proficiency in Python and AI/ML tools such as Google ADK, LangGraph, and LangChain.

Experience configuring LLMs in AWS Bedrock, Azure Foundry, or Databricks, and setting up LLMOps for production-grade AI systems.

Hands-on experience with Generative AI (LLMs, embeddings, prompt engineering).

Understanding of the Agile process and involvement in technical scoping and estimation.

Strong problem-solving and analytical skills.

Experience working on production-grade AI systems.

Nice to Have

Certifications in Gen AI/AI/ML in Azure/Databricks platforms.

Foundational knowledge of Azure Cloud.

Experience handling enterprise customers and enterprise AI solutions is an added advantage.

Key responsibilities

• Design, build, and maintain robust CI/CD pipelines using Azure DevOps Services (Azure Pipelines) and Git-based workflows.

• Implement and manage infrastructure as code (IaC) using ARM templates, Bicep, and/or Terraform for repeatable environment provisioning.

• Containerize applications (Docker) and manage container orchestration platforms such as AKS (Azure Kubernetes Service).

• Automate build, test, release, and rollback processes; integrate automated testing and quality gates into pipelines.

• Monitor and improve platform reliability and observability using logging and monitoring tools (e.g., Azure Monitor, Application Insights, Prometheus, Grafana).

• Drive platform security and compliance through pipeline controls, secrets management (Key Vault / Vault), and secure configuration practices.

• Implement cost-optimization and governance for Azure resources (tags, policies, budgets).

• Troubleshoot build/release failures, production incidents, and performance bottlenecks; perform root-cause analysis and implement permanent fixes.

• Mentor developers in Git workflows, pipeline authoring, best practices for IaC, and cloud-native design.

• Maintain clear documentation: runbooks, deployment playbooks, architecture diagrams, and pipeline templates.

Required skills & experience

• 4+ years hands-on experience working with Azure and cloud-native application delivery.

• Deep experience with Azure DevOps (Repos, Pipelines, Artifacts, Boards).

• Strong IaC skills with Terraform, ARM templates, or Bicep.

• Solid experience with CI/CD design and YAML pipeline authoring.

• Practical knowledge of containerization (Docker) and Kubernetes — preferably AKS.

• Scripting skills: PowerShell, Bash, and/or Python for automation.

• Experience with Git workflows (branching strategies, PRs, code reviews).

• Familiarity with configuration management and secrets management (Azure Key Vault, HashiCorp Vault).

• Understanding of networking, identity (Azure AD), and security fundamentals in Azure.

• Strong troubleshooting, debugging, and incident response skills.

• Good collaboration and communication skills; ability to work across teams.

Certification

AZ-400 (Microsoft Certified: DevOps Engineer Expert), AZ-104, AZ-305, or Terraform Associate.

Key responsibilities

• Design, build, and maintain robust CI/CD pipelines using Azure DevOps Services (Azure Pipelines) and Git-based workflows.

• Implement and manage infrastructure as code (IaC) using ARM templates, Bicep, and/or Terraform for repeatable environment provisioning.

• Containerize applications (Docker) and manage container orchestration platforms such as AKS (Azure Kubernetes Service).

• Automate build, test, release, and rollback processes; integrate automated testing and quality gates into pipelines.

• Monitor and improve platform reliability and observability using logging and monitoring tools (e.g., Azure Monitor, Application Insights, Prometheus, Grafana).

• Drive platform security and compliance through pipeline controls, secrets management (Key Vault / Vault), and secure configuration practices.

• Implement cost-optimization and governance for Azure resources (tags, policies, budgets).

• Troubleshoot build/release failures, production incidents, and performance bottlenecks; perform root-cause analysis and implement permanent fixes.

• Mentor developers in Git workflows, pipeline authoring, best practices for IaC, and cloud-native design.

• Maintain clear documentation: runbooks, deployment playbooks, architecture diagrams, and pipeline templates.

Required skills & experience

• 5+ years hands-on experience working with Azure and cloud-native application delivery.

• Deep experience with Azure DevOps (Repos, Pipelines, Artifacts, Boards).

• Strong IaC skills with Terraform, ARM templates, or Bicep.

• Solid experience with CI/CD design and YAML pipeline authoring.

• Practical knowledge of containerization (Docker) and Kubernetes — preferably AKS.

• Scripting skills: PowerShell, Bash, and/or Python for automation.

• Experience with Git workflows (branching strategies, PRs, code reviews).

• Familiarity with configuration management and secrets management (Azure Key Vault, HashiCorp Vault).

• Understanding of networking, identity (Azure AD), and security fundamentals in Azure.

• Strong troubleshooting, debugging, and incident response skills.

• Good collaboration and communication skills; ability to work across teams.

Certification

AZ-400 (Microsoft Certified: DevOps Engineer Expert), AZ-104, AZ-305, or Terraform Associate.

Position: Dot Net Developer

Job Profile: We are seeking a highly motivated and experienced .NET Core Developer to join our team. The ideal candidate will have a strong background in designing, developing, and maintaining web applications and APIs using .NET Core. As part of our dynamic team, you will work on various projects that involve creating scalable and high-performing applications for our clients.

Responsibilities:

Design, develop, and maintain RESTful APIs using .NET Core and related technologies.

Develop, test, and deploy web applications that meet functional and non-functional business requirements.

Collaborate with front-end developers to integrate user-facing elements with server-side logic.

Write clean, scalable, and efficient code following best practices in software development.

Participate in code reviews and design discussions, and contribute to continuous improvement processes.

Troubleshoot and debug applications and APIs to optimize performance.

Work with databases such as SQL Server or NoSQL databases.

Implement security and data protection measures.

Collaborate with cross-functional teams, including QA, DevOps, and Project Managers, to ensure successful project delivery.

Maintain proper documentation of code, processes, and functionality.

Skills:

3+ years of hands-on experience with .NET Core in API and web application development.

Proficiency in C# and ASP.NET Core for building server-side applications.

Strong understanding of RESTful API design, development, and best practices.

Experience with front-end technologies such as HTML5, CSS3, and JavaScript frameworks (e.g., React, Angular, or Vue.js).

Solid understanding of Entity Framework Core or other ORM tools.

Experience with SQL Server or other relational databases.

Familiarity with cloud platforms like Azure or AWS is a plus.

Knowledge of microservices architecture and containerization technologies (e.g., Docker, Kubernetes) is preferred.

Familiarity with version control systems like Git.

Experience with CI/CD pipelines and automated testing frameworks is a plus.

Strong problem-solving and analytical skills.

Excellent communication and teamwork abilities.

Bachelor’s degree in Computer Science, Information Technology, or a related field (or equivalent work experience).

Job Title:

Senior Full Stack Developer / Technical Lead – .NET & React

Experience:

10+ Years

Location: Chennai – T.Nagar / WFO

Job Summary:

We are looking for a highly experienced Senior Full Stack Developer / Technical Lead with strong expertise in .NET technologies and React. The ideal candidate should be comfortable handling multiple projects simultaneously, possess excellent communication skills, and be capable of leading and mentoring a team while delivering scalable, high-quality solutions.

Key Responsibilities:

· Design, develop, and maintain scalable web applications using .NET (C#, ASP.NET Core, Web API) and React

· Lead end-to-end project execution across multiple parallel projects

· Handle project switching efficiently with minimal ramp-up time

· Participate in architecture, design, and technical decision-making

· Manage, mentor, and guide a team of developers

· Conduct code reviews, ensure best practices, and maintain coding standards

· Collaborate with Product Owners, QA, UI/UX, and Stakeholders

· Ensure performance optimization, security, and application stability

· Take ownership of delivery timelines and technical quality

· Support production issues and provide root cause analysis

Required Technical Skills:

· Strong hands-on experience in:

o C#, ASP.NET, ASP.NET Core, Web API

o React.js, Redux / Context API

o HTML, CSS, JavaScript, TypeScript

· Experience with RESTful APIs, Microservices (preferred)

· Database expertise: SQL Server (mandatory), NoSQL (added advantage)

· Experience with Entity Framework / Dapper

· Familiarity with Azure / AWS (preferred)

· Knowledge of CI/CD pipelines, Git, DevOps practices

· Understanding of Agile / Scrum methodologies

Leadership & Soft Skills:

· Excellent verbal and written communication skills

· Proven experience in team handling and mentoring

· Strong problem-solving and decision-making abilities

· Ability to manage multiple stakeholders and priorities

· Ownership mindset and accountability-driven approach

Nice to Have:

· Experience working in product-based or fast-paced service environments

· Exposure to high-availability and scalable systems

· Client-facing experience

Similar companies

About the company

Find latest openings in Talentoj. Search & Apply now!

Jobs

8

About the company

The Wissen Group was founded in the year 2000. Wissen Technology, a part of Wissen Group, was established in the year 2015. Wissen Technology is a specialized technology company that delivers high-end consulting for organizations in the Banking & Finance, Telecom, and Healthcare domains.

With offices in US, India, UK, Australia, Mexico, and Canada, we offer an array of services including Application Development, Artificial Intelligence & Machine Learning, Big Data & Analytics, Visualization & Business Intelligence, Robotic Process Automation, Cloud, Mobility, Agile & DevOps, Quality Assurance & Test Automation.

Leveraging our multi-site operations in the USA and India and availability of world-class infrastructure, we offer a combination of on-site, off-site and offshore service models. Our technical competencies, proactive management approach, proven methodologies, committed support and the ability to quickly react to urgent needs make us a valued partner for any kind of Digital Enablement Services, Managed Services, or Business Services.

We believe that the technology and thought leadership that we command in the industry is the direct result of the kind of people we have been able to attract, to form this organization (you are one of them!).

Our workforce consists of 1000+ highly skilled professionals, with leadership and senior management executives who have graduated from Ivy League Universities like MIT, Wharton, IITs, IIMs, and BITS and with rich work experience in some of the biggest companies in the world.

Wissen Technology has been certified as a Great Place to Work®. The technology and thought leadership that the company commands in the industry is the direct result of the kind of people Wissen has been able to attract. Wissen is committed to providing them the best possible opportunities and careers, which extends to providing the best possible experience and value to our clients.

Jobs

497

About the company

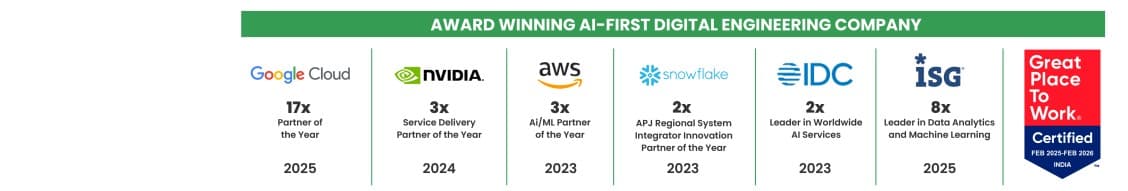

Quantiphi is an award-winning AI-first digital engineering company driven by the desire to reimagine and realize transformational opportunities at the heart of the business. Since its inception in 2013, Quantiphi has solved the toughest and most complex business problems by combining deep industry experience, disciplined cloud, and data-engineering practices, and cutting-edge artificial intelligence research to achieve accelerated and quantifiable business results.

Jobs

6

About the company

Jobs

2

About the company

Wama Technology integrates state-of-the-art technology smoothly to promote corporate success, providing a comprehensive “One-Stop Solution” for all digital demands, from cloud services, artificial intelligence, machine learning, and mobile and web app development technologies. Wama Technologies offers customized solutions that enable businesses to prosper in the digital era. At Wama Technology, our team prioritizes innovation, user experience, and client happiness to provide digital transformation. Wama Technology assists companies in improving user interaction, automatization processes, or delivering new ideas through the strategic use of technology.

Jobs

10

About the company

At PeopleX Ventures, we take great pride in our role as a recruiting partner, dedicated to fulfilling the unique staffing needs of our clients across levels for both technical and non-technical domains, as well as, hiring CXO and CXO -1 across functions and roles, where we ensure we take up only a limited number of roles so that we can deliver successful outcomes.

Our distinctive strength is derived from our exceptional freelance team members, some of whom possess over a decade of valuable experience in corporate and consulting positions, hiring for organizations such as Google, Meta, Flipkart, Intuit, Adobe, Microsoft, Walmart PLUS many early/late stage startups. We as a team carry varied strengths hiring across engineering, product, sales & marketing, finance, HR, etc across levels. Our clientele includes startup organizations (Pre-Series, Series A, B, C, D) and product companies. However, we continue to experiment with organizations we can support.

Jobs

35

About the company

BPO Hirings

Jobs

20

About the company

Jobs

16

About the company

Sanglob Business Services Pvt. Ltd. is a Pune-based IT consulting and staffing company established in 2018. We specialize in digital transformation, cloud, AI solutions, software development, and talent augmentation. We partner with global clients across multiple industries to deliver reliable technology solutions and top talent with a customer-first approach

Jobs

1