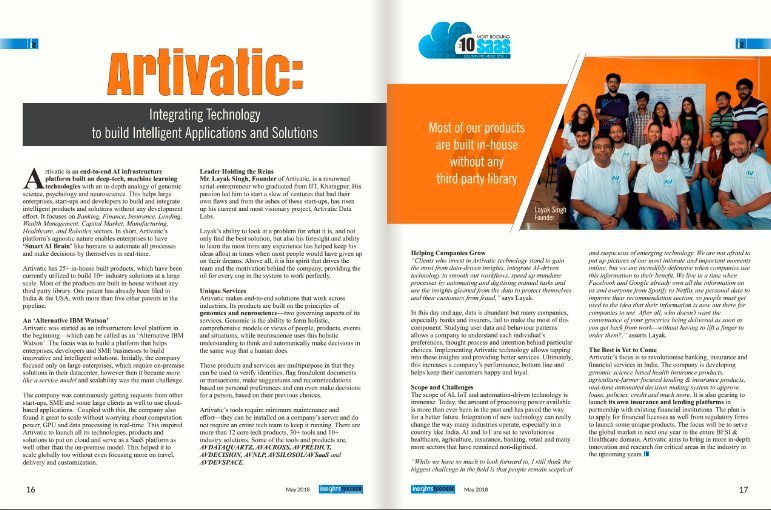

About Artivatic

Senior Mobile App Developer (Android & iOS)

Location: Brookefield, Bangalore — Onsite

Engagement: Full-Time

Experience: 3+ Years

Job Description

We are seeking a highly skilled Senior Mobile App Developer/Consultant with strong hands-on experience in building, deploying, and maintaining mobile applications for both Android and iOS, with React Native as the primary development framework.

The ideal candidate must be proficient with React Native, Android Studio, Xcode, and the end-to-end iOS App Store deployment process. This is an onsite, full-time role suitable for professionals who can take ownership and deliver high-quality product-based mobile applications. Experience in product companies or the banking/fintech domain is a strong advantage.

Primary Tech Stack (Mandatory)

React Native (Primary Framework)

Android Studio (Java/Kotlin)

Xcode (Swift/Objective-C)

RESTful APIs & Integration Patterns

App Store & Play Store Deployment Processes

Key Responsibilities

Design, develop, and maintain mobile applications using React Native for both Android and iOS.

Work with native modules when required using Swift/Objective-C and Java/Kotlin.

Build and release iOS apps to the Apple App Store, including managing certificates and provisioning profiles.

Debug, troubleshoot, and enhance mobile applications.

Optimize app performance, stability, and responsiveness.

Collaborate with product, backend, and QA teams for smooth development workflows.

Apply best practices in UI/UX, security, and mobile architecture.

Prepare documentation and provide technical guidance as needed.

Required Skills & Qualifications

3+ years of hands-on mobile development experience.

Mandatory: Strong proficiency in React Native.

Hands-on experience with Android Studio and Xcode.

Expertise in Swift, Objective-C, Kotlin, or Java.

Proven experience in building and publishing iOS apps to the App Store.

Strong understanding of RESTful API integrations.

Good knowledge of mobile UI/UX principles.

Strong debugging and problem-solving abilities.

Ability to work independently and deliver within timelines.

Nice to Have

Experience with Flutter or native-only app development.

Product-based company experience.

Exposure to Banking / FinTech systems.

Understanding of App Store Optimization (ASO).

Work Details

Location: Brookefield, Bangalore (Onsite)

Working Days: Monday to Saturday (Alternate Saturdays Off)

Timings: 9:00 AM – 6:00 PM

We are looking for a talented and passionate Python Engineer to join our team. As part of our Insights backend team, you will build new services and improve existing ones that power the Insights platform. This is a fast-paced role with high growth, visibility, and impact, in which many decisions on new projects will be driven by you and your team from inception through production. If you are seeking an environment where you get to do meaningful work with other great engineers, we want to hear from you!

Skills & Requirements

- At least 1 year of experience with Python and Django.

- Well versed in building the backend logic of web applications.

- Strong database skills.

- Solid foundation in designing and developing scalable APIs.

- Understanding of general web architecture.

- A Bachelor's, Master's, or PhD in Computer Science, Information Technology, Computer Engineering, or a related discipline, or equivalent experience.

- Hands-on experience with Django, Flask, or other Python frameworks.

- Basic understanding of front-end technologies, such as JavaScript, HTML5, and CSS3.

- Debugging applications to ensure low latency and high availability.

- Integrating user-facing elements with server-side logic.

- Implementing security and data protection.

Good-to-have skills:

- Excellent interpersonal, organizational, written communication, oral communication and listening skills.

- Ability to estimate work and provide input to managers on resource and risk planning.

- Ability to coordinate with stakeholders, manage timelines and escalations, and provide timely status updates.

- Familiarity with some ORM (Object Relational Mapper) libraries.

- Web frameworks and RESTful APIs experience.

Salary: ₹600,000.00 - ₹700,000.00 per year

Job Type: Full-time

Benefits: Leave encashment, paid sick time

Schedule: Day shift

Supplemental pay types: Performance bonus

Ability to commute/relocate: Lucknow, Uttar Pradesh: Reliably commute or planning to relocate before starting work (Required)

Education: Bachelor's (Preferred)

Position: Travel Sales Consultant

Location: Saket, New Delhi

Experience: 1 - 2 years (Freshers can also apply)

Minimum Educational Qualification: Graduation

Employment Type: Full-time

Responsibilities And Duties

Connect with prospective clients over the phone, by email, and via chat.

Ask the right questions to ascertain the needs of each unique traveler

Recommend and sell the right trip to the client based on their needs including any extra services that may enhance their experience.

Ensure the use of correct booking processes and procedures to minimize risk and reduce error rates.

Act at all times with the purpose of providing a life-changing experience

Things you will need to bring to begin your adventure with us:

Sales skills - You'll have that edge when it comes to sales and understanding how to provide amazing customer service. You'll be target-driven, and up for any challenge.

Travel experience - You'll be a globe trotter who has an incurable case of the travel bug.

Academic achievements - You'll have been a high flyer with academic accomplishments.

Career ambition - You'll love the thought of a challenging career that can take you places.

Benefits

Unlimited Earnings - You'll work on a fixed base salary plus uncapped commission; the more you sell, the more you'll earn! First-year average earnings are around 1 million, with potential for year-on-year growth as you build your client base.

Training and development at our own in-house Learning Centre - We will provide you with all the tools you need to get up and running, as well as ongoing training to further develop your skills and knowledge.

Career development and advancement opportunities.

Unbeatable company culture.

Skills:- Inside Sales, Sales and Business Development

Please Apply - https://zrec.in/RZ7zE?source=CareerSite

About Us

Infra360 Solutions is a services company specializing in Cloud, DevSecOps, Security, and Observability solutions. We help technology companies adopt a DevOps culture by focusing on a long-term DevOps roadmap. We identify the technical and cultural issues that arise when implementing DevOps practices in an organization and work with the respective teams to fix them and increase overall productivity. We also run training sessions for developers to help them understand the importance of DevOps. We provide the following services: DevOps, DevSecOps, FinOps, Cost Optimization, CI/CD, Observability, Cloud Security, Containerization, Cloud Migration, Site Reliability, Performance Optimization, SIEM and SecOps, Serverless Automation, Well-Architected Review, MLOps, and Governance, Risk & Compliance. We assess the technology architecture, security, governance, compliance, and DevOps maturity of technology companies and help them optimize their cloud costs, streamline their technology architecture, and set up processes that improve the availability and reliability of their websites and applications. We set up tools for monitoring, logging, and observability, and we focus on bringing a DevOps culture to the organization to improve its efficiency and delivery.

Job Description

Job Title: DevOps Engineer GCP

Department: Technology

Location: Gurgaon

Work Mode: On-site

Working Hours: 10 AM - 7 PM

Terms: Permanent

Experience: 2-4 years

Education: B.Tech/MCA/BCA

Notice Period: Immediate

Infra360.io is searching for a DevOps Engineer to lead our group of IT specialists in maintaining and improving our software infrastructure. You'll collaborate with software engineers, QA engineers, and other IT pros in deploying, automating, and managing the software infrastructure. As a DevOps engineer you will also be responsible for setting up CI/CD pipelines, monitoring programs, and cloud infrastructure.

Below is a detailed description of the role's responsibilities and expectations.

Tech Stack:

- Kubernetes: Deep understanding of Kubernetes clusters, container orchestration, and its architecture.

- Terraform: Extensive hands-on experience with Infrastructure as Code (IaC) using Terraform for managing cloud resources.

- ArgoCD: Experience in continuous deployment and using ArgoCD to maintain GitOps workflows.

- Helm: Expertise in Helm for managing Kubernetes applications.

- Cloud Platforms: Expertise in GCP, AWS or Azure will be an added advantage.

- Debugging and Troubleshooting: The DevOps Engineer must be proficient in identifying and resolving complex issues in a distributed environment, ranging from networking issues to misconfigurations in infrastructure or application components.

Key Responsibilities:

- CI/CD and configuration management

- Doing RCA of production issues and providing resolution

- Setting up failover, DR, backups, logging, monitoring, and alerting

- Containerizing different applications on the Kubernetes platform

- Capacity planning for the infrastructure of different environments

- Ensuring zero outages of critical services

- Database administration of SQL and NoSQL databases

- Infrastructure as Code (IaC)

- Keeping the cost of the infrastructure to the minimum

- Setting up the right set of security measures

Ideal Candidate Profile:

- A graduate or postgraduate degree in Computer Science or a related field

- 2-4 years of strong DevOps experience with the Linux environment.

- Strong interest in working in our tech stack

- Excellent communication skills

- Able to work with minimal supervision; a self-starter

- Hands-on experience with at least one scripting language, such as Bash, Python, or Go

- Experience with version control systems like Git

- Strong experience with GCP.

- Strong experience managing production systems day in and day out

- Experience finding and fixing issues in different layers of the architecture in a production environment

- Knowledge of SQL and NoSQL databases, Elasticsearch, Solr, etc.

- Knowledge of networking, firewalls, load balancers, Nginx, Apache, etc.

- Experience in automation tools like Ansible/SaltStack and Jenkins

- Experience in Docker/Kubernetes platform and managing OpenStack (desirable)

- Experience with HashiCorp tools such as Vault, Vagrant, Terraform, and Consul, and with VirtualBox (desirable)

- Experience managing/mentoring a small team of 2-3 people (desirable)

- Experience in Monitoring tools like Prometheus/Grafana/Elastic APM.

- Experience with logging tools like ELK/Loki.

Roles and Responsibilities

- Responsible for managing & driving the online business on E-commerce marketplaces.

- Responsible for uploading new collections on a weekly basis and ensuring catalogue hygiene of the uploaded catalogue.

- Handling the marketing budgets & the paid advertising campaigns/sponsored ads on the marketplaces.

- Responsible for handling product listings, promotions, deals, discounts & day-to-day operational issues related to the marketplace.

- Sole POC for communication with the category team.

- Strategizing and implementing a plan of action for month-on-month sales growth on existing online marketplaces.

- Ensuring that all new products are uploaded and listed on websites/channels

- Repricing the products

- Setting minimum and maximum prices for FBA (Fulfillment by Amazon) listings

- Creating product listings and setting minimum and maximum prices

- Generating daily out-of-stock, best-selling product, and run-out stock reports, brand by brand.

- Resolving pricing errors and reactivating the affected listings; converting inactive listings to active ones if required.

- Deleting discarded SKUs from all channels if required

- Optimizing listings with accurate product information, images, pricing and placement to increase sales and maximize revenue

- Optimize existing listings of current products to ensure we are exploring sales opportunities

- Keep up to date with industry techniques for SEO and content marketing

- Analyzing our product pricing against competitors' on an ongoing basis and making pricing adjustments

- Support customer care emails and calls and also support social media campaigns.

- Ability to communicate effectively and to operate as part of a team

Job Summary:

We are seeking a motivated and enthusiastic Telecaller to join our admissions team. As a Telecaller, you will be responsible for contacting customers, introducing our products or services, and generating leads. Your primary objective will be to engage customers over the phone, provide information about our offerings, and address any inquiries or concerns. Excellent communication skills, persuasive abilities, and a customer-oriented approach are essential for success in this role.

Responsibilities:

• Contact potential customers via telephone to introduce our programs.

• Make outbound calls to clients and provide information about our offers.

• Engage in active listening to understand parents' needs.

• Answer customer inquiries, resolve complaints, and provide appropriate solutions.

• Maintain accurate and up-to-date records of customer interactions and leads in the CRM system.

• Follow up with customers to ensure satisfaction and foster long-term relationships.

• Collaborate with the sales team to develop effective strategies and techniques.

• Stay updated with product knowledge, market trends, and competitors' activities.

• Participate in sales meetings, training sessions, and team-building activities.

Requirements:

• Proven experience as a Telecaller or similar sales role.

• Excellent verbal communication and interpersonal skills.

• Persuasive and confident with the ability to build rapport with customers.

• Active listening skills to understand customer needs and concerns.

• Ability to work in a target-driven environment and achieve goals.

• Strong organizational skills with the ability to multitask and prioritize effectively.

• Proficiency in using CRM software and other telecommunication tools.

• Ability to handle objections and resolve customer complaints professionally.

• High school diploma or equivalent; additional education or certifications in sales or customer service are a plus.

- Lead and manage a team that:

- Reviews and refines software requirements.

- Drafts and executes test plans and test cases.

- Identifies and drafts steps to reproduce defects utilizing our defect tracking system, JIRA.

- Enters and tracks defects to closure.

- Utilizes testing tools to increase the effectiveness of testing.

- Maintains test environments that mimic our production systems.

- Maintains and updates Selenium driven UI automation test cases.

- Maintains and updates ReadyAPI API automation test cases.

- Maintains and updates manual test cases.

- Maintains and updates JMeter performance/load test cases.

- Coach, mentor, and assist the team in performing their duties as needed.

- Provide formal QA approval and reporting for each release.

- Operate within the SAFe Agile methodology.

- Coordinate with other QA teams across the company.

- Experience leading an SQA or software development team

- Experience managing projects and processes cross-functionally

- Comfortable working in a continually changing and dynamic environment and driving top issues to resolution

- Proven track record of attracting, hiring, motivating and developing the best quality-minded individuals

- Excellent cross-functional communication and influencing skills

- In-depth knowledge and experience in one or more of the following technologies: Java, Python, Selenium, JMeter, ReadyAPI

- Automation planning, execution, and triage for projects on multiple platforms.

- Excellent presentation skills, distilling complex analysis and concepts into concise business-focused takeaways

- In-depth knowledge and experience in identity management including OpenID Connect, SAML2, OAuth2, and SCIM.

- In-depth knowledge and experience of application security testing.

- Unix command line scripting skills

- Take on a full backend development role in Magento store development, maintenance, upgrade, and customization projects. Provide support to clients via a project management tool. Handle projects end to end and achieve 100% client satisfaction.

- Must possess a clear understanding of the fundamentals and concepts of Magento 1/2, PHP, and Zend Framework.

- Knowledge in Magento Extension development.

- Write well-engineered source code that complies with accepted web standards.

- Good experience in Magento 2 store development: implementing designs, creating themes, and creating custom modules.

- Strong Knowledge of Magento Best Practices, including experience developing custom extensions and extending third party extensions

- Ability to develop and manage e-commerce websites and web applications.

- Ability to understand each project's goals and create a strategy for it.

Technical Skills:

- Good exposure to Magento, CMS platforms, CodeIgniter, OpenCart, and JavaScript/jQuery.

- Extensive experience with PHP and MySQL.

- Strong focus on Object-Oriented Programming (OOP).

- Demonstrable knowledge of XML, XHTML, and CSS with a focus on standards, and of modules such as API integrations and payment gateways.

UI Developer (2+ years of experience) requirements:

Strong with JavaScript/TypeScript, with a good understanding of the DOM

Experience with UI frameworks such as React/ Angular/ VueJS

Good with CSS/SCSS/Sass and responsive UI development

Experience using standard UI frameworks such as Bootstrap, Bulma, Material UI, etc.

Ability to split code into logical components, with an emphasis on writing reusable components/styles

Experience with state management and with separating layers using the right level of abstraction

We are an IIT Bombay-incubated healthcare startup developing mobile-based AI technology to help reduce health risks for women during pregnancy. Our founders are Harvard and Columbia University alumni with extensive experience in digital health in the US and India.

Mobile Development Engineer - React Native (Location: Mumbai)

Requirements:

2-4 years of experience with React Native and a good understanding of the startup environment.

1. Advanced knowledge of React Native.

2. Excellent knowledge of React Native libraries, Redux, HTML5, CSS3, and JavaScript.

3. Excellent knowledge of Android and iOS UI design principles, patterns, and best practices.

4. Experience publishing apps on the app stores.

5. Experience working with Rest APIs.

6. Knowledge of integration and utilization of cloud services and payment systems.

7. Should have played a key role in taking apps from conceptualization to deployment using React Native.

8. Project management, scheduling, allocation, and delivery.

9. Proven experience of agile development, sprint planning and backlog management.

10. Keen customer focus and excellent communication skills, with a high level of responsiveness and responsibility.

Good to Have:

Full Stack experience.

Experience working on chat apps, community, and healthcare domain.

Exposure to or interest in NLP and machine learning.