Company Overview:

At Codvo, software and people transformations go hand-in-hand. We are a global empathy-led technology services company. Product innovation and mature software engineering are part of our core DNA. Respect, Fairness, Growth, Agility, and Inclusiveness are the core values that we aspire to live by each day.

We continue to expand our digital strategy, design, architecture, and product management capabilities to offer expertise, outside-the-box thinking, and measurable results.

Job Description:

- Candidate should have strong technical and analytical skills, particularly in SQL Server, reporting tools such as Tableau, Power BI, and SSRS, and .NET.

- Candidate should have the experience needed to properly understand the project deliverables.

- Candidate should be responsible for the respective tasks assigned in the project.

- Candidate will be responsible for delivering with proper quality, within the planned time and cost, while adhering to the industry standards defined for the project.

- Candidate should be involved in client interaction.

- Candidate should possess excellent communication skills.

Required Skills: BI Gateway, MS SQL Server, Tableau, Power BI, .NET, OLAP, UI/UX, Dashboard Building

Experience: 5+ Years

Job Location: Remote/Saudi Arabia

Work Timings: 2:30 pm to 11:30 pm

About Codvoai

At Codvo, we accelerate Cloud, AI, and Transformation roadmaps while offering our employees the most satisfying mix of work-life balance, quality of living, and cutting-edge work.

We deliver value through our unique "Virtual Silicon Valley" model, where we bring seasoned experts and global talent together as a Product Oriented Delivery (POD) unit to successfully deliver on your next roadmap priorities.

Our "Virtual Silicon Valley" PODs deliver better success and speed because they are self-managed, have the right expertise mix, and, most importantly, are aligned to work in your time zone. The goal is to balance speed, expertise mix, and cost while ensuring the success of core product development, design, and transformation activities.

We are proud to have our customers ready to vouch for us and share their success stories. Our teams of scientists, engineers, architects, and designers have helped AI-driven companies, fast-growing Fintechs, Wealth Management & Healthcare startups, Energy companies, and US Defense contractors accelerate their product and transformation roadmaps.

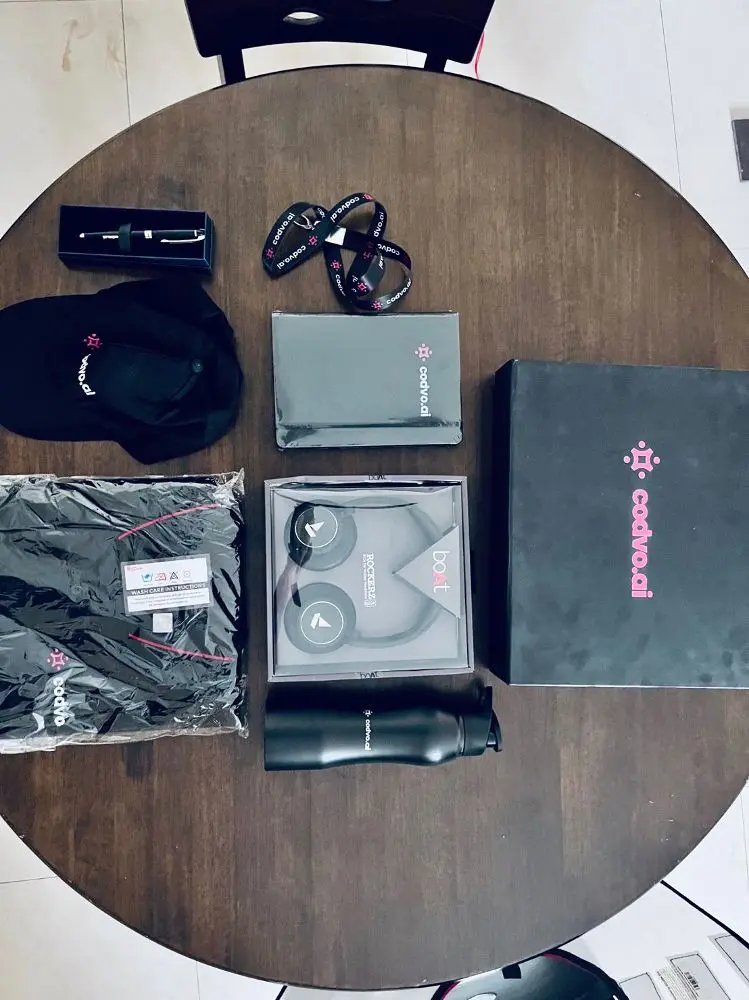

Photos

Connect with the team

Similar jobs

Designation: Campus Admin Officer

Department: Admin

Location: Mahape, Navi Mumbai

Experience Required: Minimum 1.5–2 years

Employment Type: Full-Time

Working Days & Timings

· It is a 6-day working week.

Office timings:

· Admin role: 8:00 AM to 6:00 PM

Job Summary

We are looking for a reliable and hands-on Campus Admin Officer to manage and maintain the Electrical, Telecom & IT systems of our 120-employee office in Mahape, Navi Mumbai. The role involves end-to-end responsibility for identifying and fixing faults in electrical systems, desktops, printers, intercom systems, LAN connections, and WiFi networks to ensure smooth daily operations with minimal downtime.

Key Responsibilities

1. Electrical System Maintenance

Diagnose and resolve electrical faults promptly to minimize downtime.

Identify and resolve issues with electrical appliances, office lights, fans, etc.

Troubleshoot issues related to generators, UPS, power supply cabling, etc.

2. IT Systems Management

Maintain desktops and laptops for employees.

Maintain office LAN network and structured cabling.

Coordinate with vendors for servicing, repairs, and consumables.

Maintain inventory of IT peripherals and spare equipment.

3. Intercom & Communication Systems

Maintain and troubleshoot internal intercom systems.

Coordinate with service vendors for maintenance and repairs.

Required Qualifications & Skills

1.5–2 years of hands-on experience in maintaining Electrical & IT systems.

Ability to communicate in English, Hindi, or Marathi.

Basic knowledge of modems, routers, and switches.

Good communication skills and ability to support non-technical users.

Ability to work independently and manage day-to-day operations.

Required Skills: CI/CD Pipeline, Kubernetes, SQL Database, Excellent Communication & Stakeholder Management, Python

Criteria:

Looking for candidates with a notice period of 15 days, 30 days at most.

Looking for candidates from the Hyderabad location only.

Looking for candidates from EPAM only.

1. 4+ years of software development experience

2. Strong experience with Kubernetes, Docker, and CI/CD pipelines in cloud-native environments.

3. Hands-on with NATS for event-driven architecture and streaming.

4. Skilled in microservices, RESTful APIs, and containerized app performance optimization.

5. Strong in problem-solving, team collaboration, clean code practices, and continuous learning.

6. Proficient in Python (Flask) for building scalable applications and APIs.

7. Focus: Java, Python, Kubernetes, Cloud-native development

8. SQL database

Description

Position Overview

We are seeking a skilled Developer to join our engineering team. The ideal candidate will have strong expertise in Java and Python ecosystems, with hands-on experience in modern web technologies, messaging systems, and cloud-native development using Kubernetes.

Key Responsibilities

- Design, develop, and maintain scalable applications using Java and Spring Boot framework

- Build robust web services and APIs using Python and Flask framework

- Implement event-driven architectures using NATS messaging server

- Deploy, manage, and optimize applications in Kubernetes environments

- Develop microservices following best practices and design patterns

- Collaborate with cross-functional teams to deliver high-quality software solutions

- Write clean, maintainable code with comprehensive documentation

- Participate in code reviews and contribute to technical architecture decisions

- Troubleshoot and optimize application performance in containerized environments

- Implement CI/CD pipelines and follow DevOps best practices

Required Qualifications

- Bachelor's degree in Computer Science, Information Technology, or related field

- 4+ years of experience in software development

- Strong proficiency in Java with deep understanding of web technology stack

- Hands-on experience developing applications with Spring Boot framework

- Solid understanding of Python programming language with practical Flask framework experience

- Working knowledge of NATS server for messaging and streaming data

- Experience deploying and managing applications in Kubernetes

- Understanding of microservices architecture and RESTful API design

- Familiarity with containerization technologies (Docker)

- Experience with version control systems (Git)

Skills & Competencies

- Java (Spring Boot, Spring Cloud, Spring Security)

- Python (Flask, SQLAlchemy, REST APIs)

- NATS messaging patterns (pub/sub, request/reply, queue groups)

- Kubernetes (deployments, services, ingress, ConfigMaps, Secrets)

- Web technologies (HTTP, REST, WebSocket, gRPC)

- Container orchestration and management

Soft Skills

- Problem-solving and analytical thinking

- Strong communication and collaboration

- Self-motivated with ability to work independently

- Attention to detail and code quality

- Continuous learning mindset

- Team player with mentoring capabilities

We are looking for a skilled Oracle Developer with strong expertise in PL/SQL development and database management. The ideal candidate will be responsible for designing, developing, optimizing, and maintaining robust Oracle database solutions to support business applications and operations.

Key Responsibilities

Design, develop, test, and maintain robust PL/SQL code including stored procedures, functions, packages, triggers, and views.

Participate in database design and development activities ensuring efficient database structure and performance.

Optimize SQL queries and PL/SQL code for maximum efficiency and performance.

Identify, troubleshoot, and resolve database performance issues.

Develop and implement data migration, transformation, and ETL processes using Oracle tools and PL/SQL scripts.

Provide support for existing database applications and resolve issues within defined SLAs.

Collaborate with application developers, business analysts, and stakeholders to understand business requirements and translate them into technical solutions.

Maintain proper documentation for database designs, code, and technical processes.

Follow best practices in database development including code reviews, version control, and coding standards.

Required Skills & Qualifications

Bachelor’s degree in Computer Science, Information Technology, or a related field.

3–5 years of hands-on experience as an Oracle Developer.

Strong expertise in Oracle PL/SQL development.

Experience in writing complex stored procedures, functions, packages, and triggers.

Strong understanding of database schema design, tables, views, indexes, and constraints.

Experience with data migration, ETL processes, and data transformation.

Knowledge of Oracle backup and recovery techniques including RMAN.

Strong analytical and problem-solving skills.

Good communication and collaboration abilities.

Ability to work independently and manage multiple tasks effectively.

Preferred Skills

Experience working in performance tuning and query optimization.

Familiarity with version control and development best practices.

Exposure to Oracle tools and enterprise database environments.

Why Join Us?

Opportunity to work on enterprise-grade Oracle database solutions.

Collaborative and growth-oriented work environment.

Exposure to challenging projects and modern database practices.

Job Title: L2 Post-Sales Engineer – Cisco SSE (Secure Service Edge)

Department: Cybersecurity / Network Security

Location: Bangalore

Job Type: Full time

Gross Salary: 10 - 12 LPA

Experience: 3–5 Years

Key Responsibilities:

- Lead partial or full deployment of Cisco SSE components under guidance from senior engineers.

- Configure and optimize Cisco SSE capabilities (ZTNA, SASE, SWG, CASB).

- Assist in integrating Cisco SD-WAN (Viptela/Meraki) into SSE frameworks.

- Perform Level 2 troubleshooting, including analysing logs, policy behaviour, and network flow.

- Engage directly with client IT teams to provide remote support, training, and resolution planning.

- Contribute to maintaining solution documentation, run books, and knowledge base.

- Participate in upgrade/migration projects and post-deployment optimization efforts.

Technical Skills Required:

- Good understanding of Cisco SD-WAN (routing, security policies, cloud integration).

- Familiarity with SASE architecture, ZTNA, SWG, CASB, and cloud security posture.

- Ability to perform log analysis, packet capture interpretation, and root cause diagnosis.

- Comfort with CLI and web-based interfaces of security/networking tools.

- Basic scripting/automation (Python, Shell) experience is a plus.

Certifications (Preferred but Not Mandatory):

- Cisco Certified Specialist – Security Core / CCNP Security

- Cisco Umbrella and Duo product training/certification

- Other SASE/SSE vendor exposure (Zscaler, Prisma, Netskope) is a plus

We are seeking a dedicated and skilled AI Project Field Engineer to join our team. The successful candidate will be responsible for executing AI projects on-site, ensuring the seamless deployment and operation of AI models and systems. This role requires a combination of technical expertise, problem-solving skills, and a strong customer focus.

Responsibilities:

- Execute and manage AI projects on customer sites, ensuring timely and successful deployment.

- Deploy and run AI models using PyTorch on various hardware configurations.

- Set up and maintain computer networks, particularly those involving IP cameras.

- Write and maintain shell scripts to automate deployment and monitoring tasks.

- Develop and troubleshoot Python code related to AI models and their deployment.

- Collaborate with customers to understand their needs and ensure their success with our AI solutions.

- Perform on-site visits as required to install, test, and troubleshoot AI systems.

- Provide training and support to customers on the use and maintenance of deployed AI systems.

- Work closely with the development team to provide feedback and insights from the field.

- Document all processes, configurations, and customer interactions for future reference.

Job Summary:

We are seeking 3D Modelling Interns to join our asset production team. The successful candidates will work closely with our asset production team to create 3D models of retail products. The ideal candidate should have a strong background in 3D modelling software, a keen eye for detail, and the ability to work independently.

Key Responsibilities:

- Collaborate with production team members to create accurate 3D models of retail products.

- Use 3D modelling software, preferably Blender 3D, to create, modify, and refine 3D models.

- Ensure that the 3D models are accurate, detailed, and realistic.

- Test and evaluate 3D models to ensure they meet design and asset production requirements.

- Work as part of a production team to ensure that projects are completed on time.

- Communicate progress and issues effectively with the asset production teams.

- Participate in reviews and provide feedback to improve the asset production process.

- Continuously learn and improve 3D modelling skills and techniques.

Requirements:

- Currently enrolled in a degree / certificate program in a relevant field such as Industrial Design, Product Design, or 3D Modelling.

- Strong knowledge of 3D modelling in Blender or similar modelling software. If not proficient in Blender, training will be provided.

- UV mapping and texturing skills are highly desirable.

- Knowledge of PBR texture workflow is preferred but not required.

- Excellent attention to detail and ability to work independently and as part of a team.

- Strong communication and interpersonal skills.

- Ability to meet tight deadlines.

These internships are paid and are a great opportunity for anyone looking to gain hands-on experience in working on the future of retail product visualisation in various industries. The successful candidates will work with a team of experienced professionals, and gain valuable insights into the asset production process.

- Deep knowledge of and working experience with Adobe Marketo, Hybris Marketing, or Emarsys, including end-to-end hands-on implementation and integration experience and familiarity with integration tools

- Other platforms of interest include Adobe Campaign, Emarsys, Pardot, Salesforce Marketing Cloud, and Eloqua

- Hybris Marketing or Adobe Marketo Certification strongly preferred

- Experience in evaluating different marketing platforms (e.g. Salesforce SFMC versus Adobe Marketo versus Adobe Marketing)

- Experience in design and setup of Marketing Technology Architecture

- Proficient in scripting, with experience in HTML, XML, CSS, JavaScript, etc.

- Experience with triggering campaigns via API calls

- Responsible for the detailed design of technical solutions, POV on marketing solutions, Proof-of-Concept (POC) prototyping, and documentation of the technical design

- Collaborate with the Onshore team in tailoring solutions that meet business needs using an agile/iterative development process

- Perform feasibility analysis for marketing solutions that meet the business goals

- Experience with client discovery workshops and technical solutions presentations

- Excellent communication skills required as this is a client business & IT interfacing role

About Mudrantar Solutions Private Limited

Mudrantar Solutions Pvt. Ltd. is a wholly owned subsidiary of the US-based startup Mudrantar Corporation. Mudrantar is a well-funded startup focused on disruptive changes in accounting software for small, medium, and large businesses in India. Our state-of-the-art OCR + Machine Learning technology allows customers to simply take a photo, and our software does the rest of the heavy lifting. Our strategy for small and medium businesses is realized through a freely available mobile app. We also work on automating Accounts Payable for large corporations through our channel partners in India.

Full Stack JavaScript Developer Position

We are looking for an expert Full Stack JavaScript developer who is highly skilled with Vue.js. Your primary focus will be developing user-facing web applications and components. You’ll implement them with the Vue.js framework, following generally accepted practices and workflows. You will ensure that you produce robust, secure, modular, and maintainable code. You will coordinate with other team members, including back-end developers and UX/UI designers. Your commitment to team collaboration, perfect communication, and a quality product is crucial.

Position

- Full time employment

Key Responsibilities

- Working with front-end UI/UX technologies such as Tailwind CSS, Vuex, Vue Router, and similar

- Developing user-facing applications using NodeJS (HapiJS, ExpressJS) and Vue.js

- Building modular and reusable components and libraries

- Optimizing your application for performance

- Implementing automated testing integrated into development and maintenance workflows

- Staying up to date with all recent developments in the JavaScript ecosystem

- Keeping an eye on security updates and reported issues across all project dependencies

- Proposing any upgrades and updates necessary for keeping up with modern security and development best practices

Skills

- Highly proficient with the JavaScript language and its modern ES6+ syntax and features

- Highly proficient with backend frameworks like HapiJS, ExpressJS

- Highly proficient with Vue.js framework and its core principles such as components, reactivity, and the virtual DOM

- Familiarity with the Vue.js ecosystem, including Vue CLI, Vuex, Vue Router, and NuxtJS

- Good understanding of HTML5 and CSS3

- Understanding of server-side rendering and its benefits and use cases

- Knowledge of functional programming and object-oriented programming paradigms

- Ability to write efficient, secure, well-documented, and clean JavaScript code

- Familiarity with automated JavaScript testing, specifically testing frameworks such as Jest or Mocha

- Proficiency with modern development tools, like BitBucket, Babel, Webpack, and Git

- Working knowledge of one or more of the following: NodeJS, Angular, ReactJS

- Experience with both consuming and designing RESTful APIs

- Relevant technical certifications a plus.

Qualifications

- Bachelor's Degree and/or equivalent Computer Science course

- 2-4 years programming experience

- Demonstrable track record of projects and applications

- Strong written and verbal communication skills

- To appoint Block Sales Managers in their respective districts.

- To manage sales operations in assigned district to achieve revenue goals.

- To supervise sales team members on a daily basis and provide guidance whenever needed.

- To identify skill gaps and conduct training for the sales team.

- To work with the team to implement new sales techniques to increase profits.

- To assist in employee recruitment, promotion, retention and termination activities.

- To conduct employee performance evaluation and provide feedback for improvements.

- To contact potential customers and identify new business opportunities.

- To stay abreast of customer needs, market trends, and competitors.

- To maintain clear and complete sales reports for management review.

- To build strong relationships with customers for business growth.

- To analyze sales performances and recommend improvements.

- To ensure that the sales team follows company policies and procedures at all times.

- To develop promotional programs to increase sales and revenue.

- To plan and coordinate sales activities for assigned projects.

- To provide outstanding services and ensure customer satisfaction.