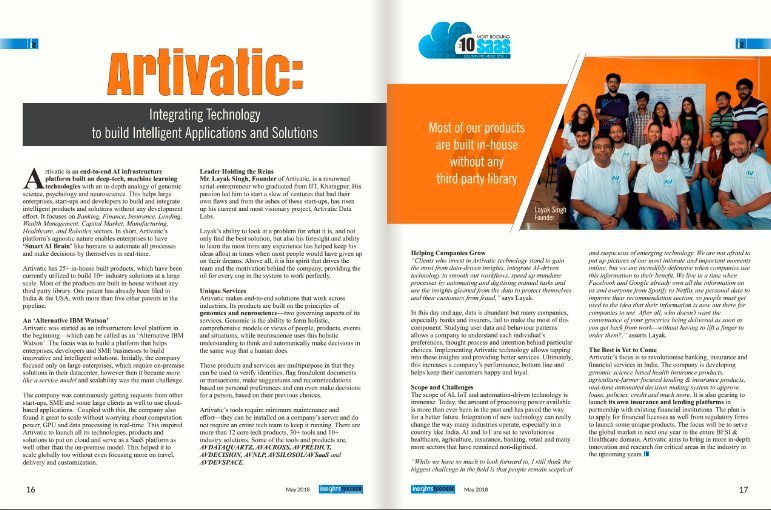

About Artivatic


Key responsibilities

• Design, build, and maintain robust CI/CD pipelines using Azure DevOps Services (Azure Pipelines) and Git-based workflows.

• Implement and manage infrastructure as code (IaC) using ARM templates, Bicep, and/or Terraform for repeatable environment provisioning.

• Containerize applications (Docker) and manage container orchestration platforms such as AKS (Azure Kubernetes Service).

• Automate build, test, release, and rollback processes; integrate automated testing and quality gates into pipelines.

• Monitor and improve platform reliability and observability using logging and monitoring tools (e.g., Azure Monitor, Application Insights, Prometheus, Grafana).

• Drive platform security and compliance through pipeline controls, secrets management (Key Vault / Vault), and secure configuration practices.

• Implement cost-optimization and governance for Azure resources (tags, policies, budgets).

• Troubleshoot build/release failures, production incidents, and performance bottlenecks; perform root-cause analysis and implement permanent fixes.

• Mentor developers in Git workflows, pipeline authoring, best practices for IaC, and cloud-native design.

• Maintain clear documentation: runbooks, deployment playbooks, architecture diagrams, and pipeline templates.

Required skills & experience

• 5+ years hands-on experience working with Azure and cloud-native application delivery.

• Deep experience with Azure DevOps (Repos, Pipelines, Artifacts, Boards).

• Strong IaC skills with Terraform, ARM templates, or Bicep.

• Solid experience with CI/CD design and YAML pipeline authoring.

• Practical knowledge of containerization (Docker) and Kubernetes — preferably AKS.

• Scripting skills: PowerShell, Bash, and/or Python for automation.

• Experience with Git workflows (branching strategies, PRs, code reviews).

• Familiarity with configuration management and secrets management (Azure Key Vault, HashiCorp Vault).

• Understanding of networking, identity (Azure AD), and security fundamentals in Azure.

• Strong troubleshooting, debugging, and incident response skills.

• Good collaboration and communication skills; ability to work across teams.

Certification

AZ-400 (Microsoft Certified: DevOps Engineer Expert), AZ-104, AZ-305, or Terraform Associate.

REVIEW CRITERIA:

MANDATORY:

- Strong Hands-On AWS Cloud Engineering / DevOps Profile

- Mandatory (Experience 1): Must have 12+ years of experience in AWS Cloud Engineering / Cloud Operations / Application Support

- Mandatory (Experience 2): Must have strong hands-on experience supporting AWS production environments (EC2, VPC, IAM, S3, ALB, CloudWatch)

- Mandatory (Infrastructure as Code): Must have hands-on Infrastructure as Code experience using Terraform in production environments

- Mandatory (AWS Networking): Strong understanding of AWS networking and connectivity (VPC design, routing, NAT, load balancers, hybrid connectivity basics)

- Mandatory (Cost Optimization): Exposure to cost optimization and usage tracking in AWS environments

- Mandatory (Core Skills): Experience handling monitoring, alerts, incident management, and root cause analysis

- Mandatory (Soft Skills): Strong communication skills and stakeholder coordination skills

ROLE & RESPONSIBILITIES:

We are looking for a hands-on AWS Cloud Engineer to support day-to-day cloud operations, automation, and reliability of AWS environments. This role works closely with the Cloud Operations Lead, DevOps, Security, and Application teams to ensure stable, secure, and cost-effective cloud platforms.

KEY RESPONSIBILITIES:

- Operate and support AWS production environments across multiple accounts

- Manage infrastructure using Terraform and support CI/CD pipelines

- Support Amazon EKS clusters, upgrades, scaling, and troubleshooting

- Build and manage Docker images and push to Amazon ECR

- Monitor systems using CloudWatch and third-party tools; respond to incidents

- Support AWS networking (VPCs, NAT, Transit Gateway, VPN/DX)

- Assist with cost optimization, tagging, and governance standards

- Automate operational tasks using Python, Lambda, and Systems Manager

IDEAL CANDIDATE:

- Strong hands-on AWS experience (EC2, VPC, IAM, S3, ALB, CloudWatch)

- Experience with Terraform and Git-based workflows

- Hands-on experience with Kubernetes / EKS

- Experience with CI/CD tools (GitHub Actions, Jenkins, etc.)

- Scripting experience in Python or Bash

- Understanding of monitoring, incident management, and cloud security basics

NICE TO HAVE:

- AWS Associate-level certifications

- Experience with Karpenter, Prometheus, New Relic

- Exposure to FinOps and cost optimization practices

Key Qualifications:

- At least 2 years of hands-on experience with cloud infrastructure on AWS or GCP

- Exposure to configuration management and orchestration tools at scale (e.g. Terraform, Ansible, Packer)

- Knowledge of DevOps tools (e.g. Jenkins, Groovy, and Gradle)

- Familiarity with monitoring and alerting tools (e.g. CloudWatch, ELK stack, Prometheus)

- Proven ability to work independently or as an integral member of a team

Preferred Skills:

- Familiarity with standard IT security practices such as encryption, credentials and key management

- Proven ability to pick up various programming languages (e.g. Java, Python) to support DevOps operations and cloud transformation

- Familiarity with web standards (e.g. REST APIs, web security mechanisms)

- Multi-cloud management experience with GCP / Azure

- Experience in performance tuning, services outage management and troubleshooting

Job Responsibilities:

Section 1 -

- Responsible for managing and providing L1 support to build, design, deploy, and maintain cloud solutions on AWS.

- Implement, deploy and maintain development, staging & production environments on AWS.

- Familiar with serverless architecture and services on AWS like Lambda, Fargate, EBS, Glue, etc.

- Understanding of infrastructure as code and familiarity with related tools such as Terraform, Ansible, and CloudFormation.

Section 2 -

- Managing the Windows and Linux machines, Kubernetes, Git, etc.

- Responsible for L1 management of Servers, Networks, Containers, Storage, and Databases services on AWS.

Section 3 -

- Timely monitoring of production workload alerts and quick resolution of issues.

- Responsible for monitoring and maintaining the Backup and DR process.

Section 4 -

- Responsible for documenting the process.

- Responsible for leading cloud implementation projects with end-to-end execution.

Qualifications: Bachelor of Engineering / MCA, preferably with an AWS or other cloud certification

Skills & Competencies

- Linux and Windows servers management and troubleshooting.

- AWS services experience with CloudFormation, EC2, RDS, VPC, EKS, ECS, Redshift, Glue, etc.

- AWS EKS

- Kubernetes and containers knowledge

- Understanding of setting up AWS messaging, streaming, and queuing services (MSK, Kinesis, SQS, SNS, MQ)

- Strong understanding of serverless architecture

- Strong understanding of networking concepts

- Managing monitoring and alerting systems

- Sound knowledge of database concepts such as data warehouses, data lakes, and ETL jobs

- Good Project management skills

- Documentation skills

- Backup, and DR understanding

Soft Skills - Project management, Process Documentation

Ideal Candidate:

- AWS certification and 2-4 years of experience, including project execution experience.

- Someone who is interested in building sustainable cloud architecture with automation on AWS.

- Someone who is interested in learning and being challenged on a day-to-day basis.

- Someone who can take ownership of the tasks and is willing to take the necessary action to get it done.

- Someone who is curious to analyze and solve complex problems.

- Someone who is honest about the quality of their work and comfortable taking ownership of both their successes and failures.

Behavioral Traits

- We are looking for someone who is interested in being part of a creative, innovation-based environment with other team members.

- We are looking for someone who understands the idea/importance of teamwork and individual ownership at the same time.

- We are looking for someone who can debate logically, disagree respectfully, admit when proven wrong, learn from their mistakes, and grow quickly.

We are a managed video calling solution built on top of WebRTC, which allows its customers to integrate live video calls within existing solutions in less than 10 lines of code. We provide a completely managed SDK that solves the problem of battling endless cases of video calling APIs.

Location - Bangalore (Remote)

Experience - 3+ Years

Requirements:

● Should have at least 2 years of DevOps experience

● Should have experience with Kubernetes

● Should have experience with Terraform/Helm

● Should have experience in building scalable server-side systems

● Should have experience in cloud infrastructure and designing databases

● Having experience with NodeJS/TypeScript/AWS is a bonus

● Having experience with WebRTC is a bonus

Infra360 Solutions is a services company specializing in Cloud, DevSecOps, Security, and Observability solutions. We help technology companies adopt a DevOps culture by focusing on a long-term DevOps roadmap. We identify the technical and cultural issues that stand in the way of successfully implementing DevOps practices and work with the respective teams to fix them and increase overall productivity. We also run training sessions for developers to help them understand the importance of DevOps.

We provide these services: DevOps, DevSecOps, FinOps, Cost Optimizations, CI/CD, Observability, Cloud Security, Containerization, Cloud Migration, Site Reliability, Performance Optimizations, SIEM and SecOps, Serverless automation, Well-Architected Review, MLOps, Governance, Risk & Compliance.

We assess the technology architecture, security, governance, compliance, and DevOps maturity of any technology company and help them optimize their cloud cost, streamline their technology architecture, and set up processes to improve the availability and reliability of their websites and applications. We set up tools for monitoring, logging, and observability. We focus on bringing the DevOps culture to the organization to improve its efficiency and delivery.

Job Description

Our Mission

Our mission is to help customers achieve their business objectives by providing innovative, best-in-class consulting, IT solutions and services and to make it a joy for all stakeholders to work with us. We function as a full stakeholder in business, offering a consulting-led approach with an integrated portfolio of technology-led solutions that encompass the entire Enterprise value chain.

Our Customer-centric Engagement Model defines how we engage with you, offering specialized services and solutions that meet the distinct needs of your business.

Our Culture

Culture forms the core of our foundation and our effort towards creating an engaging workplace has resulted in Infra360 Solution Pvt Ltd.

Our Tech-Stack:

- Azure DevOps, Azure Kubernetes Service, Docker, Active Directory (Microsoft Entra)

- Azure IAM and managed identity, Virtual network, VM Scale Set, App Service, Cosmos

- Azure, MySQL, Scripting (PowerShell, Python, Bash)

- Azure Security, Security Documentation, Security Compliance

- AKS, Blob Storage, Azure functions, Virtual Machines, Azure SQL

- AWS - IAM, EC2, EKS, Lambda, ECS, Route 53, CloudFormation, CloudFront, S3

- GCP - GKE, Compute Engine, App Engine, SCC

- Kubernetes, Linux, Docker & Microservices Architecture

- Terraform & Terragrunt

- Jenkins & Argo CD

- Ansible, Vault, Vagrant, SaltStack

- CloudFront, Apache, Nginx, Varnish, Akamai

- MySQL, Aurora, Postgres, AWS Redshift, MongoDB

- Elasticsearch, Redis, Aerospike, Memcached, Solr

- ELK, Fluentd, Elastic APM & Prometheus Grafana Stack

- Java (Spring/Hibernate/JPA/REST), Node.js, Ruby, Rails, Erlang, Python

What does this role hold for you?

- Infrastructure as code (IaC)

- CI/CD and configuration management

- Managing Azure Active Directory (Entra)

- Keeping the cost of the infrastructure to the minimum

- Doing RCA of production issues and providing resolution

- Setting up failover, DR, backups, logging, monitoring, and alerting

- Containerizing different applications on the Kubernetes platform

- Capacity planning of different environments infrastructure

- Ensuring zero outages of critical services

- Database administration of SQL and NoSQL databases

- Setting up the right set of security measures

Requirements

Apply if you have…

- A graduation/post-graduation degree in Computer Science and related fields

- 2-4 years of strong DevOps experience in Azure with the Linux environment.

- Strong interest in working in our tech stack

- Excellent communication skills

- Ability to work with minimal supervision as a self-starter

- Hands-on experience with at least one scripting language (Bash, Python, Go, etc.)

- Experience with version control systems like Git

- Understanding of Azure cloud computing services and cloud computing delivery models (IaaS, PaaS, and SaaS)

- Strong scripting or programming skills for automating tasks (PowerShell/Bash)

- Knowledge of and experience with CI/CD tools: Azure DevOps, Jenkins, GitLab, etc.

- Knowledge of and experience with at least one IaC tool (ARM templates/Terraform)

- Strong experience with managing the Production Systems day in and day out

- Experience in finding issues in different layers of architecture in a production environment and fixing them

- Experience in automation tools like Ansible/SaltStack and Jenkins

- Experience in Docker/Kubernetes platform and managing OpenStack (desirable)

- Experience with HashiCorp and related tools, e.g. Vault, Vagrant, Terraform, Consul, VirtualBox, etc. (desirable)

- Experience in Monitoring tools like Prometheus/Grafana/Elastic APM.

- Experience in logging tools like ELK/Loki.

- Experience in using Microsoft Azure Cloud services

If you are passionate about infrastructure and cloud technologies and want to contribute to innovative projects, we encourage you to apply. Infra360 offers a dynamic work environment and opportunities for professional growth.

Interview Process

Application Screening => Test/Assessment => 2 Rounds of Tech Interview => CEO Round => Final Discussion

Job Responsibilities:

Work on and deploy updates and fixes

Provide Level 2 technical support

Support implementation of fully automated CI/CD pipelines as per dev requirements

Follow the escalation process through issue completion, including providing documentation after resolution

Follow regular Operations procedures and complete all assigned tasks during the shift

Assist in root cause analysis of production issues and help write a report which includes details about the failure, the relevant log entries, and likely root cause

Set up CI/CD frameworks (Jenkins / Azure DevOps Server), containerization using Docker, etc.

Implement continuous testing, code quality, and security using DevOps tooling

Build a knowledge base by creating and updating documentation for support

Skills Required:

DevOps, Linux, AWS, Ansible, Jenkins, Git, Terraform, CI, CD, CloudFormation, TypeScript

Strong DevOps experience of at least 4 years

Strong Experience in Unix/Linux/Python scripting

Strong networking knowledge; vSphere networking stack knowledge desired.

Experience on Docker and Kubernetes

Experience with cloud technologies (AWS/Azure)

Exposure to continuous delivery tools such as Jenkins or Spinnaker

Exposure to configuration management systems such as Ansible

Knowledge of resource monitoring systems

Ability to scope and estimate

Strong verbal and communication skills

Advanced knowledge of Docker and Kubernetes.

Exposure to Blockchain as a Service (BaaS) platforms such as Chainstack/IBM Blockchain Platform/Oracle Blockchain Cloud/Rubix/VMware etc.

Capable of provisioning and maintaining local enterprise blockchain platforms for Development and QA (Hyperledger Fabric/BaaS/Corda/ETH).

DevOps Engineer

Notice Period: 45 days / Immediate Joining

Banyan Data Services (BDS) is a US-based infrastructure services company, headquartered in San Jose, California, USA. It provides full-stack managed services to support business applications and data infrastructure. We provide data solutions and services on bare metal, on-prem, and all cloud platforms. Our engagement service is built on standard DevOps practice and the SRE model.

We are looking for a DevOps Engineer to help us build functional systems that improve customer experience. We offer you an opportunity to join our rocket ship startup, run by a world-class executive team. We are looking for candidates who aspire to be part of the cutting-edge solutions and services we offer, which address next-gen data evolution challenges, and who are willing to use their experience in areas directly related to Infrastructure Services, Software as a Service, and Cloud Services to create a niche in the market.

Key Qualifications

· 4+ years of experience as a DevOps Engineer with monitoring, troubleshooting, and diagnosing infrastructure systems.

· Experience in implementation of continuous integration and deployment pipelines using Jenkins, JIRA, JFrog, etc

· Strong experience in Linux/Unix administration.

· Experience with automation/configuration management using Puppet, Chef, Ansible, Terraform, or other similar tools.

· Expertise in multiple coding and scripting languages including Shell, Python, and Perl

· Hands-on exposure to modern IT infrastructure (e.g. Docker Swarm/Mesos/Kubernetes/OpenStack)

· Exposure to any relational database technology (MySQL/Postgres/Oracle) or any NoSQL database

· Worked on open-source tools for logging, monitoring, search engine, caching, etc.

· Professional certification in AWS or any other cloud is preferable

· Excellent problem solving and troubleshooting skills

· Must have good written and verbal communication skills

Key Responsibilities

Ambitious individuals who can work under their own direction towards agreed targets/goals.

Must be flexible with office hours to accommodate multinational client schedules.

Will be involved in solution designing from the conceptual stages through development cycle and deployments.

Be involved in development operations and support internal teams

Improve infrastructure uptime, performance, resilience, reliability through automation

Willing to learn new technologies and work on research-orientated projects

Proven interpersonal skills while contributing to team effort by accomplishing related results as needed.

Scope and deliver solutions with the ability to design solutions independently based on high-level architecture.

Independent thinking, ability to work in a fast-paced environment with creativity and brainstorming

http://www.banyandata.com
