What we look for:

As a DevOps Developer, you will contribute to a thriving and growing AI Governance Engineering team. You will work in a Kubernetes-based microservices environment to support our bleeding-edge cloud services. This will include custom solutions as well as open source DevOps tools (build and deploy automation, monitoring, and data gathering for our software delivery pipeline). You will also contribute to our continuous improvement and continuous delivery while increasing the maturity of DevOps and agile adoption practices.

Responsibilities:

- Ability to deploy software using orchestrators, scripts, and automation on hybrid and public clouds such as AWS

- Ability to write shell, Python, or other Unix scripts

- Working knowledge of Docker and Kubernetes

- Ability to create pipelines using Jenkins or any CI/CD tool and a GitOps tool such as ArgoCD

- Working knowledge of Git as a source control system, and of a defect tracking system

- Ability to debug and troubleshoot deployment issues

- Ability to use tools for faster resolution of issues

- Excellent communication and soft skills

- Passionate, with the ability to work and deliver in a multi-team environment

- Good team player

- Flexible and quick learner

- Ability to write Dockerfiles, Kubernetes YAML files, and Helm charts

- Experience with monitoring tools like Nagios, Prometheus and visualisation tools such as Grafana.

- Ability to write Ansible and Terraform scripts

- Linux system experience and administration

- Effective cross-functional leadership skills: working with engineering and operational teams to ensure systems are secure, scalable, and reliable.

- Ability to review deployment and operational environments, i.e., execute initiatives to reduce failure, troubleshoot issues across the entire infrastructure stack, expand monitoring capabilities, and manage technical operations.

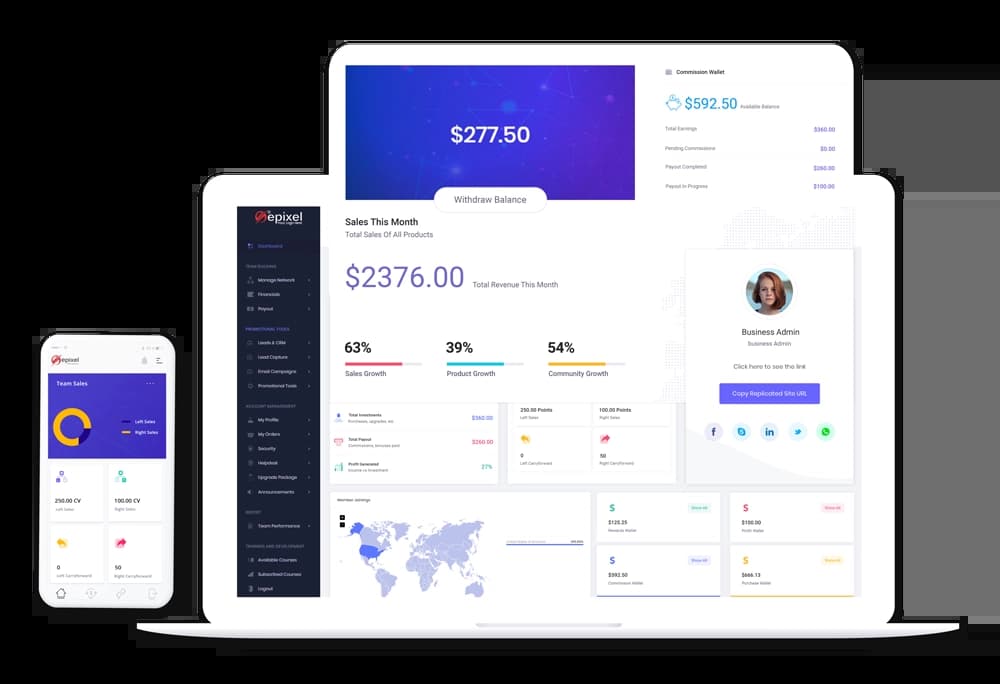

About Epixel MLM Software

Epixel MLM Software offers an advanced and sophisticated platform that guarantees quick business growth opportunities and helps to reach new customers and markets. Integrating your MLM business with Epixel MLM software provides you a stable back-office and commission processing system with features and tools that ensure maximum distributor engagement. Developed with the latest technologies and built-in features, Epixel can assure fast and real-time processing to return profitable results quickly. Epixel MLM system also supports custom requirements and integrations to adapt your business to the evolving requirements.

Want to join us? Contact our team at https://www.epixelmlmsoftware.com/contact-us

Similar jobs

Job Summary:

Seeking a seasoned SQL + ETL Developer with 4+ years of experience in managing large-scale datasets and cloud-based data pipelines. The ideal candidate is hands-on with MySQL, PySpark, AWS Glue, and ETL workflows, with proven expertise in AWS migration and performance optimization.

Key Responsibilities:

- Develop and optimize complex SQL queries and stored procedures to handle large datasets (100+ million records).

- Build and maintain scalable ETL pipelines using AWS Glue and PySpark.

- Work on data migration tasks in AWS environments.

- Monitor and improve database performance; automate key performance indicators and reports.

- Collaborate with cross-functional teams to support data integration and delivery requirements.

- Write shell scripts for automation and manage ETL jobs efficiently.

Required Skills:

- Strong experience with MySQL, complex SQL queries, and stored procedures.

- Hands-on experience with AWS Glue, PySpark, and ETL processes.

- Good understanding of AWS ecosystem and migration strategies.

- Proficiency in shell scripting.

- Strong communication and collaboration skills.

Nice to Have:

- Working knowledge of Python.

- Experience with AWS RDS.

Please Apply - https://zrec.in/L51Qf?source=CareerSite

About Us

Infra360 Solutions is a services company specializing in Cloud, DevSecOps, Security, and Observability solutions. We help technology companies adopt a DevOps culture in their organization by focusing on a long-term DevOps roadmap. We focus on identifying technical and cultural issues in the journey of successfully implementing DevOps practices in the organization and work with the respective teams to fix issues and increase overall productivity. We also run training sessions for developers to help them understand the importance of DevOps. We provide these services: DevOps, DevSecOps, FinOps, Cost Optimizations, CI/CD, Observability, Cloud Security, Containerization, Cloud Migration, Site Reliability, Performance Optimizations, SIEM and SecOps, Serverless automation, Well-Architected Review, MLOps, Governance, Risk & Compliance. We do assessments of technology architecture, security, governance, compliance, and DevOps maturity model for any technology company and help them optimize their cloud cost, streamline their technology architecture, and set up processes to improve the availability and reliability of their website and applications. We set up tools for monitoring, logging, and observability. We focus on bringing the DevOps culture to the organization to improve its efficiency and delivery.

Job Description

Job Title: Senior DevOps Engineer (Infrastructure/SRE)

Department: Technology

Location: Gurgaon

Work Mode: On-site

Working Hours: 10 AM - 7 PM

Terms: Permanent

Experience: 4-6 years

Education: B.Tech/MCA

Notice Period: Immediate

About Us

At Infra360.io, we are a next-generation cloud consulting and services company committed to delivering comprehensive, 360-degree solutions for cloud, infrastructure, DevOps, and security. We partner with clients to transform and optimize their technology landscape, ensuring resilience, scalability, cost efficiency and innovation.

Our core services include Cloud Strategy, Site Reliability Engineering (SRE), DevOps, Cloud Security Posture Management (CSPM), and related Managed Services. We specialize in driving operational excellence across multi-cloud environments, helping businesses achieve their goals with agility and reliability.

We thrive on ownership, collaboration, problem-solving, and excellence, fostering an environment where innovation and continuous learning are at the forefront. Join us as we expand and redefine what’s possible in cloud technology and infrastructure.

Role Summary

We are looking for a Senior DevOps Engineer (Infrastructure) to design, automate, and manage cloud-based and datacentre infrastructure for diverse projects. The ideal candidate will have deep expertise in a public cloud platform (AWS, GCP, or Azure), with a strong focus on cost optimization, security best practices, and infrastructure automation using tools like Terraform and CI/CD pipelines.

This role involves designing scalable architectures (containers, serverless, and VMs), managing databases, and ensuring system observability with tools like Prometheus and Grafana. Strong leadership, client communication, and team mentoring skills are essential. Experience with VPN technologies and configuration management tools (Ansible, Helm) is also critical. Multi-cloud experience and familiarity with APM tools are a plus.

Ideal Candidate Profile

- Solid 4-6 years of experience as a DevOps engineer with a proven track record of architecting and automating solutions on Cloud

- Experience in troubleshooting production incidents and handling high-pressure situations.

- Strong leadership skills and the ability to mentor team members and provide guidance on best practices.

- Bachelor's or Master's degree in Computer Science, Engineering, or a related field.

- Extensive experience with Kubernetes, Terraform, ArgoCD, and Helm.

- Strong with at least one public cloud (AWS, GCP, or Azure)

- Strong with Cost Optimization and Security Best practices

- Strong with Infrastructure automation using Terraform and CI/CD automation

- Strong with Configuration Management using Ansible, Helm, etc.

- Good with designing architectures (Containers, Serverless, VMs, etc.)

- Hands-on Experience working on Multiple Projects

- Strong with Client communication and requirements gathering

- Database management experience

- Good experience with Prometheus, Grafana & Alert Manager

- Able to manage multiple clients and take ownership of client issues.

- Experience with Git and coding best practices

- Proficiency in cloud networking, including VPCs, DNS, VPNs (OpenVPN, OpenSwan, Pritunl, Site-to-Site VPNs), load balancers, and firewalls, ensuring secure and efficient connectivity.

- Strong understanding of cloud security best practices, identity and access management (IAM), and compliance requirements for modern infrastructure.

Good to have

- Multi-cloud experience with AWS, GCP & Azure

- Experience with APM & Observability tools like New Relic, Datadog, and OpenTelemetry

- Proficiency in scripting languages (Python, Go) for automation and tooling to improve infrastructure and application reliability.

Key Responsibilities

- Design and Development:

  - Architect, design, and develop high-quality, scalable, and secure cloud-based software solutions.

  - Collaborate with product and engineering teams to translate business requirements into technical specifications.

  - Write clean, maintainable, and efficient code, following best practices and coding standards.

- Cloud Infrastructure:

  - Develop and optimise cloud-native applications, leveraging cloud services like AWS, Azure, or Google Cloud Platform (GCP).

  - Implement and manage CI/CD pipelines for automated deployment and testing.

  - Ensure the security, reliability, and performance of cloud infrastructure.

- Technical Leadership:

  - Mentor and guide junior engineers, providing technical leadership and fostering a collaborative team environment.

  - Participate in code reviews, ensuring adherence to best practices and high-quality code delivery.

  - Lead technical discussions and contribute to architectural decisions.

- Problem Solving and Troubleshooting:

  - Identify, diagnose, and resolve complex software and infrastructure issues.

  - Perform root cause analysis for production incidents and implement preventative measures.

- Continuous Improvement:

  - Stay up-to-date with the latest industry trends, tools, and technologies in cloud computing and software engineering.

  - Contribute to the continuous improvement of development processes, tools, and methodologies.

  - Drive innovation by experimenting with new technologies and solutions to enhance the platform.

- Collaboration:

  - Work closely with DevOps, QA, and other teams to ensure smooth integration and delivery of software releases.

  - Communicate effectively with stakeholders, including technical and non-technical team members.

- Client Interaction & Management:

  - Serve as a direct point of contact for multiple clients.

  - Handle the unique technical needs and challenges of two or more clients concurrently.

  - The role involves both direct interaction with clients and internal team coordination.

- Production Systems Management:

  - Must have extensive experience in managing, monitoring, and debugging production environments.

  - Troubleshoot complex issues and ensure that production systems are running smoothly with minimal downtime.

We are looking to fill the role of Kubernetes engineer. To join our growing team, please review the list of responsibilities and qualifications.

Kubernetes Engineer Responsibilities

- Install, configure, and maintain Kubernetes clusters.

- Develop Kubernetes-based solutions.

- Improve Kubernetes infrastructure.

- Work with other engineers to troubleshoot Kubernetes issues.

Kubernetes Engineer Requirements & Skills

- Kubernetes administration experience, including installation, configuration, and troubleshooting

- Kubernetes development experience

- Linux/Unix experience

- Strong analytical and problem-solving skills

- Excellent communication and interpersonal skills

- Ability to work independently and as part of a team

Looking for a GCP DevOps Engineer who can join immediately or within 15 days

Job Summary & Responsibilities:

Job Overview:

You will work in engineering and development teams to integrate and develop cloud solutions and virtualized deployments of software-as-a-service products. This will require understanding the software system architecture and function as well as performance and security requirements. The DevOps Engineer is also expected to have expertise in available cloud solutions and services, administration of virtual machine clusters, performance tuning and configuration of cloud computing resources, configuration of security, and scripting and automation of monitoring functions. This position requires the deployment and management of multiple virtual clusters and working with compliance organizations to support security audits. The design and selection of cloud computing solutions that are reliable, robust, extensible, and easy to migrate are also important.

Experience:

Experience working on billing and budgets for a GCP project - MUST

Experience working on optimizations on GCP based on vendor recommendations - NICE TO HAVE

Experience in implementing the recommendations on GCP

Architect Certifications on GCP - MUST

Excellent communication skills (both verbal & written) - MUST

Excellent documentation skills on processes, steps, and instructions - MUST

At least 2 years of experience on GCP.

Basic Qualifications:

● Bachelor’s/Master’s Degree in Engineering OR Equivalent.

● Extensive scripting or programming experience (Shell Script, Python).

● Extensive experience working with CI/CD (e.g. Jenkins).

● Extensive experience working with GCP, Azure, or Cloud Foundry.

● Experience working with databases (PostgreSQL, Elasticsearch).

● Must have a minimum of 2 years of experience and a GCP certification.

Benefits:

● Competitive salary.

● Work from anywhere.

● Learning and gaining experience rapidly.

● Reimbursement for basic working set up at home.

● Insurance (including top-up insurance for COVID).

Location:

Remote - work from anywhere.

Bito is a startup that is using AI (ChatGPT, OpenAI, etc.) to create game-changing productivity experiences for software developers in their IDE and CLI. Already, over 100,000 developers are using Bito to increase their productivity by 31% and are performing more than 1 million AI requests per week.

Our founders have previously started, built, and taken a company public (NASDAQ: PUBM), worth well over $1B. We are looking to take our learnings, learn a lot along with you, and do something more exciting this time. This journey will be incredibly rewarding, and is incredibly difficult!

We are building this company with a fully remote approach, with our main teams for time zone management in the US and in India. The founders happen to be in Silicon Valley and India.

We are hiring a DevOps Engineer to join our team.

Responsibilities:

- Collaborate with the development team to design, develop, and implement Java-based applications

- Perform analysis and provide recommendations for Cloud deployments and identify opportunities for efficiency and cost reduction

- Build and maintain clusters for various technologies such as Aerospike, Elasticsearch, RDS, Hadoop, etc

- Develop and maintain continuous integration (CI) and continuous delivery (CD) frameworks

- Provide architectural design and practical guidance to software development teams to improve resilience, efficiency, performance, and costs

- Evaluate and define/modify configuration management strategies and processes using Ansible

- Collaborate with DevOps engineers to coordinate work efforts and enhance team efficiency

- Take on leadership responsibilities to influence the direction, schedule, and prioritization of the automation effort

Requirements:

- Minimum 4 years of relevant work experience in a DevOps role

- At least 3 years of experience in designing and implementing infrastructure as code within the AWS/GCP/Azure ecosystem

- Expert knowledge of any cloud core services, big data managed services, Ansible, Docker, Terraform/CloudFormation, Amazon ECS/Kubernetes, Jenkins, and Nginx

- Expert proficiency in at least two scripting/programming languages such as Bash, Perl, Python, Go, Ruby, etc.

- Mastery in configuration automation tool sets such as Ansible, Chef, etc

- Proficiency with Jira, Confluence, and Git toolset

- Experience with automation tools for monitoring and alerts such as Nagios, Grafana, Graphite, CloudWatch, New Relic, etc.

- Proven ability to manage and prioritize multiple diverse projects simultaneously

What do we offer:

At Bito, we strive to create a supportive and rewarding work environment that enables our employees to thrive. Join a dynamic team at the forefront of generative AI technology.

· Work from anywhere

· Flexible work timings

· Competitive compensation, including stock options

· A chance to work in the exciting generative AI space

· Quarterly team offsite events

Roles and Responsibilities:

• Gather and analyse cloud infrastructure requirements

• Automating system tasks and infrastructure using a scripting language (Shell/Python/Ruby preferred), with configuration management tools (Ansible/Puppet/Chef), service registry and discovery tools (Consul and Vault, etc.), infrastructure orchestration tools (Terraform, CloudFormation), and automated imaging tools (Packer)

• Support existing infrastructure, analyse problem areas and come up with solutions

• An eye for monitoring – the candidate should be able to look at complex infrastructure and be able to figure out what to monitor and how.

• Work along with the Engineering team to help out with Infrastructure / Network automation needs.

• Deploy infrastructure as code and automate as much as possible

• Manage a team of DevOps engineers

Desired Profile:

• Understanding of provisioning of Bare Metal and Virtual Machines

• Working knowledge of Configuration management tools like Ansible/ Chef/ Puppet, Redfish.

• Experience in scripting languages like Ruby/ Python/ Shell Scripting

• Working knowledge of IP networking, VPNs, DNS, load balancing, firewalling & IPS concepts

• Strong Linux/Unix administration skills.

• Self-starter who can implement with minimal guidance

• Hands-on experience setting up CI/CD from scratch in Jenkins

• Experience with Managing K8s infrastructure

Q2 is seeking a team-focused Lead Release Engineer with a passion for managing releases to ensure we release quality software developed using Agile Scrum methodology. Working within the Development team, the Release Manager will work in a fast-paced environment with Development, Test Engineering, IT, Product Management, Design, Implementations, Support and other internal teams to drive efficiencies, transparency, quality and predictability in our software delivery pipeline.

RESPONSIBILITIES:

- Provide leadership on the cross-functional, development-focused software release process.

- Management of the product release cycle to new and existing clients including the build release process and any hotfix releases

- Support end-to-end process for production issue resolution including impact analysis of the issue, identifying the client impacts, tracking the fix through dev/testing and deploying the fix in various production branches.

- Work with engineering team to understand impacts of branches and code merges.

- Identify, communicate, and mitigate release delivery risks.

- Measure and monitor progress to ensure product features are delivered on time.

- Lead recurring release reporting/status meetings to include discussion around release scope, risks and challenges.

- Responsible for planning, monitoring, executing, and implementing the software release strategy.

- Establish completeness criteria for release of successfully tested software components and their dependencies to gate the delivery of releases to Implementation groups

- Serve as a liaison between business units to guarantee smooth and timely delivery of software packages to our Implementations and Support teams

- Create and analyze operational trends and data used for decision making, root cause analysis and performance measurement.

- Build partnerships, work collaboratively, and communicate effectively to achieve shared objectives.

- Make improvements to processes to improve the experience and delivery for internal and external customers.

- Responsible for ensuring that all security, availability, confidentiality and privacy policies and controls are adhered to.

EXPERIENCE AND KNOWLEDGE:

- Bachelor’s degree in Computer Science, or related field or equivalent experience.

- Minimum 4 years related experience in product release management role.

- Excellent understanding of software delivery lifecycle.

- Technical Background with experience in common Scrum and Agile practices preferred.

- Deep knowledge of software development processes, CI/CD pipelines and Agile Methodology

- Experience with tools like Jenkins, Bitbucket, Jira and Confluence.

- Familiarity with enterprise software deployment architecture and methodologies.

- Proven ability in building effective partnership with diverse groups in multiple locations/environments

- Ability to convey technical concepts to business-oriented teams.

- Capable of assessing and communicating risks and mitigations while managing ambiguity.

- Experience managing customer and internal expectations while understanding the organizational and customer impact.

- Strong organizational, process, leadership, and collaboration skills.

- Strong verbal, written, and interpersonal skills.

Our client is a call management solutions company that helps small to mid-sized businesses use its virtual call center to manage customer calls and queries. It is an AI and cloud-based call operating facility that is affordable as well as feature-optimized. The advanced features it offers, such as call recording, IVR, toll-free numbers, and call tracking, are based on automation and enhance call handling quality and process for each client as per their requirements. They service over 6,000 business clients, including large accounts like Flipkart and Uber.

- Being involved in Configuration Management, Web Services Architectures, DevOps Implementation, Build & Release Management, Database management, Backups, and Monitoring.

- Creating and managing CI/ CD pipelines for microservice architectures.

- Creating and managing application configuration.

- Researching and planning architectures and tools for smooth deployments.

- Logging, metrics and alerting management.

What you need to have:

- Proficient in the Linux command line and troubleshooting.

- Proficient in designing CI/CD pipelines using Jenkins. Experience in deployment using Ansible.

- Experience in microservices architecture deployment; hands-on experience with Docker, Kubernetes, and EKS.

- Knowledge of infrastructure management tools (Infrastructure as Code) such as Terraform, AWS CloudFormation, etc.

- Proficient in AWS services: deployment, monitoring, and troubleshooting of applications in AWS.

- Configuration management tools like Ansible, Chef, or Puppet.

- Proficient in deployment of applications behind load balancers and proxy servers such as Nginx and Apache.

- Proficient in Bash scripting; Python scripting is an advantage.

- Experience with logging, monitoring, and alerting tools like ELK (Elasticsearch, Logstash, Kibana), Nagios, Graylog, Splunk, Prometheus, and Grafana is a plus.

- Proficient in Configuration Management.

Our client is a call management solutions company that helps small to mid-sized businesses use its virtual call center to manage customer calls and queries. It is an AI and cloud-based call operating facility that is affordable as well as feature-optimized. The advanced features it offers, such as call recording, IVR, toll-free numbers, and call tracking, are based on automation and enhance call handling quality and process for each client as per their requirements. They service over 6,000 business clients, including large accounts like Flipkart and Uber.

- Being involved in Configuration Management, Web Services Architectures, DevOps Implementation, Build & Release Management, Database management, Backups, and Monitoring.

- Ensuring reliable operation of CI/ CD pipelines

- Orchestrating the provisioning, load balancing, configuration, monitoring, and billing of resources in the cloud environment in a highly automated manner

- Logging, metrics and alerting management.

- Creating Dockerfiles

- Creating Bash/ Python scripts for automation.

- Performing root cause analysis for production errors.

What you need to have:

- Proficient in the Linux command line and troubleshooting.

- Proficient in AWS services: deployment, monitoring, and troubleshooting of applications in AWS.

- Hands-on experience with CI tooling, preferably Jenkins.

- Proficient in deployment using Ansible.

- Knowledge of infrastructure management tools (Infrastructure as Code) such as Terraform, AWS CloudFormation, etc.

- Proficient in deployment of applications behind load balancers and proxy servers such as Nginx and Apache.

- Scripting languages: Bash, Python, Groovy.

- Experience with logging, monitoring, and alerting tools like ELK (Elasticsearch, Logstash, Kibana), Nagios, Graylog, Splunk, Prometheus, and Grafana is a plus.

Experience: 4-7 years

- Any scripting language: Python, Scala, shell, or Bash

- Cloud: AWS

- Database: Relational (SQL) and non-relational (NoSQL)

- CI/CD tools and version control