VMax eSolutions India Pvt Ltd

https://vmaxindia.com

Jobs at VMax eSolutions India Pvt Ltd

The recruiter has not been active on this job recently. You may apply but please expect a delayed response.

We are seeking an experienced AI Architect to design, build, and scale production-ready AI voice conversation agents deployed locally (on-prem / edge / private cloud) and optimized for GPU-accelerated, high-throughput environments.

You will own the end-to-end architecture of real-time voice systems, including speech recognition, LLM orchestration, dialog management, speech synthesis, and low-latency streaming pipelines, all designed for reliability, scalability, and cost efficiency.

This role is highly hands-on and strategic, bridging research, engineering, and production infrastructure.

Key Responsibilities

Architecture & System Design

- Design low-latency, real-time voice agent architectures for local/on-prem deployment

- Define scalable architectures for ASR → LLM → TTS pipelines

- Optimize systems for GPU utilization, concurrency, and throughput

- Architect fault-tolerant, production-grade voice systems (HA, monitoring, recovery)

Voice & Conversational AI

- Design and integrate:

  - Automatic Speech Recognition (ASR)

  - Natural Language Understanding / LLMs

  - Dialogue management & conversation state

  - Text-to-Speech (TTS)

- Build streaming voice pipelines with sub-second response times

- Enable multi-turn, interruptible, natural conversations

Model & Inference Engineering

- Deploy and optimize local LLMs and speech models (quantization, batching, caching)

- Select and fine-tune open-source models for voice use cases

- Implement efficient inference using TensorRT, ONNX, CUDA, vLLM, Triton, or similar

Infrastructure & Production

- Design GPU-based inference clusters (bare metal or Kubernetes)

- Implement autoscaling, load balancing, and GPU scheduling

- Establish monitoring, logging, and performance metrics for voice agents

- Ensure security, privacy, and data isolation for local deployments

Leadership & Collaboration

- Set architectural standards and best practices

- Mentor ML and platform engineers

- Collaborate with product, infra, and applied research teams

- Drive decisions from prototype → production → scale

Required Qualifications

Technical Skills

- 7+ years in software / ML systems engineering

- 3+ years designing production AI systems

- Strong experience with real-time voice or conversational AI systems

- Deep understanding of LLMs, ASR, and TTS pipelines

- Hands-on experience with GPU inference optimization

- Strong Python and/or C++ background

- Experience with Linux, Docker, Kubernetes

AI & ML Expertise

- Experience deploying open-source LLMs locally

- Knowledge of model optimization:

  - Quantization

  - Batching

  - Streaming inference

- Familiarity with voice models (e.g., Whisper-like ASR, neural TTS)

Systems & Scaling

- Experience with high-QPS, low-latency systems

- Knowledge of distributed systems and microservices

- Understanding of edge or on-prem AI deployments

Preferred Qualifications

- Experience building AI voice agents or call automation systems

- Background in speech processing or audio ML

- Experience with telephony, WebRTC, SIP, or streaming audio

- Familiarity with Triton Inference Server / vLLM

- Prior experience as Tech Lead or Principal Engineer

What We Offer

- Opportunity to architect state-of-the-art AI voice systems

- Work on real-world, high-scale production deployments

- Competitive compensation and equity (if applicable)

- High ownership and technical influence

- Collaboration with top-tier AI and infrastructure talent

Company Description

VMax e-Solutions India Private Limited, based in Hyderabad, is a dynamic organization specializing in Open Source ERP Product Development and Mobility Solutions. As an ISO 9001:2015 and ISO 27001:2013 certified company, VMax is dedicated to delivering tailor-made and scalable products, with a strong focus on e-Governance projects across multiple states in India. The company's innovative technologies aim to solve real-life problems and enhance the daily services accessed by millions of citizens. With a culture of continuous learning and growth, VMax provides its team members opportunities to develop expertise, take ownership, and grow their careers through challenging and impactful work.

About the Role

We’re hiring a Senior Data Scientist with deep real-time voice AI experience and strong backend engineering skills.

You’ll own and scale our end-to-end voice agent pipeline that powers AI SDRs, customer support agents, and internal automation agents on calls. This is a hands-on, highly technical role where you’ll design and optimize low-latency, high-reliability voice systems.

You’ll work closely with our founders, product, and platform teams, with significant ownership over architecture and benchmarks.

What You’ll Do

1. Own the voice stack end-to-end – from telephony / WebRTC entrypoints to STT, turn-taking, LLM reasoning, and TTS back to the caller.

2. Design for real-time – architect and optimize streaming pipelines for sub-second latency, barge-in, interruptions, and graceful recovery on bad networks.

3. Integrate and tune models – evaluate, select, and integrate STT/TTS/LLM/VAD providers (and self-hosted models) for different use-cases, balancing quality, speed, and cost.

4. Build orchestration & tooling – implement agent orchestration logic, evaluation frameworks, call simulators, and dashboards for latency, quality, and reliability.

5. Harden for production – ensure high availability, observability, and robust fault-tolerance for thousands of concurrent calls in customer VPCs.

6. Shape the voice roadmap – influence how voice fits into our broader Agentic OS vision (simulation, analytics, multi-agent collaboration, etc.).

You’re a Great Fit If You Have

1. 6+ years of software engineering experience (backend or full-stack) in production systems.

2. Strong experience building real-time voice agents or similar systems using:

   - STT / ASR (e.g. Whisper, Deepgram, AssemblyAI, AWS Transcribe, GCP Speech)

   - TTS (e.g. ElevenLabs, PlayHT, AWS Polly, Azure Neural TTS)

   - VAD / turn-taking and streaming audio pipelines

   - LLMs (e.g. OpenAI, Anthropic, Gemini, local models)

3. Proven track record designing and operating low-latency, high-throughput streaming systems (WebRTC, gRPC, WebSockets, Kafka, etc.).

4. Hands-on experience integrating ML models into live, user-facing applications with real-time inference & monitoring.

5. Solid backend skills with Python and TypeScript/Node.js; strong fundamentals in distributed systems, concurrency, and performance optimization.

6. Experience with cloud infrastructure – especially AWS (EKS, ECS, Lambda, SQS/Kafka, API Gateway, load balancers).

7. Comfortable working in Kubernetes / Docker environments, including logging, metrics, and alerting.

8. Startup DNA – at least 2 years in an early or mid-stage startup where you shipped fast, owned outcomes, and worked close to the customer.

Nice to Have

1. Experience self-hosting AI models (ASR / TTS / LLMs) and optimizing them for latency, cost, and reliability.

2. Telephony integration experience (e.g. Twilio, Vonage, Aircall, SignalWire, or similar).

3. Experience with evaluation frameworks for conversational agents (call quality scoring, hallucination checks, compliance rules, etc.).

4. Background in speech processing, signal processing, or dialog systems.

5. Experience deploying into enterprise VPC / on-prem environments and working with security/compliance constraints.

Similar companies

About the company

The Wissen Group was founded in the year 2000. Wissen Technology, a part of Wissen Group, was established in the year 2015. Wissen Technology is a specialized technology company that delivers high-end consulting for organizations in the Banking & Finance, Telecom, and Healthcare domains.

With offices in the US, India, the UK, Australia, Mexico, and Canada, we offer an array of services including Application Development, Artificial Intelligence & Machine Learning, Big Data & Analytics, Visualization & Business Intelligence, Robotic Process Automation, Cloud, Mobility, Agile & DevOps, and Quality Assurance & Test Automation.

Leveraging our multi-site operations in the USA and India and availability of world-class infrastructure, we offer a combination of on-site, off-site and offshore service models. Our technical competencies, proactive management approach, proven methodologies, committed support and the ability to quickly react to urgent needs make us a valued partner for any kind of Digital Enablement Services, Managed Services, or Business Services.

We believe that the technology and thought leadership that we command in the industry is the direct result of the kind of people we have been able to attract, to form this organization (you are one of them!).

Our workforce consists of 1000+ highly skilled professionals, with leadership and senior management executives who have graduated from top universities such as MIT, Wharton, the IITs, the IIMs, and BITS, and who have rich work experience in some of the biggest companies in the world.

Wissen Technology has been certified as a Great Place to Work®. Wissen is committed to providing its people the best possible opportunities and careers, which extends to providing the best possible experience and value to our clients.

Jobs

490

About the company

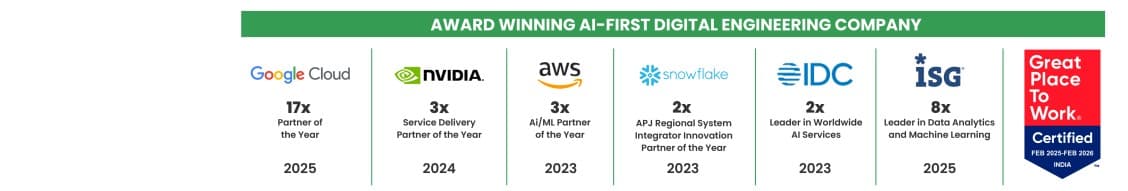

Quantiphi is an award-winning AI-first digital engineering company driven by the desire to reimagine and realize transformational opportunities at the heart of the business. Since its inception in 2013, Quantiphi has solved the toughest and most complex business problems by combining deep industry experience, disciplined cloud and data-engineering practices, and cutting-edge artificial intelligence research to achieve accelerated and quantifiable business results.

Jobs

12

About the company

Your Go-To AI Consultancy For AI Research, AI Products, AI Solutions, AI MVP Design, Idea Validation

Jobs

22

About the company

VDart - We are a global, emerging technology staffing solutions provider with expertise in SMAC (Social, Mobile, Analytics & Cloud), Enterprise Resource Planning (Oracle Applications, SAP), Business Intelligence (Hyperion), and Infrastructure services. We work with leading System Integrators in the private and public sectors. We have deep industry expertise and focus in the BFSI, Manufacturing, Energy & Utility, Healthcare, and Technology sectors. Our scope, knowledge, industry expertise, and global footprint have enabled us to provide best-in-industry solutions. With our core focus on emerging technologies, we have provided global technology workforce solutions in the USA, Canada, Mexico, Brazil, the UK, Australia & India. We take pride in delivering specialized talent, superior performance, and seamless execution to meet the challenging business needs of customers worldwide.

Jobs

15

About the company

BPO Hirings

Jobs

16

About the company

Creatif Technologies Pvt. Ltd., formerly known as Shreeji Soft, was established in 2001 with a mission to deliver customer-centric and high-quality software solutions. Over the years, we have grown into a trusted partner for custom software development, working closely with businesses to simplify processes and maximize productivity. Our focus is always on innovation, performance, and customer satisfaction.

Jobs

3