Cymetrix Software

https://cymetrixsoft.com

About

Cymetrix is a global CRM and Data Analytics consulting company. It has expertise across industries such as manufacturing, retail, BFSI, NPS, Pharma, and Healthcare. It has successfully implemented CRM and related business process integrations for more than 50 clients.

Catalyzing Tangible Growth: Our pivotal role involves facilitating and driving actual growth for clients. We're committed to becoming a catalyst for dynamic transformation within the business landscape.

Niche focus, limitless growth: Cymetrix specializes in CRM, Data, and AI-powered technologies, offering tailored solutions and profound insights. This focused approach paves the way for exponential growth opportunities for clients.

A Digital Transformation Partner: Cymetrix aims to deliver the necessary support, expertise, and solutions that drive businesses to innovate with unwavering assurance. Our commitment fosters a culture of continuous improvement and growth, ensuring your innovation journey is successful.

The Cymetrix Software team is under the leadership of agile, entrepreneurial, and veteran technology experts who are devoted to augmenting the value of the solutions they are delivering.

Our certified team of 150+ consultants excels in Salesforce products. Our experience in designing and developing products and IPs on the Salesforce platform enables us to design industry-specific, customized solutions with intuitive user interfaces.

Jobs at Cymetrix Software

Mandatory Skills: ETL, Data Warehousing, Python, GCP Services

Required Skills:

● Bachelor’s degree in Computer Science or similar field or equivalent work experience.

● 4+ years of experience on Data Warehousing, Data Engineering or Data Integration projects.

● Expert with data warehousing concepts, strategies, and tools.

● Strong SQL background.

● Strong knowledge of relational databases like SQL Server, PostgreSQL, MySQL.

● Strong experience in GCP: Google BigQuery, Cloud SQL, Composer (Airflow), Dataflow, Dataproc, Cloud Functions, and GCS.

● Knowledge of SQL Server Reporting Services (SSRS) and SQL Server Integration Services (SSIS) is good to have.

● Knowledge of AWS and Azure Cloud is a plus.

● Experience in Informatica PowerExchange for Mainframe, Salesforce, and other new-age data sources.

● Experience in integration using APIs, XML, JSONs etc.

● In-depth understanding of database management systems, online analytical processing (OLAP) and ETL (Extract, transform, load) framework, data-warehousing and Data Lakes

● Good understanding of SDLC, Agile and Scrum processes.

● Strong problem-solving, multi-tasking, and organizational skills.

● Highly proficient in working with large volumes of business data and strong understanding of database design and implementation.

● Good written and verbal communication skills.

● Demonstrated experience of leading a team spread across multiple locations.

Role & Responsibilities:

● Work with business users and other stakeholders to understand business processes.

● Ability to design and implement Dimensional and Fact tables

● Identify and implement data transformation/cleansing requirements

● Develop a highly scalable, reliable, and high-performance data processing pipeline to extract, transform and load data from various systems to the Enterprise Data Warehouse

● Develop conceptual, logical, and physical data models with associated metadata including data lineage and technical data definitions

● Design, develop and maintain ETL workflows and mappings using the appropriate data load technique

● Provide research, high-level design, and estimates for data transformation and data integration from source applications to end-user BI solutions.

● Provide production support of ETL processes to ensure timely completion and availability of data in the data warehouse for reporting use.

● Analyze and resolve problems and provide technical assistance as necessary. Partner with the BI team to evaluate, design, develop BI reports and dashboards according to functional specifications while maintaining data integrity and data quality.

● Work collaboratively with key stakeholders to translate business information needs into well-defined data requirements to implement the BI solutions.

● Leverage transactional information, data from ERP, CRM, HRIS applications to model, extract and transform into reporting & analytics.

● Define and document the use of BI through user experience/use cases, prototypes, test, and deploy BI solutions.

● Develop and support data governance processes, analyze data to identify and articulate trends, patterns, outliers, quality issues, and continuously validate reports, dashboards and suggest improvements.

● Train business end-users, IT analysts, and developers.

The recruiter has not been active on this job recently. You may apply but please expect a delayed response.

Location: Andheri, Mumbai

Work Mode: Hybrid

Working Days: Monday to Friday (5 Days Working)

About the Role

We are looking for a Junior Technical Recruiter / Fresher who is passionate about building a career in IT recruitment. If you are eager to learn, have strong communication skills, and want to grow in a fast-paced hiring environment, this role is for you.

Key Responsibilities

- Source and screen candidates for various IT roles using job portals, social media, and networking.

- Understand job requirements and match relevant profiles.

- Conduct initial HR screening and coordinate interviews.

- Maintain candidate database and ensure timely follow-ups.

- Support the senior recruitment team in daily hiring activities.

What We’re Looking For

- Freshers or candidates with 0–1 year experience in recruitment.

- Strong interest in IT hiring and willingness to learn new technologies.

- Good communication and interpersonal skills.

- Basic knowledge of hiring tools (Naukri, LinkedIn, etc.) is a plus.

- Immediate joiners preferred.

Why Join Us?

- Structured training and mentorship.

- Fast growth opportunities in IT recruitment.

- Friendly work culture with hybrid flexibility.

- Opportunity to work on niche and emerging tech roles.

The recruiter has not been active on this job recently. You may apply but please expect a delayed response.

Remote opening

min 3.5 years

What you’ll do:

You will work as a senior software engineer in the healthcare domain, focusing on module-level integration and collaboration across other areas of projects, and helping healthcare organizations achieve their business goals through full-stack technologies, cloud services, and DevOps. You will work with architects from other specialties, such as cloud engineering, data engineering, and ML engineering, to create platforms, solutions, and applications that cater to the latest trends in the healthcare industry, such as digital diagnosis, software as a medical product, and AI marketplaces.

Role & Responsibilities:

We are looking for a Full Stack Developer who is motivated to combine the art of design with programming. Responsibilities include translating UI/UX design wireframes into code that produces the visual elements of the application. You will work with the UI/UX designer and bridge the gap between graphical design and technical implementation, taking an active role on both sides and defining how the application looks as well as how it works.

• Develop new user-facing features

• Build reusable code and libraries for future use

• Ensure the technical feasibility of UI/UX designs

• Optimize application for maximum speed and scalability

• Assure that all user input is validated before submitting to back-end

• Collaborate with other team members and stakeholders

• Provide stable technical solutions that are robust and scalable as per business needs

Skills expectation:

• Must have

o Frontend:

Proficient understanding of web markup, including HTML5, CSS3

Basic understanding of server-side CSS pre-processing platforms, such as LESS and SASS

Proficient understanding of client-side scripting and JavaScript frameworks, including jQuery

Good understanding of at least one of the advanced JavaScript libraries and frameworks such as AngularJS, KnockoutJS, BackboneJS, ReactJS etc.

Familiarity with one or more modern front-end frameworks such as Angular 15+, React, VueJS, Backbone.

Good understanding of asynchronous request handling, partial page updates, and AJAX.

Proficient understanding of cross-browser compatibility issues and ways to work around them.

Experience with generic Angular testing frameworks

The recruiter has not been active on this job recently. You may apply but please expect a delayed response.

Must have skills:

1. GCP - GCS, PubSub, Dataflow or DataProc, Bigquery, Airflow/Composer, Python(preferred)/Java

2. ETL on GCP Cloud - Build pipelines (Python/Java) + Scripting, Best Practices, Challenges

3. Knowledge of batch and streaming data ingestion; build end-to-end data pipelines on GCP

4. Knowledge of databases (SQL, NoSQL), on-premise and on-cloud, SQL vs NoSQL, types of NoSQL databases (at least 2 databases)

5. Data Warehouse concepts - Beginner to Intermediate level

Role & Responsibilities:

● Work with business users and other stakeholders to understand business processes.

● Ability to design and implement Dimensional and Fact tables

● Identify and implement data transformation/cleansing requirements

● Develop a highly scalable, reliable, and high-performance data processing pipeline to extract, transform and load data from various systems to the Enterprise Data Warehouse

● Develop conceptual, logical, and physical data models with associated metadata including data lineage and technical data definitions

● Design, develop and maintain ETL workflows and mappings using the appropriate data load technique

● Provide research, high-level design, and estimates for data transformation and data integration from source applications to end-user BI solutions.

● Provide production support of ETL processes to ensure timely completion and availability of data in the data warehouse for reporting use.

● Analyze and resolve problems and provide technical assistance as necessary. Partner with the BI team to evaluate, design, develop BI reports and dashboards according to functional specifications while maintaining data integrity and data quality.

● Work collaboratively with key stakeholders to translate business information needs into well-defined data requirements to implement the BI solutions.

● Leverage transactional information, data from ERP, CRM, HRIS applications to model, extract and transform into reporting & analytics.

● Define and document the use of BI through user experience/use cases, prototypes, test, and deploy BI solutions.

● Develop and support data governance processes, analyze data to identify and articulate trends, patterns, outliers, quality issues, and continuously validate reports, dashboards and suggest improvements.

● Train business end-users, IT analysts, and developers.

The recruiter has not been active on this job recently. You may apply but please expect a delayed response.

Advanced SQL, data modeling skills - designing Dimensional Layer, 3NF, denormalized views & semantic layer, Expertise in GCP services

Role & Responsibilities:

● Design and implement robust semantic layers for data systems on Google Cloud Platform (GCP)

● Develop and maintain complex data models, including dimensional models, 3NF structures, and denormalized views

● Write and optimize advanced SQL queries for data extraction, transformation, and analysis

● Utilize GCP services to create scalable and efficient data architectures

● Collaborate with cross-functional teams to translate business requirements (specified in mapping sheets or legacy DataStage jobs) into effective data models

● Implement and maintain data warehouses and data lakes on GCP

● Design and optimize ETL/ELT processes for large-scale data integration

● Ensure data quality, consistency, and integrity across all data models and semantic layers

● Develop and maintain documentation for data models, semantic layers, and data flows

● Participate in code reviews and implement best practices for data modeling and database design

● Optimize database performance and query execution on GCP

● Provide technical guidance and mentorship to junior team members

● Stay updated with the latest trends and advancements in data modeling, GCP services, and big data technologies

● Collaborate with data scientists and analysts to enable efficient data access and analysis

● Implement data governance and security measures within the semantic layer and data model

The recruiter has not been active on this job recently. You may apply but please expect a delayed response.

Bangalore / Chennai

- Hands-on data modelling for OLTP and OLAP systems

- In-depth knowledge of Conceptual, Logical and Physical data modelling

- Strong understanding of Indexing, partitioning, data sharding, with practical experience of having done the same

- Strong understanding of variables impacting database performance for near-real-time reporting and application interaction.

- Should have working experience on at least one data modelling tool, preferably DBSchema, Erwin

- Good understanding of GCP databases like AlloyDB, CloudSQL, and BigQuery.

- Functional knowledge of the mutual fund industry is a plus

Role & Responsibilities:

● Work with business users and other stakeholders to understand business processes.

● Ability to design and implement Dimensional and Fact tables

● Identify and implement data transformation/cleansing requirements

● Develop a highly scalable, reliable, and high-performance data processing pipeline to extract, transform and load data from various systems to the Enterprise Data Warehouse

● Develop conceptual, logical, and physical data models with associated metadata including data lineage and technical data definitions

● Design, develop and maintain ETL workflows and mappings using the appropriate data load technique

● Provide research, high-level design, and estimates for data transformation and data integration from source applications to end-user BI solutions.

● Provide production support of ETL processes to ensure timely completion and availability of data in the data warehouse for reporting use.

● Analyze and resolve problems and provide technical assistance as necessary. Partner with the BI team to evaluate, design, develop BI reports and dashboards according to functional specifications while maintaining data integrity and data quality.

● Work collaboratively with key stakeholders to translate business information needs into well-defined data requirements to implement the BI solutions.

● Leverage transactional information, data from ERP, CRM, HRIS applications to model, extract and transform into reporting & analytics.

● Define and document the use of BI through user experience/use cases, prototypes, test, and deploy BI solutions.

● Develop and support data governance processes, analyze data to identify and articulate trends, patterns, outliers, quality issues, and continuously validate reports, dashboards and suggest improvements.

● Train business end-users, IT analysts, and developers.

Required Skills:

● Bachelor’s degree in Computer Science or similar field or equivalent work experience.

● 5+ years of experience on Data Warehousing, Data Engineering or Data Integration projects.

● Expert with data warehousing concepts, strategies, and tools.

● Strong SQL background.

● Strong knowledge of relational databases like SQL Server, PostgreSQL, MySQL.

● Strong experience in GCP: Google BigQuery, Cloud SQL, Composer (Airflow), Dataflow, Dataproc, Cloud Functions, and GCS.

● Knowledge of SQL Server Reporting Services (SSRS) and SQL Server Integration Services (SSIS) is good to have.

● Knowledge of AWS and Azure Cloud is a plus.

● Experience in Informatica PowerExchange for Mainframe, Salesforce, and other new-age data sources.

● Experience in integration using APIs, XML, JSONs etc.

The recruiter has not been active on this job recently. You may apply but please expect a delayed response.

1. GCP - GCS, PubSub, Dataflow or DataProc, Bigquery, BQ optimization, Airflow/Composer, Python(preferred)/Java

2. ETL on GCP Cloud - Build pipelines (Python/Java) + Scripting, Best Practices, Challenges

3. Knowledge of batch and streaming data ingestion; build end-to-end data pipelines on GCP

4. Knowledge of databases (SQL, NoSQL), on-premise and on-cloud, SQL vs NoSQL, types of NoSQL databases (at least 2 databases)

5. Data Warehouse concepts - Beginner to Intermediate level

6. Data Modeling, GCP Databases, DB Schema (or similar)

7. Hands-on data modelling for OLTP and OLAP systems

8. In-depth knowledge of Conceptual, Logical and Physical data modelling

9. Strong understanding of indexing, partitioning, and data sharding, with practical experience of having done the same

10. Strong understanding of variables impacting database performance for near-real-time reporting and application interaction.

11. Should have working experience on at least one data modelling tool, preferably DBSchema, Erwin

12. Good understanding of GCP databases like AlloyDB, CloudSQL, and BigQuery.

13. Functional knowledge of the mutual fund industry is a plus.

Should be willing to work from Chennai; office presence is mandatory.

Role & Responsibilities:

● Work with business users and other stakeholders to understand business processes.

● Ability to design and implement Dimensional and Fact tables

● Identify and implement data transformation/cleansing requirements

● Develop a highly scalable, reliable, and high-performance data processing pipeline to extract, transform and load data from various systems to the Enterprise Data Warehouse

● Develop conceptual, logical, and physical data models with associated metadata including data lineage and technical data definitions

● Design, develop and maintain ETL workflows and mappings using the appropriate data load technique

● Provide research, high-level design, and estimates for data transformation and data integration from source applications to end-user BI solutions.

● Provide production support of ETL processes to ensure timely completion and availability of data in the data warehouse for reporting use.

● Analyze and resolve problems and provide technical assistance as necessary. Partner with the BI team to evaluate, design, develop BI reports and dashboards according to functional specifications while maintaining data integrity and data quality.

● Work collaboratively with key stakeholders to translate business information needs into well-defined data requirements to implement the BI solutions.

● Leverage transactional information, data from ERP, CRM, HRIS applications to model, extract and transform into reporting & analytics.

● Define and document the use of BI through user experience/use cases, prototypes, test, and deploy BI solutions.

● Develop and support data governance processes, analyze data to identify and articulate trends, patterns, outliers, quality issues, and continuously validate reports, dashboards and suggest improvements.

● Train business end-users, IT analysts, and developers.

The recruiter has not been active on this job recently. You may apply but please expect a delayed response.

Full Stack Developer

Location: Hyderabad

Experience: 7+ Years

Type: BCS - Business Consulting Services

RESPONSIBILITIES:

• Strong programming skills in Node JS [Must], React JS, Android and Kotlin [Must]

• Hands-on experience in UI development with a good understanding of UX.

• Hands on Experience in Database design and management

• Hands on Experience to create and maintain backend-framework for mobile applications.

• Hands-on development experience on cloud-based platforms like GCP/Azure/AWS

• Ability to manage and provide technical guidance to the team.

• Strong experience in designing APIs using RAML, Swagger, etc.

• Service Definition Development.

• API Standards, Security, Policies Definition and Management.

REQUIRED EXPERIENCE:

* Bachelor’s and/or master's degree in computer science or equivalent work experience

* Excellent analytical, problem solving, and communication skills.

* 7+ years of software engineering experience in a multi-national company

* 6+ years of development experience in Kotlin, Node and React JS

* 3+ Year(s) experience creating solutions in native public cloud (GCP, AWS or Azure)

* Experience with Git or similar version control system, continuous integration

* Proficiency in automated unit test development practices and design methodologies

* Fluent English

Proficient in Looker Action, Looker Dashboarding, Looker Data Entry, LookML, SQL Queries, BigQuery, Looker Studio, GCP.

Remote Working

2 pm to 12 am IST or

10:30 AM to 7:30 PM IST

Sunday to Thursday

Responsibilities:

● Create and maintain LookML code, which defines data models, dimensions, measures, and relationships within Looker.

● Develop reusable LookML components to ensure consistency and efficiency in report and dashboard creation.

● Build and customize dashboards that incorporate data visualizations, such as charts and graphs, to present insights effectively.

● Write complex SQL queries when necessary to extract and manipulate data from underlying databases and also optimize SQL queries for performance.

● Connect Looker to various data sources, including databases, data warehouses, and external APIs.

● Identify and address bottlenecks that affect report and dashboard loading times, and optimize Looker performance by tuning queries, caching strategies, and exploring indexing options.

● Configure user roles and permissions within Looker to control access to sensitive data, and implement data security best practices, including row-level and field-level security.

● Develop custom applications or scripts that interact with Looker's API for automation and integration with other tools and systems.

● Use version control systems (e.g., Git) to manage LookML code changes and collaborate with other developers.

● Provide training and support to business users, helping them navigate and use Looker effectively.

● Diagnose and resolve technical issues related to Looker, data models, and reports.

Skills Required:

● Experience in Looker's modeling language, LookML, including data models, dimensions, and measures.

● Strong SQL skills for writing and optimizing database queries across different SQL databases (GCP/BQ preferable)

● Knowledge of data modeling best practices

● Proficient in BigQuery, billing data analysis, GCP billing, unit costing, and invoicing, with the ability to recommend cost optimization strategies.

● Previous experience in FinOps engagements is a plus

● Proficiency in ETL processes for data transformation and preparation.

● Ability to create effective data visualizations and reports using Looker’s dashboard tools.

● Ability to optimize Looker performance by fine-tuning queries, caching strategies, and indexing.

● Familiarity with related tools and technologies, such as data warehousing (e.g., BigQuery), data transformation tools (e.g., Apache Spark), and scripting languages (e.g., Python).

Similar companies

About the company

About Us

Incubyte is an AI-first software development agency built on the principles of software craftsmanship—where how we build is just as important as what we build. We partner with organizations across stages, from enterprises looking to scale and modernize to early-stage founders bringing new ideas to life.

At Incubyte, AI is deeply integrated across the software development lifecycle to drive speed, efficiency, and smarter outcomes. Guided by Software Craftsmanship values and Extreme Programming practices, we combine high velocity with disciplined engineering to deliver reliable, high-impact solutions.

We don’t just build software—we incubate dedicated engineering teams. From designing systems to shaping team structures and organizational strategy, we enable our clients to launch and scale products that are relevant today and resilient for the future.

Whether you’re scaling an existing product, building from scratch, or optimizing manual processes, we help you move faster with confidence:

- Scale and modernize your product

- Launch quickly and iterate continuously

- Automate processes for non-linear growth

- Build systems that are stable, predictable, and measurable

Our approach is rooted in ownership. As a DevOps-driven organization, our engineers take responsibility for the entire lifecycle—from development to release—ensuring quality at every step.

Founded by product professionals, we bring a strong product mindset into services. We’re driven by curiosity, continuous learning, and a passion for building great software the right way.

We’re always looking for people who care deeply about code, craftsmanship, and growth. Join us if you’re excited to build, learn, and make an impact.

Jobs

6

About the company

Beyond Seek is a team of R.A.R.E individuals who're solving impactful problems using the best tools available today!

Jobs

1

About the company

The Wissen Group was founded in the year 2000. Wissen Technology, a part of Wissen Group, was established in the year 2015. Wissen Technology is a specialized technology company that delivers high-end consulting for organizations in the Banking & Finance, Telecom, and Healthcare domains.

With offices in US, India, UK, Australia, Mexico, and Canada, we offer an array of services including Application Development, Artificial Intelligence & Machine Learning, Big Data & Analytics, Visualization & Business Intelligence, Robotic Process Automation, Cloud, Mobility, Agile & DevOps, Quality Assurance & Test Automation.

Leveraging our multi-site operations in the USA and India and availability of world-class infrastructure, we offer a combination of on-site, off-site and offshore service models. Our technical competencies, proactive management approach, proven methodologies, committed support and the ability to quickly react to urgent needs make us a valued partner for any kind of Digital Enablement Services, Managed Services, or Business Services.

We believe that the technology and thought leadership that we command in the industry is the direct result of the kind of people we have been able to attract, to form this organization (you are one of them!).

Our workforce consists of 1000+ highly skilled professionals, with leadership and senior management executives who have graduated from top universities such as MIT, Wharton, IITs, IIMs, and BITS, and who bring rich work experience from some of the biggest companies in the world.

Wissen Technology has been certified as a Great Place to Work®. The technology and thought leadership that the company commands in the industry is the direct result of the kind of people Wissen has been able to attract. Wissen is committed to providing them the best possible opportunities and careers, which extends to providing the best possible experience and value to our clients.

Jobs

499

About the company

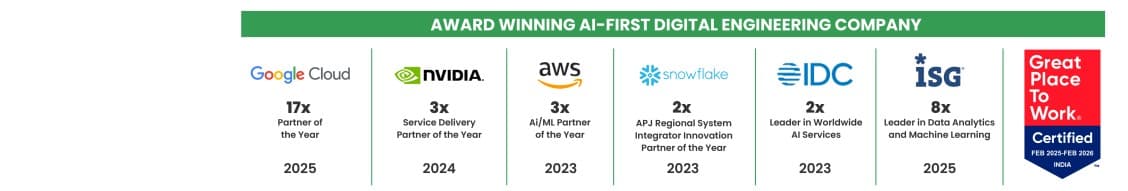

Quantiphi is an award-winning AI-first digital engineering company driven by the desire to reimagine and realize transformational opportunities at the heart of the business. Since its inception in 2013, Quantiphi has solved the toughest and most complex business problems by combining deep industry experience, disciplined cloud, and data-engineering practices, and cutting-edge artificial intelligence research to achieve accelerated and quantifiable business results.

Jobs

8

About the company

Stairio is a digital infrastructure company building scalable online systems for modern businesses.

We help service-driven brands establish strong digital foundations through high-performance websites, booking systems, management dashboards, and integrated payment solutions. Our goal is to give businesses ownership, control, and long-term digital assets that generate measurable revenue.

Jobs

2
