Entropik Tech

https://entropiktech.com

About

Optimize your Ads, Trailers, Products, and User Experience (UX) using Emotion Recognition Technology

Entropik Tech is a leading emotion artificial intelligence (AI) company that helps redesign experiences by reading people's emotions. As part of its mission to Humanise Experiences, it has built AI technologies that recognize human emotions rapidly and at scale from facial expressions, eye movement, voice tone, and brainwaves. Its broad product range lets users evaluate experiences accurately and meaningfully across Media, Digital, and Shopper encounters.

Around 150 companies worldwide, in industries such as consumer packaged goods (CPG), retail, media and publishing, telecommunications, and financial services, get Emotion Insights from Entropik Tech. The firm has offices in North America, Europe, the Middle East, India, and Southeast Asia.

Jobs at Entropik Tech

No jobs found

Similar companies

About the company

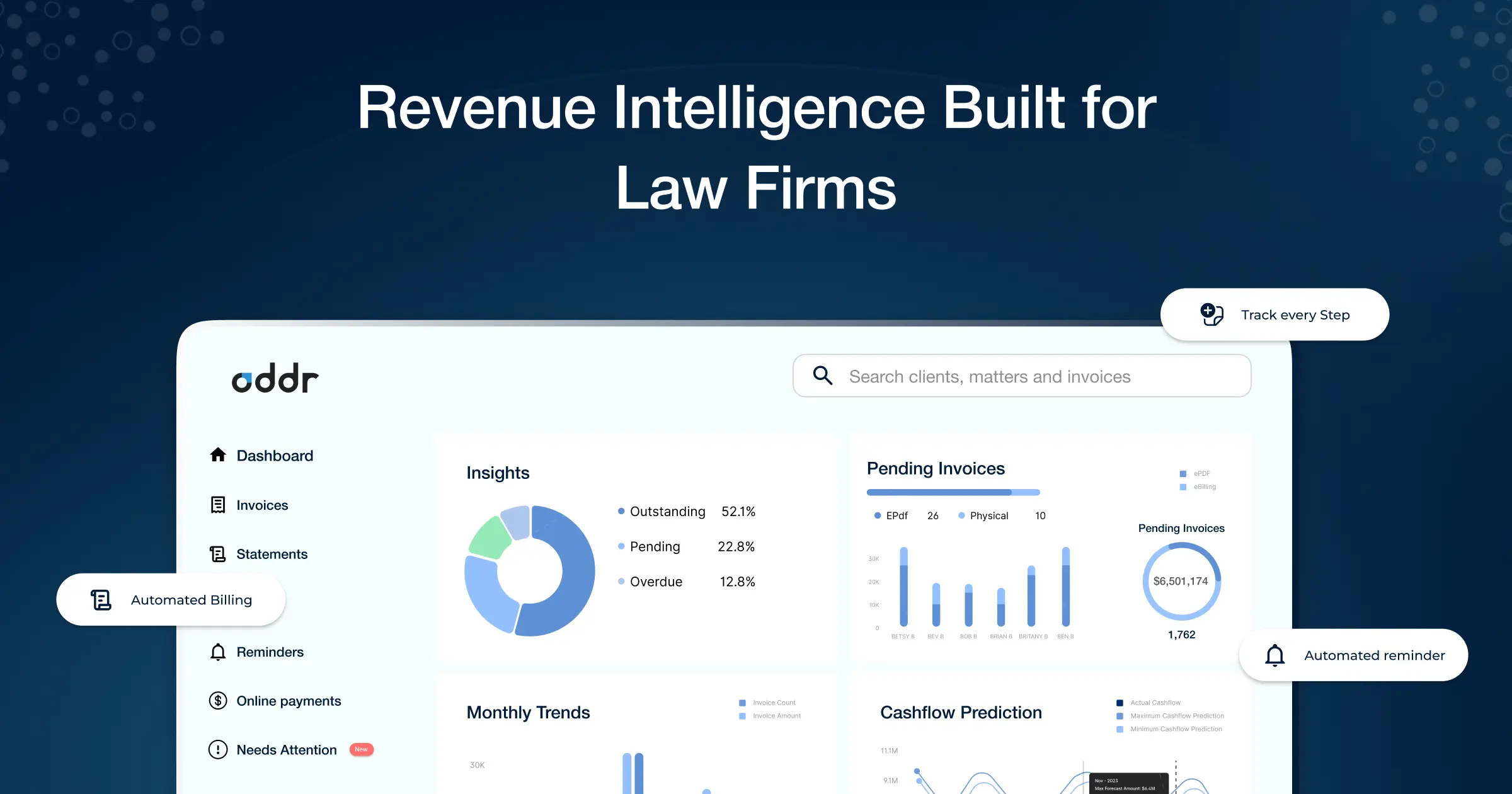

Oddr is the legal industry’s only AI-powered invoice-to-cash platform. Oddr’s AI-powered platform centralizes, streamlines, and accelerates every step of billing and collections, from bill preparation and delivery to collections and reconciliation. This enables new possibilities in analytics, forecasting, and client service that eliminate revenue leakage and increase profitability across the billing and collections lifecycle.

www.oddr.com

Jobs

9

About the company

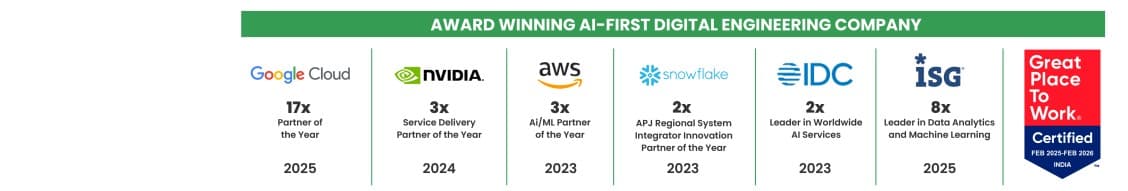

Quantiphi is an award-winning AI-first digital engineering company driven by the desire to reimagine and realize transformational opportunities at the heart of the business. Since its inception in 2013, Quantiphi has solved the toughest and most complex business problems by combining deep industry experience, disciplined cloud and data-engineering practices, and cutting-edge artificial intelligence research to achieve accelerated and quantifiable business results.

Jobs

6

About the company

Jobs

6

About the company

Jobs

2

About the company

BPO Hirings

Jobs

20

About the company

At Ampera Technologies, we empower businesses with cutting-edge data analytics, quality assurance, and data engineering solutions.

Jobs

16

About the company

Jobs

1

About the company

Jobs

55

About the company

Ande is an AI-native, full-stack TypeScript platform built on React, Node.js, GraphQL, and Postgres, running on AWS and powering web, mobile, internal operations, deep integrations, and agentic workflows.

Our product sits at the intersection of enterprise workflows, hospitality operations, payments, compliance, procurement, and AI — giving engineers the opportunity to solve problems that combine polished user experiences with complex real-world systems.

Engineering at Ande is deeply product-oriented and systems-heavy. We care about:

- Type safety and shared abstractions

- Fast iteration and observable production systems

- High-quality user experiences

- Building durable foundations for a category-defining platform

PMs and engineers work closely with the business domain, contributing directly to:

- Booking experiences

- Client entertainment policies

- Venue operations

- Spend visibility and approvals

- Payments and procurement workflows

- Enterprise integrations

- AI-driven workflows that reduce manual coordination across enterprises and hospitality partners

Founders

- Lohit Sarma

- Ashish Bidadi

- Michael McDermott

Jobs

2

About the company

The Supreme Consultancy is a leading placement consultant based in Mumbai, Maharashtra. We specialize in providing tailored solutions in Corporate Training Services, Manpower Recruitment, HR Consulting, Placement Consulting, and Overseas Placement. Our mission is to bridge the gap between talented individuals and organizations, ensuring optimal placements and career growth. With a deep understanding of industry trends and client needs, we deliver customized services that foster long-term success for both employees and employers. At The Supreme Consultancy, we are committed to excellence, professionalism, and shaping future-ready careers.

Jobs

2